
Maximum Adds per second for 1950s/early 1960s computers

Relative digital computer performance has been measured, since the mid-1960s, by timing how long it takes to execute one or more programs. Until the early 1990s Whetstone was widely used, and then SPEC brought things up to date.

Running the same program on multiple computers requires that it be written in a language that is available on those computers. Fortran, Cobol and Algol 60 started to spread at the start of the 1960s (21 Algol 60 compilers were available in 1961), but it took a while for old habits to change, and for specific programs to be accepted as reasonable benchmarks.

One early performance comparison method involved calculating a sum of instruction timings, weighted by instruction frequency. The view of computers as calculating machines meant that the arithmetic instructions add/multiply/divide were often the focus of attention.

A calculation based on instructions assumes that timings do not vary with the value of the operand (which multiply and divide often do, and addition sometimes does), that instruction time can be measured independently of the time taken to load the values from memory (which is not possible when one operand is always loaded from memory), and that instruction frequency is representative of typical applications.
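The weighted-sum calculation described above can be sketched in a few lines of C. This is a minimal illustration, not a reconstruction of any published method: the names `mix_entry`, `weighted_instruction_time` and `instructions_per_second` are hypothetical, and any weights or timings passed in are the caller's own assumptions.

```c
#include <assert.h>
#include <stddef.h>

/* One entry of a hypothetical instruction mix. */
struct mix_entry {
    const char *name;   /* instruction class, for readability only */
    double weight;      /* fraction of executed instructions, 0..1 */
    double time_us;     /* time to execute one instruction, in microseconds */
};

/* Average time per instruction: the sum of instruction timings,
   each weighted by its frequency. Weights are expected to sum to 1. */
double weighted_instruction_time(const struct mix_entry *mix, size_t n)
{
    double total = 0.0;

    assert(mix != NULL || n == 0);
    for (size_t i = 0; i < n; i++)
        total += mix[i].weight * mix[i].time_us;
    return total;
}

/* Instructions per second implied by an average time in microseconds. */
double instructions_per_second(double avg_time_us)
{
    assert(avg_time_us > 0.0);
    return 1.0e6 / avg_time_us;
}
```

For a made-up mix of 50% adds at 10µs, 25% multiplies at 40µs and 25% divides at 80µs, the weighted time is 35µs per instruction. The sketch inherits every assumption listed above: fixed timings per instruction, separable memory access time, and a representative mix.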

With regard to instruction timings, some manufacturers quoted an average, while others gave a range of values. One publication quotes arithmetic timings for specific numeric values. The “Data Processing Equipment Encyclopedia: Electronic Devices”, published in 1961 by Gille Associates, lists the characteristics of 104 computers, including the time taken to perform the arithmetic operations: addition 555555+555555, multiplication 555555*555555, and division 308641358025/555555. The results were mostly for fixed point, sometimes floating-point, or both, and once in double precision. In practice small numeric values dominate program execution. I suspect the publishers picked large values because customers think of computers as working on big/complicated problems.

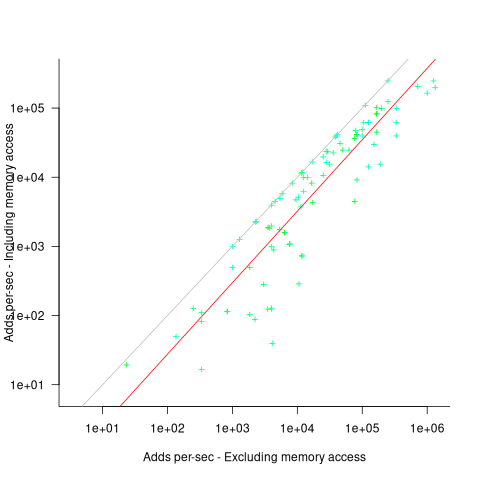

The time taken to load a value from memory can be a significant percentage of execution time, which is why processor cache has such a big impact on performance. In the 1950s main memory was often the cache, with the rest of memory held on a rotating drum. Hardware specifications often gave arithmetic instruction timings both excluding and including memory access.

The plot below shows the execution time of the Add instruction excluding/including memory access on the same computer for pre-1961 computers, with a fitted regression line and a grey reference line (code+data):

When memory access time is included in the Add instruction timing, the maximum rate of instructions per second decreases by approximately a factor of four, compared to when memory access time is excluded.

What was the frequency distribution of instructions executed by computers in the 1950s/1960s? I suspect it was a simplified form of today’s frequency distribution. Simplified in the sense of there being fewer variants of commonly used instructions and way fewer addressing modes.

Application domains were divided into scientific/engineering and commercial. One executed lots of floating-point instructions, the other executed none. One did a lot of reading/writing of punched cards/magnetic tape, the other did hardly any. If we want to compare the performance of early cpus across the decades, methods that assume a significant amount of I/O have to be ignored, or the I/O component reverse engineered out.

Kenneth Knight, in his PhD thesis (no copy online), published the most detailed and extensive analysis, and data. Knight included an I/O component in his performance formula, but this was relatively small for scientific/engineering.

The table below lists the instruction weights for scientific/engineering applications published by Knight and Arbuckle, a Manager of Product Marketing at IBM:

| Instruction or Operation | Knight | Arbuckle |
|---|---|---|
| Floating Point Add/Sub | 10% | 9.5% |
| Floating Point Multiply | 6% | 5.6% |
| Floating Point Divide | 2% | 2.0% |
| Fixed add/sub | 10% | |
| Load/Store | | 28.5% |
| Indexing | | 22.5% |
| Conditional Branch | | 13.2% |
| Miscellaneous | 72% | 18.7% |

Solomon published weights for the IBM 360 family. By focusing on a range of compatible computers the evaluation was not restricted to generic operations, and used timings from 60 different instructions.

The following analysis is based on data extracted from the 1955, 1961, and 1964 (which does not have a handy table of arithmetic instruction timings; thanks to Ed Thelen for converting the scanned images) surveys of domestic electronic digital computing systems published by the Ballistic Research Laboratory.

If the performance of computers from the 1950s/1960s is to be compared with performance in later decades, which computers from the 1950s/1960s should be included? Of the 228 computers listed in a January 1964 survey of the roughly 14k+ computing systems manufactured or operational, over 50% are bespoke, i.e., they are unique. The top 10 systems represent over 75% of manufactured systems; see table below (the IBM 604 was an electronic calculating punch, and is not listed):

| Quantity | System | Cumulative percentage |
|---|---|---|
| 5,000+ | IBM 1401 | 36% |
| 2,500+ | IBM 650 | 54% |
| 693 | IBM CPC | 59% |
| 490 | LGP 30 | 63% |
| 478 | BURROUGHS B260/B270/B280 | 66% |
| 400+ | LIBRATROL 500 | 69% |
| 300+ | BENDIX G-15 | 71% |
| 300 | CONTROL DATA 160A | 73% |
| 267 | IBM 607 | 75% |
| 210 | BURROUGHS E103/E101 | 77% |

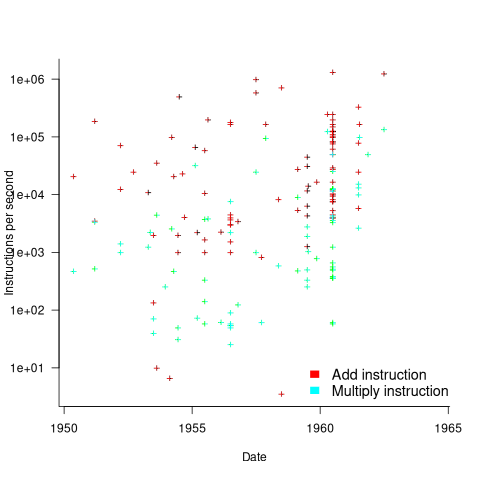

When programming in machine code, developers put a lot of effort into keeping frequently used values in registers (developers can still sometimes do a better job than compilers), and overlapping memory access with other operations. The plot below shows the maximum number of add and multiply instructions per second that could be executed without accessing storage (code+data):

The systems capable of less than ten instructions per second are essentially early desktop calculators.

What percentage of Add instructions accessed memory? As far as I can tell, none of the performance comparison reports/papers address this question. To be continued…

Program analysis via information leakage

The use of software in high value transactions has created an interesting new field of software research that investigates the leakage of information from programs. The kind of information leaked, so-called sideband information, can take various forms, including:

- The amount of time taken to perform some operation. Many developers instinctively do their best to ensure that code does not take any longer to execute than it has to. In the case of one commonly used authentication system, the time taken to fail to authenticate an encryption key provided useful information on how close one trial encryption-key was compared to another (the closer the trial key to the actual key, the longer the authentication took to fail). The obvious implementation technique to foil this kind of attack is to add random delays into the authentication process.

It has even proved possible to perform timing attacks against a remote machine over the Internet.

- Use of some part of the value of secure information, by a system library function, to create the value passed back to the caller, e.g.,

      if (secret_value & 0xf000) // Tell the caller that at least one of the top four 'secret' bits is set
         return 1;
      else
         return 0;

Researchers have been able to analyse the information flow of input values through some very large C programs.

- Analysis of network traffic routing information to work out who is talking to who. Various kinds of anonymizers have been created in an attempt to make various forms of Internet traffic untraceable.
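The timing leak described in the first item above can be sketched as follows. Here `leaky_compare` is a hypothetical early-exit comparison whose step count (a stand-in for elapsed time) grows with the number of leading bytes the trial key gets right. `fixed_work_compare` shows one widely used alternative, doing the same amount of work whatever the data. That is a different technique from the random delays mentioned above, and both functions are illustrative assumptions, not code from any particular authentication system.

```c
#include <assert.h>
#include <stddef.h>

/* Early-exit comparison: stops at the first mismatching byte, so the
   work done depends on how much of the trial key is correct.
   Returns 1 on match, 0 otherwise; *steps receives the number of
   byte comparisons performed. */
int leaky_compare(const unsigned char *key, const unsigned char *trial,
                  size_t len, size_t *steps)
{
    assert(steps != NULL);
    *steps = 0;
    for (size_t i = 0; i < len; i++) {
        (*steps)++;
        if (key[i] != trial[i])
            return 0;
    }
    return 1;
}

/* Fixed-work comparison: always examines every byte, accumulating
   differences with OR, so the step count is independent of the data. */
int fixed_work_compare(const unsigned char *key, const unsigned char *trial,
                       size_t len, size_t *steps)
{
    unsigned char diff = 0;

    assert(steps != NULL);
    *steps = 0;
    for (size_t i = 0; i < len; i++) {
        (*steps)++;
        diff |= (unsigned char)(key[i] ^ trial[i]);
    }
    return diff == 0;
}
```

An attacker who can observe the step count of `leaky_compare` learns how long a prefix of the trial key is correct, and can then guess the key one byte at a time. In production code an optimizing compiler may transform such loops, so a source-level sketch like this is not a guarantee of constant execution time.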

Any Internet program is accessible to information flow analysis. Using these techniques to analyse the search algorithm used by Google might be overly ambitious. A Google algorithm that might be within reach is the one used by Adwords; the behavior of this algorithm is of interest to a growing number of people.

Information leakage techniques are becoming more widely known and developers working on programs containing a security component now need to consider how they can prevent information being leaked to attackers who sample program behavior looking for exploitable weaknesses.
