Archive

Fishing for software data

During 2021 I sent around 100 emails whose first line started something like: “I have been reading your interesting blog post…”, followed by some background information, and then a request for software engineering data. Sometimes the request for data was specific (e.g., the data associated with the blog post), and sometimes it was a general request for any data they might have.

So far, these 100 email requests have produced one two datasets. Around 80% failed to elicit a reply, compared to a 32% no reply for authors of published papers. Perhaps they don’t have any data, and don’t think a reply is worth the trouble. Perhaps they have some data, but it would be a hassle to get into a shippable state (I like this idea because it means that at least some people have data). Or perhaps they don’t understand why anybody would be interested in data and must be an odd-ball, and not somebody they want to engage with (I may well be odd, but I don’t bite :-).

Some of those who reply, that they don’t have any data, tell me that they don’t understand why I might be interested in data. Over my entire professional career, in many other contexts, I have often encountered surprise that data driven problem-solving increases the likelihood of reaching a workable solution. The seat of the pants approach to problem-solving is endemic within software engineering.

Others ask what kind of data I am interested in. My reply is that I am interested in human software engineering data, pointing out that lots of Open source is readily available, but that data relating to the human factors underpinning software development is much harder to find. I point them at my evidence-based book for examples of human centric software data.

In business, my experience is that people sometimes get in touch years after hearing me speak, or reading something I wrote, to talk about possible work. I am optimistic that the same will happen through my requests for data, i.e., somebody I emailed will encounter some data and think of me 🙂

What is different about 2021 is that I have been more willing to fail, and not just asking for data when I encounter somebody who obviously has data. That is to say, my expectation threshold for asking is lower than previous years, i.e., I am more willing to spend a few minutes crafting a targeted email on what appear to be tenuous cases (based on past experience).

In 2022 I plan to be even more active, in particular, by giving talks and attending lots of meetups (London based). If your company is looking for somebody to give an in-person lunchtime talk, feel free to contact me about possible topics (I’m always after feedback on my analysis of existing data, and will take a 10-second appeal for more data).

Software data is not commonly available because most people don’t collect data, and when data is collected, no thought is given to hanging onto it. At the moment, I don’t think it is possible to incentivize people to collect data (i.e., no saleable benefit to offset the cost of collecting it), but once collected the cost of hanging onto data is peanuts. So as well as asking for data, I also plan to sell the idea of hanging onto any data that is collected.

Fishing tips for software data welcome.

Christmas books for 2021

This year, my list of Christmas books is very late because there is only one entry (first published in 1950), and I was not sure whether a 2021 Christmas book post was worthwhile.

The book is “Planning in Practice: Essays in Aircraft planning in war-time” by Ely Devons. A very readable, practical discussion, with data, on the issues involved in large scale planning; the discussion is timeless. Check out second-hand book sites for low costs editions.

Why isn’t my list longer?

Part of the reason is me. I have not been motivated to find new topics to explore, via books rather than blog posts. Things are starting to change, and perhaps the list will be longer in 2022.

Another reason is the changing nature of book publishing. There is rarely much money to be made from the sale of non-fiction books, and the desire to write down their thoughts and ideas seems to be the force that drives people to write a book. Sites like substack appear to be doing a good job of diverting those with a desire to write something (perhaps some authors will feel the need to create a book length tomb).

Why does an author need a publisher? The nitty-gritty technical details of putting together a book to self-publish are slowly being simplified by automation, e.g., document formatting and proofreading. It’s a win-win situation to make newly written books freely available, at least if they are any good. The author reaches the largest readership (which helps maximize the impact of their ideas), and readers get a free electronic book. Authors of not very good books want to limit the number of people who find this out for themselves, and so charge money for the electronic copy.

Another reason for the small number of good new, non-introductory, books, is having something new to say. Scientific revolutions, or even minor resets, are rare (i.e., measured in multi-decades). Once several good books are available, and nothing much new has happened, why write a new book on the subject?

The market for introductory books is much larger than that for books covering advanced material. While publishers obviously want to target the largest market, these are not the kind of books I tend to read.

Parkinson’s law, striving to meet a deadline, or happenstance?

How many minutes past the hour was it, when you stopped working on some software related task?

There are sixty minutes in an hour, so if stop times are random, the probability of finishing at any given minute is 1-in-60. If practice (based on the 200k+ time records in the CESAW dataset) the probability of stopping on the hour is 1-in-40, and for stopping on the half-hour is 1-in-48.

Why are developers more likely to stop working on a task, on the hour or half-hour?

Is this a case of Parkinson’s law, or are developers striving to complete a task within a specified time, or are they stopping because a scheduled activity takes priority?

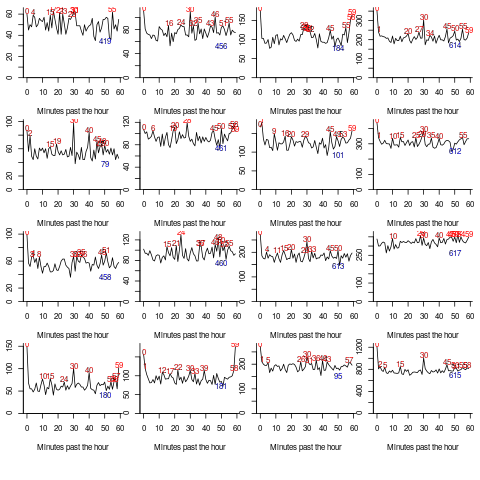

The plot below shows the number of times (y-axis) work on a task stopped on a given minute past the hour (x-axis), for 16 different software projects (project number in blue, with top 10 numbers in red, code+data):

Some projects have peaks at 50, 55, or thereabouts. Perhaps people are stopping because they have a meeting to attend, and a peak is visible because the project had lots of meetings, or no peak was visible because the project had few meetings. Some projects have a peak at 28 or 29, which might be some kind of time synchronization issue.

Is it possible to analyze the distribution of end minutes to reasonably infer developer project behavior, e.g., Parkinson’s law, striving to finish by a given time, or just not watching the clock?

An expected distribution pattern for both Parkinson’s law, and striving to complete, is a sharp decline of work stops after a reference time, e.g., end of an hour (this pattern is present in around ten of the projects plotted). A sharp increase in work stops prior to a reference time could also apply for both behaviors; stopping to switch to other work adds ‘noise’ to the distribution.

The CESAW data is organized by project, not developer, i.e., it does not list everything a developer did during the day. It is possible that end-of-hour work stops are driven by the need to synchronize with non-project activities, i.e., no Parkinson’s law or striving to complete.

In practice, some developers may sometimes follow Parkinson’s law, other times strive to complete, and other times not watch the clock. If models capable of separating out the behaviors were available, they might only be viable at the individual level.

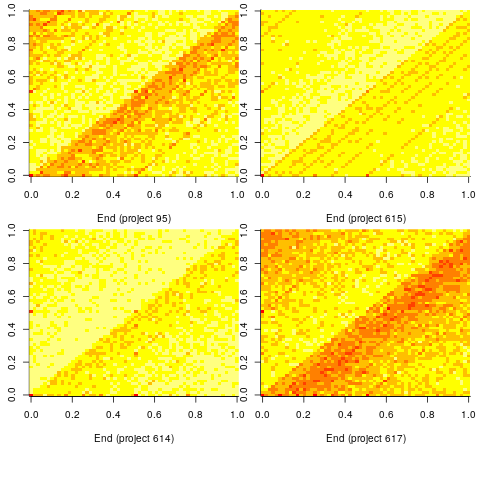

Stop time equals start time plus work duration. If people choose a round number for the amount of work time, there is likely to be some correlation between start/end minutes past the hour. The plot below shows heat maps for start fraction of hour (y-axis) against end fraction of hour (x-axis) for four projects (code+data):

Work durations that are exact multiples of an hour appear along the main diagonal, with zero/zero being the most common start/end pair (at 4% over all projects, with 0.02% expected for random start/end times). Other diagonal lines come from work durations that include a fraction of an hour, e.g., 30-minutes and 20-minutes.

For most work periods, the start minute occurs before the end minute, i.e., the work period does not cross an hour boundary.

What can be learned from this analysis?

The main takeaway is that there is a small bias for work start/end times to occur on the hour or half-hour, and other activities (e.g., meetings) cause ongoing work to be interrupted. Not exactly news.

More interesting ideas and suggestions welcome.

First understand the structure of a standard, then read it

Extracting useful information from the text in an ISO programming language standard first requires an understanding of the stylized English in which it is written.

I regularly encounter people who cite wording from the C Standard to back up their interpretation of a particular language construct. My first thought when this happens is: Do I want to spend the time explaining how the standard ‘works’ to get to the point of dealing with the topic being discussed?

I am not aware of any “How to read the C Standard” guide, or such a guide for any language.

Explaining enough detail to have a sensible conversation about the text takes, maybe, 10-30 minutes. The interpretation of text in any standard can depend on the section in which it occurs, and particular phrases can be specified to have different interpretations in different contexts. For instance, in the C Standard, a source code construct that violates a “shall” requirement specified in a “Constraints” section is about as serious as things get (i.e., it’s a mandatory compile time error), while violating a “shall” requirement specified outside a “Constraints” is undefined behavior (i.e., the compiler can do what it likes, including nothing).

New readers also get hung up on footnotes, which are a great source of confusion. Footnotes have no normative meaning; technically, they are classified as informative (their real use is providing the committee a means to include wording in the document to satisfy some interested party, without the risk of breaking the standard {because this text has no normative status}).

Sometimes a person familiar with the C++ Standard applies the interpretation rules they have learned to the C Standard. This can work in limited cases, but the fundamental differences between how the two documents are structured requires a reorientation of thinking. For instance, the C Standard specifies the behavior of source code (from which the behavior of implementations has to be inferred), while the C++ Standard specifies the behavior of implementations (from which the behavior of source code constructs has to be inferred), and the C++ Standard does not contain “Constraints” sections.

The general committee response, at least in WG14, to complaints that the language standard requires effort to understand, is that the standard is not intended as a tutorial. At least there is a prose document to read, there are forms of language specification that don’t provide this luxury. At a minimum, a language standard first needs to be read two or three times before trying to answer detailed questions.

In general, once somebody has learned to interpret one ISO Standard, the know-how does not transfer to other ISO language standards, but they have an appreciation of the need for such an understanding.

In theory, know-how is supposed to be transferable; part 2 of the ISO directives, Principles and rules for the structure and drafting of ISO and IEC documents, “… stipulates certain rules to be applied in order to ensure that they are clear, precise and unambiguous.” There are also the technical reports: Guidelines for the Preparation of Conformity Clauses in Programming Language Standards (published in 1990), which I suspect few people have read, even within the standards’ programming language community, and Guidelines for the preparation of programming language standards (unchanged since the fourth edition in 2003).

In practice: The Fortran and Cobol standards were written before people had any idea which rules might be appropriate; I think the Pascal standard appeared just before the rules were formalised. Also, all three standards were created by National bodies (US, US, and UK respectively) as National standards, and then ‘promoted’ as-is to be ISO standards. ADA was a DoD standard that got ‘promoted’, and very much did its own thing with regard to stylized English.

The post-1990 language standards visually look as if they follow the ISO rules in force at the time they were first written (Directives, part 2 is on its ninth edition), i.e., the titles of clauses match the clause numbering scheme specified by ISO rules, e.g., clause 3 specifies “Terms and definitions”. However, readers are going to need some cultural background on the use of the language by its community, to figure out the intent of the text. For instance, the 1990 revision of the Pascal Standard contains extensive use of “shall”, but it is not clear how this is to be interpreted; I used Pascal extensively for 10-years, but never studied its ISO standard, and reading it now with my C Standard expertise is a strange experience, e.g., familiar language “constraints” do not appear to be specified in the text, and the compiler does not appear to be required to flag anything.

Two of the pre-1990 language standards, Fortran and Cobol, were initially written in the 1960s, and read like they are from another age (probably because of the way they are laid out, and the fonts used). The differences are so obvious that any readers with prior experience are likely to understand that they are going to have to figure out the structure from scratch. The formatting of post-1990 Fortran Standards lacks the 1960s vibe.

Recent Comments