Archive

Giving engineers the freedom to create a customer lock-in Cloud

The Cloud looks like the next dominant platform for hosting applications.

What can a Cloud vendor do to lock customers in to their fluffy part of the sky?

I think that Microsoft showed the way with their network server protocols (in my view this occurred because of the way things evolved, not through any cunning plan for world domination). The EU/Microsoft judgment required Microsoft to document and license their server protocols; the purpose was to allow third-parties to produce Microsoft server plug-compatible products. I was an advisor to the Monitoring trustee entrusted with monitoring Microsoft’s compliance and got to spend over a year making sure the documents could be implemented.

Once most of the protocol documents were available in a reasonably presentable state (Microsoft originally considered the source code to be the documentation and even offered it to the EU commission to satisfy the documentation requirement; they eventually hired a team of several hundred to produce prose specifications), two very large hurdles to third party implementation became apparent:

- the protocols were a tangled mess of interdependencies; 100% compatibility required implementing all of them (a huge upfront cost),

- the specification of the error behavior (i.e., what happens when something goes wrong) was minimal, e.g., when something unexpected occurs one of the errors in `windows.h` is returned (when I last checked, 10 years ago, this file contained over 30,000 identifiers).

Third party plugins for Microsoft server protocols are not economically viable (which is why I think Microsoft decided to make the documents public; they had nothing to lose and could claim to be open).

A dominant cloud provider has the benefit of size; they have a huge good-enough code base. A nimbler, smaller, competitor will be looking for ways to attract customers by offering a better service in some area, which means finding a smaller, stand-alone, niche where they can add value. Widespread use of Open Source means everybody gets to see and use most of the code. The way to stop smaller competitors gaining a foothold is to make sure that the code hangs together as a whole, with no relatively stand-alone components that can be easily replaced. Mutual interdependencies and complexity create a huge barrier to new market entrants and are in the best interests of dominant vendors (yes, they create extra costs for them, but these are the price of deterring competitors).

Engineers will create interdependencies between components and think nothing of it; who does not like easy solutions to problems, and this one dependency will not hurt, will it? Taking the long term view, and stopping engineers taking short cuts for short term gain, requires a lot of effort; who could fault a Cloud vendor for allowing mutual interdependencies and complexity to accumulate over time?

Error handling is a very important topic that rarely gets the attention it deserves; nobody likes to talk about the situation where things go wrong. Error handling is the iceberg of application development: while the code is often very mundane, its sheer volume (it can be 90% of the code in an application) creates a huge lock-in. The circumstances under which a system raises an error, and the feasible recovery paths, are rarely documented in any detail; it is something that developers working at the coal face learn by trial and error.

Any vendor looking to poach customers first needs to make sure they don’t raise any errors that the existing application does not handle and second any errors they do raise need to be solvable using the known recovery paths. Even if there is error handling information available to enable third-parties to duplicate responses, the requirement to duplicate severely hampers any attempt to improve on what already exists (apart from not raising the errors in the first place).

To create an environment for customer lock-in, Cloud vendors need to encourage engineers to keep doing what engineers love to do: adding new features and not worrying about existing spaghetti code.

Ability to remember code improves with experience

What mental abilities separate an expert from a beginner?

In the 1940s de Groot studied expertise in Chess. Players were shown a chess board containing various pieces and then asked to recall the locations of the pieces. When the location of the chess pieces was consistent with a likely game, experts significantly outperformed beginners in correct recall of piece location, but when the pieces were placed at random there was little difference in recall performance between experts and beginners. Also players having the rank of Master were able to reconstruct the positions almost perfectly after viewing the board for just 5 seconds; a recall performance that dropped off sharply with chess ranking.

The interpretation of these results (which have been duplicated in other areas) is that experts have learned how to process and organize information (in their field) as chunks, allowing them to meaningfully structure and interpret board positions; beginners don’t have this ability to organize information and are forced to remember individual pieces.

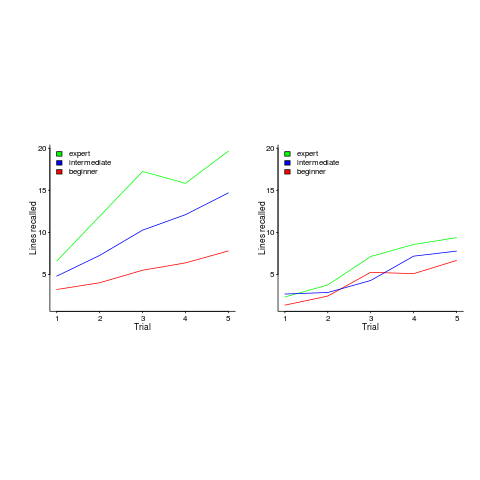

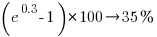

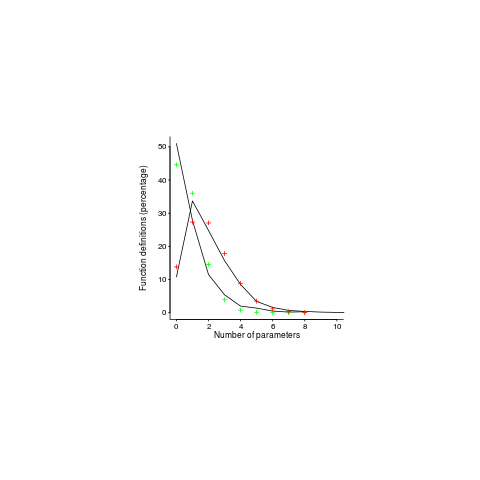

In 1981 McKeithen, Reitman, Rueter and Hirtle repeated this experiment, but this time using 31 lines of code and programmers of various skill levels. Subjects were given two minutes to study 31 lines of code, followed by three minutes to write (on a blank sheet of paper) all the code they could recall; this process was repeated five times (for the same code). The plot below shows the number of lines correctly recalled by experts (2,000+ hours programming experience), intermediates (just finished programming course) and beginners (just started programming course); left is performance using ‘normal’ code and right is performance viewing code created by randomizing lines from ‘normal’ code; only the mean values in each category are available (code+data):

Experts start off remembering more than beginners and their performance improves faster with practice.

Compared to the Power law of practice (where experts should not get a lot better, but beginners should improve a lot), this technique is a much less time consuming way of telling if somebody is an expert or beginner; it also has the advantage of not requiring any application domain knowledge.

If you have 30 minutes to spare, why not test your ‘expertise’ on this code (the .c file, not the .R file that plotted the figure above). It’s 40 odd lines of C from the Linux kernel. I picked C because people who know C++, Java, PHP, etc should have no trouble using existing skills to remember it. What to do:

- You need five blank sheets of paper, a pen, a timer and a way of viewing/not viewing the code,

- view the code for 2 minutes,

- spend 3 minutes writing down what you remember on a clean sheet of paper,

- repeat until done 5 times.

Count how many lines you correctly wrote down for each iteration (let’s not get too fussed about exact indentation when comparing) and send these counts to me (derek at the primary domain used for this blog), plus some basic information on your experience (say years coding in language X, years in Y). It’s anonymous, so don’t include any identifying information.
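Counting correct lines by eye is tedious, so here is a minimal sketch of one way to do it. It assumes that a recalled line counts as correct when it matches a line of the original after whitespace is collapsed (so indentation is ignored), and that line order does not matter; both are my assumptions, not rules from the 1981 study. The function names and the sample lines are made up for illustration.

```python
from collections import Counter


def normalize(line: str) -> str:
    """Collapse runs of whitespace so indentation differences are ignored."""
    return " ".join(line.split())


def count_recalled(original: list[str], recalled: list[str]) -> int:
    """Number of recalled lines matching a line of the original (as a multiset)."""
    wanted = Counter(normalize(l) for l in original if l.strip())
    written = Counter(normalize(l) for l in recalled if l.strip())
    # Multiset intersection: a line written twice only scores twice
    # if it also appears twice in the original.
    return sum((wanted & written).values())


# Hypothetical three-line 'original' and one recall attempt.
original = ["static int x;", "    return x;", "}"]
attempt = ["static int x;", "return  x;", "return y;"]
print(count_recalled(original, attempt))
```

Running it once per sheet of paper gives the five counts asked for above.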

I will wait a few weeks and then write up the data on this blog, as well as sharing the data.

Update: The first bug in the experiment has been reported. It takes longer than 3 minutes to write out all the code. Options are to stick with the 3 minutes or to spend more time writing. I will leave the choice up to you. In a test situation, maximum time is likely to be fixed, but if you have the time and want to find out how much you remember, go for it.

Uncertainty in data causes inconsistent models to be fitted

Does software development benefit from economies of scale, or are there diseconomies of scale?

This question is often expressed using the equation: $Effort = a \times Size^b$. If $b$ is less than one there are economies of scale, greater than one there are diseconomies of scale. Why choose this formula? Plotting project effort against project size, using log scales, produces a series of points that can be sort-of reasonably fitted by a straight line; such a line has the form specified by this equation.

Over the last 40 years, fitting a collection of points to the above equation has become something of a rite of passage for new researchers in software cost estimation; values for the exponent $b$ have ranged from 0.6 to 1.5 (not a good sign that things are going to stabilize on an agreed value).

This article is about the analysis of this kind of data, in particular a characteristic of the fitted regression models that has been baffling many researchers: why is it that the model fitted using the equation $Effort = a \times Size^b$ is not consistent with the model fitted using $Size = a' \times Effort^{b'}$, using the same data? Basic algebra requires that the equality $b = 1/b'$ be true, but in practice there can be large differences.

The data used is Data set B from the paper Software Effort Estimation by Analogy and Regression Toward the Mean (I cannot find a pdf online at the moment; Code+data). Another dataset is COCOMO 81, which I analysed earlier this year (it had this and other problems).

The difference between the exponent fitted one way round and the reciprocal of the exponent fitted the other way round (i.e., between $b$ and $1/b'$) is a result of what most regression modeling algorithms are trying to do; they are trying to minimise an error metric that involves just one variable, the response variable.

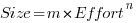

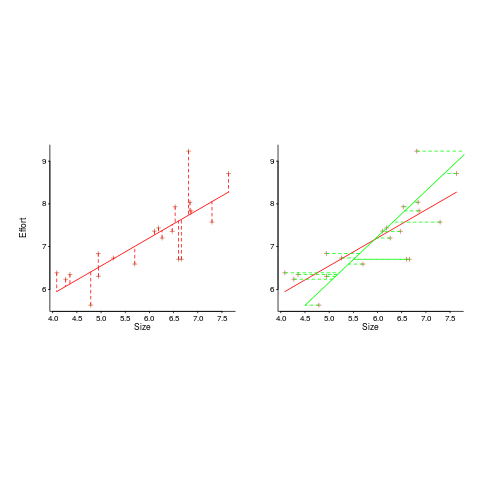

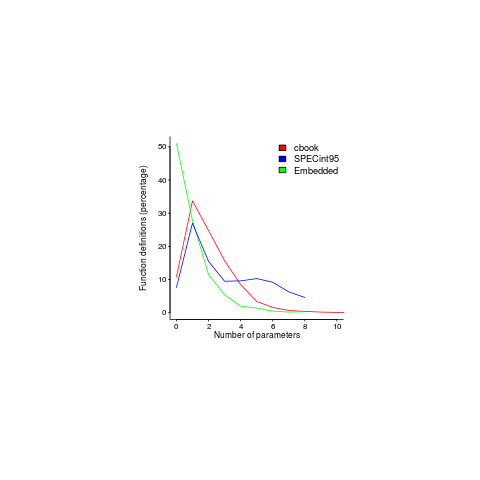

In the plot below left a straight line regression has been fitted to some Effort/Size data, with all of the error assumed to exist in the $Effort$ values (dotted red lines show the residual for each data point). The plot on the right is another straight line fit, but this time the error is assumed to be in the $Size$ values (dotted green lines show the residual for each data point, with red line from the left plot drawn for reference). Effort is measured in hours and Size in function points, both scales show the $\log$ of the actual value.

Regression works by assuming that there is NO uncertainty/error in the explanatory variable(s), it is ALL assumed to exist in the response variable. Depending on which variable fills which role, slightly different lines are fitted (or in this case noticeably different lines).
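A small sketch of the effect, using five made-up points (they are NOT taken from Data set B) and a hand-written least-squares slope. The names `ols_slope`, `b` and `b_prime` are mine. For ordinary least squares the product of the two slopes equals the squared correlation, so the two fits can only agree when the points lie exactly on a line.

```python
def ols_slope(xs, ys):
    """Least-squares slope of ys on xs (all error assumed to be in ys)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    return sxy / sxx


# Made-up log(Size) and log(Effort) values, for illustration only.
log_size = [1.0, 2.0, 3.0, 4.0, 5.0]
log_effort = [0.5, 3.5, 2.0, 4.5, 4.5]

b = ols_slope(log_size, log_effort)        # Effort is the response variable
b_prime = ols_slope(log_effort, log_size)  # Size is the response variable

print(f"b      = {b:.3f}")            # exponent when error is put in Effort
print(f"1/b'   = {1 / b_prime:.3f}")  # implied exponent when error is put in Size
print(f"b * b' = {b * b_prime:.3f}")  # squared correlation, below 1 for noisy data
```

With these points the first fit gives an exponent below one and the second implies an exponent above one, which is the same kind of disagreement described here.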

Does this technical stuff really make a difference? If the measurement points are close to the fitted line (like this case), the difference is small enough to ignore. But when measurements are more scattered, the difference may be too large to ignore. In the above case, one fitted model says there are economies of scale (i.e., $b < 1$) and the other model says the opposite (i.e., $b > 1$, diseconomies of scale).

There are several ways of resolving this inconsistency:

- conclude that the data contains too much noise to sensibly fit a straight line model (I think that after removing a couple of influential observations, a quadratic equation might do a reasonable job; I know this goes against 40 years of existing practice of do what everybody else does…),

- obtain information about other important project characteristics and fit a more sophisticated model (characteristics of one kind or another are causing the variation seen in the measurements). At the moment $Size$ information is being used to explain all of the variance in the data, which cannot be done in a consistent way,

- fit a model that supports uncertainty/error in all variables. For these measurements there is uncertainty/error in both $Effort$ and $Size$; writing the same software using the same group of people is likely to have produced slightly different Effort/Size values.

There are regression modeling techniques that assume there is uncertainty/error in all variables. These are straightforward to use when all variables are measured using the same units (e.g., miles, kilograms, etc), but otherwise require the user to figure out and specify to the model building process how much uncertainty/error to attribute to each variable.

In my Empirical Software Engineering book I recommend using simex. This package has the advantage that regression models can be built using existing techniques and then ‘retrofitted’ with a given amount of standard deviation in specific explanatory variables. In the code+data for this problem I assumed 10% measurement uncertainty, a number picked out of thin air to sound plausible (its impact is to fit a line midway between the two extremes seen in the right plot above).

Pre-Internet era books that have not yet been bettered

It is a surprise to some that there are books written before the arrival of the Internet (say 1995) that have not yet been improved on. The list below is based on books I own and my thinking that nothing better has been published on that topic may be due to ignorance on my part or personal bias. Suggestions and comments welcome.

Before the Internet the only way to find new and interesting books was to visit a large book shop. In my case these were Foyles, Dillons (both in central London) and Computer Literacy (in Silicon valley).

Foyles was the most interesting shop to visit. Its owner was somewhat eccentric, books were grouped by publisher and within these subgroups alphabetic by author, and they stocked one of everything (many decades before Amazon’s claim to fame of stocking the long tail, but unlike Amazon they did not have more than one of the popular books). The lighting was minimal, every available space was piled with books (being tall was necessary to reach some books), credit card payment had to be transacted through a small window in the basement reached via creaky stairs or a 1930’s lift. A visit to the computer section at Foyles, which back in the day held more computer books than any other shop I have ever visited, was an afternoon’s experience (the end result of tight fisted management, not modern customer experience design), including the train journey home with a bundle of interesting books. Today’s Foyles has sensible lighting, a Coffee shop and 10% of the computer books it used to have.

When they can be found, these golden oldies are often available for less than the cost of the postage. Sometimes there are republished versions that are cheaper/more expensive. All of the books below were originally published before 1995. I have listed the ISBN for the first edition when there is a second edition (it can be difficult to get Amazon to list first editions when later editions are available).

“Chaos and Fractals” by Peitgen, Jürgens and Saupe ISBN 0387979034. A very enjoyable month’s reading. A second edition came out around 2004, but does not look to be that different from the 1992 version.

“The Terrible Truth About Lawyers” by Mark H. McCormack, ISBN 0002178699. Very readable explanation of how to deal with lawyers.

“Understanding Comics: The Invisible Art” by Scott McCloud. A must read for anybody interested in producing code that is easy to understand.

“C: A Reference Manual” by Samuel P. Harbison and Guy L. Steele Jr, ISBN 0-13-110008-4. Get the first edition from 1984, subsequent editions just got worse and worse.

“NTC’s New Japanese-English Character Dictionary” by Jack Halpern, ISBN 0844284343. If you love reading dictionaries you will love this.

“Data processing technology and economics” by Montgomery Phister. Technical details covering everything you ever wanted to know about the world of 1960’s computers; a bit of a specialist interest, this one.

I ought to mention “Godel, Escher, Bach” by D. Hofstadter, which I never rated but lots of other people enjoyed.

Software architect is an illegal job title in the UK

If you are working in the UK with the job title “software architect”, or styling yourself as such, you are breaking the law. Yes, you are committing an offense under: Architects Act 1997 Part IV Section 20. In particular: “(1) A person shall not practise or carry on business under any name, style or title containing the word “architect” unless he is a person registered [F1 in Part 1 of the Register].”

The Architecture Registration Board are happy to take £142 off you, every year, for the privilege of using architect in your job title. There is also the matter of a Part 3 examination; don’t know what that is.

If you really do like the word architect in your job title and don’t want to pay £142 a year, you could move into another line of business: “(2) Subsection (1) does not prevent any use of the designation “naval architect”, “landscape architect” or “golf-course architect”.” I am assuming that the he in the wording also applies to she‘s and that a sex change will not help.

Do building architects care? I suspect not. Are the police going to do anything about it? Well, if they don’t like you and are looking for some way of hauling you before the courts, the fine is not that bad.

Fortran 2008 Standard has been updated

An updated version of ISO/IEC 1539-1 Information technology — Programming languages — Fortran — Part 1: Base language has just been published. So what has JTC1/SC22/WG5 been up to?

This latest document is a bug-fix release of the 2010 standard, known as Fortran 2008 (because the ANSI Standard from which the ISO Standard was derived, sed -e "s/ANSI/ISO/g" -e "s/National/International/g", was published in 2008) and incorporates all the published corrigenda. I must have been busy in 2008, because I did not look to see what had changed.

Actually the document I am looking at is the British Standard. BSI don’t bother with sed, they just glue a BSI Standards Publication page on the front and add BS to the name, i.e., BS ISO/IEC 1539-1:2010.

The interesting stuff is in Annex B, “Deleted and obsolescent features” (the new features are Fortranized versions of language features you have probably seen elsewhere).

Programming language committees are known for issuing dire warnings that various language features are obsolescent and likely to be removed in a future revision of the standard, but actually removing anything is another matter.

Well, the Fortran committee have gone and deleted six features! Why wasn’t this on the news? Did the committee foresee the 2008 financial crisis and decide to sneak out the deletions while people were looking elsewhere?

What constructs cannot now appear in conforming Fortran programs?

- “Real and double precision DO variables. .. A similar result can be achieved by using a DO construct with no loop control and the appropriate exit test.”

What other languages call a for-loop, Fortran calls a DO loop. So loop control variables can no longer have a floating-point type.

- “Branching to an END IF statement from outside its block.”

An if-statement is terminated by the token sequence `END IF`, which may have an optional label. It is no longer possible to `GOTO` that label from outside the block of the if-statement. You are going to have to label the statement after it.

- “PAUSE statement.”

This statement dates from the days when a computer (singular, not plural) had its own air-conditioned room and a team of operators to tend its every need. A `PAUSE` statement would cause a message to appear on the operators’ console and somebody would be dispatched to check the printer was switched on and had paper, or some such thing, and they would then resume execution of the paused program.

I think WG5 has not seen the future here. Isn’t the `PAUSE` statement needed again for cloud computing? I’m sure that Amazon would be happy to quote a price for having an operator respond to a `PAUSE` statement.

- “ASSIGN and assigned GO TO statements and assigned format specifiers.”

No more assigning labels to variables and GOTOing them, as a means of leaping around 1,000 line functions. This modern programming practice stuff is a real killjoy.

- “H edit descriptor.”

First programmers stopped using punched cards and now the H edit descriptor has been removed from Fortran; Herman Hollerith no longer touches the life of working programmers.

In the good old days real programmers wrote `11HHello World`. Using quote delimiters for string literals is for pansies.

- “Vertical format control. … There was no standard way to detect whether output to a unit resulted in this vertical format control, and no way to specify that it should be applied; this has been deleted. The effect can be achieved by post-processing a formatted file.”

Don’t panic, C still supports the `\v` escape sequence.

Student projects for 2016/2017

This is the time of year when students have to come up with an idea for their degree project. I thought I would suggest a few interesting ideas related to software engineering.

- The rise and fall of software engineering myths. For many years a lot of people (incorrectly) believed that there existed a 25-to-1 performance gap between the best/worst software developers (it’s actually around 5 to 1). In 1999 Lutz Prechelt wrote a report explaining how this myth came about (somebody misinterpreted values in two tables and this misinterpretation caught on to become the dominant meme).

Is the 25-to-1 myth still going strong or is it dying out? Can anything be done to replace it with something closer to reality?

One of the constants used in the COCOMO effort estimation model is badly wrong. Has anybody else noticed this?

- Software engineering papers often contain trivial mathematical mistakes; these can be caused by cut and paste errors or mistakenly using the values from one study in calculations for another study. Simple consistency checks can be used to catch a surprising number of mistakes, e.g., the quote “8 subjects aged between 18-25, average age 21.3” may be incorrect because 21.3*8 == 170.4, ages must add to a whole number and the values 169, 170 and 171 would not produce this average.

Psychologists are already on the case with Content Mining Psychology Articles for Statistical Test Results and there is a tool, statcheck, for automating some of the checks.

What checks would be useful for software engineering papers? There are tools available for taking pdf files apart, e.g., qpdf, pdfgrep and extracting table contents.
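As a starting point, the whole-number check on a reported mean can be sketched in a few lines. This is a minimal sketch, not an existing tool: the function name `mean_is_possible` is made up, and it assumes the underlying values are integers (e.g., ages in whole years). For the “8 subjects, average 21.3” example the verdict hinges on how 170/8 = 21.25 is rounded, so the rounding rule is a parameter.

```python
from decimal import Decimal, ROUND_HALF_UP, ROUND_HALF_EVEN
import math


def mean_is_possible(reported_mean: str, n: int, rounding=ROUND_HALF_UP) -> bool:
    """True if some whole-number total, divided by n, rounds to reported_mean."""
    mean = Decimal(reported_mean)
    # Precision of the reported value, e.g. 0.1 for "21.3".
    step = Decimal(1).scaleb(mean.as_tuple().exponent)
    approx_total = mean * n
    # Only the two whole numbers either side of mean*n need checking.
    for total in (math.floor(approx_total), math.ceil(approx_total)):
        if (Decimal(total) / n).quantize(step, rounding=rounding) == mean:
            return True
    return False


print(mean_is_possible("21.3", 8, ROUND_HALF_UP))    # 21.25 rounded half up
print(mean_is_possible("21.3", 8, ROUND_HALF_EVEN))  # 21.25 rounded half to even
```

A checker for real papers would have to guess, or try, the rounding rule the authors used, and flag a value only when no plausible rule makes it consistent.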

- What bit manipulation algorithms does a program use? One way of finding out is to look at the hexadecimal literals in the source code. For instance, source containing `0x33333333`, `0x55555555`, `0x0F0F0F0F` and `0x0000003F` in close proximity is likely to be counting the number of bits that are set, in a 32 bit value.

Jörg Arndt has a great collection of bit twiddling algorithms from which hex values can be extracted. The numbers tool used a database of floating-point values to try and figure out what numeric algorithms source contains; I’m sure there are better algorithms for figuring this stuff out, given the available data.
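For anybody who has not met the idiom, this is the well-known mask-and-add bit count that those four constants usually signal, written here in Python rather than the C it normally appears in. The function name is mine, and real code varies in the details (some versions finish with a multiply instead of the two shifts).

```python
def popcount32(x: int) -> int:
    """Count the set bits in a 32-bit value using the mask-and-add idiom."""
    x &= 0xFFFFFFFF                                  # keep to 32 bits
    x = x - ((x >> 1) & 0x55555555)                  # bit counts in 2-bit fields
    x = (x & 0x33333333) + ((x >> 2) & 0x33333333)   # sums in 4-bit fields
    x = (x + (x >> 4)) & 0x0F0F0F0F                  # sums in 8-bit fields
    x = x + (x >> 8)                                 # fold the bytes together
    x = x + (x >> 16)
    return x & 0x0000003F                            # total is at most 32


for value in (0, 1, 0xFF, 0x80000000, 0xFFFFFFFF):
    print(hex(value), popcount32(value))
```

A project could build a table mapping sets of constants like these to the algorithm they suggest, then search source code for literals that occur close together.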

Feel free to add suggestions in the comments.

Does public disclosure of vulnerabilities improve vendor response?

Does public disclosure of vulnerabilities in vendor products result in them releasing a fix more quickly, compared to when the vulnerability is only disclosed to the vendor (i.e., no public disclosure)?

A study by Arora, Krishnan, Telang and Yang investigated this question and made their data available 🙂 So what does the data have to say (it’s from the US National Vulnerability Database over the period 2001-2003)?

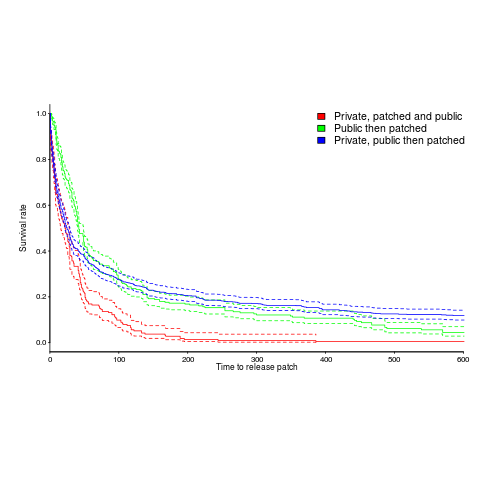

The plot below is a survival curve for disclosed vulnerabilities, the longer it takes to release a patch to fix a vulnerability, the longer it survives.

There is a popular belief that public disclosure puts pressure on vendors to release patches more quickly, compared to when the public knows nothing about the problem. Yet, the survival curve above clearly shows publicly disclosed vulnerabilities surviving longer than those only disclosed to the vendor. Is the popular belief wrong?

Digging around the data suggests a possible explanation for this pattern of behavior. Those vulnerabilities having the potential to cause severe nastiness tend not to be made public, but go down the path of private disclosure. Vendors prioritize those vulnerabilities most likely to cause the most trouble, leaving the less troublesome ones for another day.

This idea can be checked by building a regression model (assuming the necessary data is available and it is). In one way or another a lot of the data is censored (e.g., some reported vulnerabilities were not patched when the study finished); the Cox proportional hazards model can handle this (in fact, it’s the ‘standard’ technique to use for this kind of data).
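To make the idea of censoring concrete, here is a minimal sketch of the Kaplan-Meier estimator, the usual way of drawing a survival curve from censored data (I am assuming the curve plotted earlier is of this kind; it is not the Cox model itself). The function name and the four patch times are made up for illustration.

```python
def kaplan_meier(durations, observed):
    """Return [(time, survival probability)] at each time an event occurred.

    durations: days until patch (or until observation stopped, if unpatched)
    observed:  True if the patch was released, False if censored
    """
    curve = []
    survival = 1.0
    event_times = sorted(set(d for d, o in zip(durations, observed) if o))
    for t in event_times:
        # Everything not yet patched or censored before t is still at risk.
        at_risk = sum(1 for d in durations if d >= t)
        events = sum(1 for d, o in zip(durations, observed) if o and d == t)
        survival *= 1.0 - events / at_risk
        curve.append((t, survival))
    return curve


# Made-up patch times in days; the second vulnerability was still unpatched
# when observation stopped after 2 days, so it is censored.
days = [1, 2, 2, 3]
patched = [True, False, True, True]
for t, s in kaplan_meier(days, patched):
    print(f"day {t}: {s:.2f} still unpatched")
```

The censored vulnerability counts towards the number at risk up to day 2, but never counts as a patch; throwing it away, or treating it as patched, would bias the curve.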

This is a time dependent problem, some vulnerabilities start off being private and a public disclosure occurs before a patch is released, so there are some complications (see code+data for details). The first half of the output generated by R’s summary function, for the fitted model, is as follows:

    Call:
    coxph(formula = Surv(patch_days, !is_censored) ~ cluster(ID) +
        priv_di * (log(cvss_score) + y2003 + log(cvss_score):y2002) +
        opensource + y2003 + smallvendor + log(cvss_score):y2002,
        data = ISR_split)

      n= 2242, number of events= 2081

                                      coef exp(coef) se(coef) robust se       z Pr(>|z|)
    priv_di                        1.64451   5.17849  0.19398   0.17798   9.240  < 2e-16 ***
    log(cvss_score)                0.26966   1.30952  0.06735   0.07286   3.701 0.000215 ***
    y2003                          1.03408   2.81253  0.07532   0.07889  13.108  < 2e-16 ***
    opensource                     0.21613   1.24127  0.05615   0.05866   3.685 0.000229 ***
    smallvendor                   -0.21334   0.80788  0.05449   0.05371  -3.972 7.12e-05 ***
    log(cvss_score):y2002          0.31875   1.37541  0.03561   0.03975   8.019 1.11e-15 ***
    priv_di:log(cvss_score)       -0.33790   0.71327  0.10545   0.09824  -3.439 0.000583 ***
    priv_di:y2003                 -1.38276   0.25089  0.12842   0.11833 -11.686  < 2e-16 ***
    priv_di:log(cvss_score):y2002 -0.39845   0.67136  0.05927   0.05272  -7.558 4.09e-14 ***

The explanatory variable we are interested in is priv_di, which takes the value 1 when the vulnerability is privately disclosed and 0 for public disclosure. The model coefficient for this variable appears at the top of the table and is impressively large (which is consistent with popular belief), but at the bottom of the table there are interactions with other variables and the exp(coef) values are less than 1 (not consistent with popular belief). We are going to have to do some untangling.

cvss_score is a score, assigned by NIST, for the severity of vulnerabilities (larger is more severe).

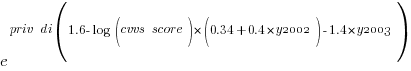

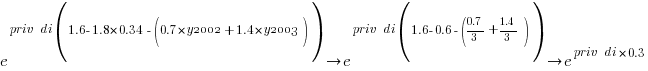

The following is the component of the fitted equation of interest:

$$e^{priv\_di \times (1.64 - 0.34 \times \log(cvss\_score) - 1.38 \times y2003 - 0.40 \times \log(cvss\_score) \times y2002)}$$

where: $priv\_di$ is 0/1, $\log(cvss\_score)$ varies between 0.8 and 2.3 (mean value 1.8), $y2002$ and $y2003$ are 0/1 in their respective years.

Applying hand waving to average away the variables gives a (hand waving mean) percentage increase of 35%, when priv_di changes from zero to one. This model is saying that, on average, patches for vulnerabilities that are privately disclosed take 35% longer to appear than when publicly disclosed.

The percentage change of patch delivery time for vulnerabilities with a low cvss_score is around 90% and for a high cvss_score is around 13% (i.e., patch time of vulnerabilities assigned a low priority improves a lot when they are publicly disclosed, but patch time for those assigned a high priority is slightly improved).
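For readers who want to poke at the numbers, this sketch evaluates the priv_di part of the fitted model for chosen values of log(cvss_score) and year, using the coefficients in the summary output above. The function name is mine. How the year and score terms were averaged to reach the percentages quoted in the text is not stated, so the sketch makes no attempt to reproduce them; it only shows how strongly the multiplier depends on those values.

```python
import math

# Coefficients copied from the summary output (terms involving priv_di only).
COEF_PRIV = 1.64451
COEF_PRIV_CVSS = -0.33790
COEF_PRIV_Y2003 = -1.38276
COEF_PRIV_CVSS_Y2002 = -0.39845


def priv_di_multiplier(log_cvss, y2002, y2003, priv_di=1):
    """exp() of the priv_di part of the model's linear predictor."""
    linear = (COEF_PRIV
              + COEF_PRIV_CVSS * log_cvss
              + COEF_PRIV_Y2003 * y2003
              + COEF_PRIV_CVSS_Y2002 * log_cvss * y2002)
    return math.exp(priv_di * linear)


years = (("2001", (0, 0)), ("2002", (1, 0)), ("2003", (0, 1)))
for log_cvss in (0.8, 1.8, 2.3):       # low, mean and high log(cvss_score)
    for year, (y2002, y2003) in years:
        m = priv_di_multiplier(log_cvss, y2002, y2003)
        print(f"log(cvss_score)={log_cvss}  {year}: multiplier {m:.2f}")
```

The multiplier is well above one in 2001 and drops below one for 2003, which is why a single averaged percentage involves so much hand waving.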

I have not calculated 95% confidence bounds, they would be a bit over the top for the hand waving in the final part of the analysis. Also the general quality of the model is very poor; Rsquare= 0.148 is reported. A better model may change these percentages.

Has the situation changed in the 15 years since the data used for this analysis? If somebody wants to piece the necessary data together from the National Vulnerability Database, the code is ready to go (ok, some of the model variables may need updating).

Update: Just pushed a model with Rsquare= 0.231, showing a 63% longer patch time for private disclosure.

p-values in software engineering

Data relating to software engineering activities is starting to become common and the results of any statistical analysis of data will include something known as the p-value.

Most of the time having a p-value below some cut-off value is a good thing, but sometimes good things occur when the value is above the cut-off (see p-values for programmers for details about what the p-value is).

A commonly encountered cut-off value is 0.05 (sometimes written as 5%).

Where did this 0.05 come from? It was first proposed in the 1920s by Ronald Fisher. Fisher’s Statistical Methods for Research Workers and later Statistical Tables for Biological, Agricultural, and Medical Research had a huge impact and a p-value cut-off of 0.05 became enshrined as the magic number.

To quote Fisher: “Either there is something in the treatment, or a coincidence has occurred such as does not occur more than once in twenty trials.”

Once in twenty was a reasonable level for an event occurring by chance (rather than as a result of some new fertilizer or drug) in an experiment in biological, agricultural or medical research in the 1900s. Is it a reasonable level for chance events in software engineering?

A one in twenty chance of a new technique resulting in a building falling down would not be considered acceptable in civil engineering. In high energy physics a p-value of around $3 \times 10^{-7}$ (five standard deviations) is used to decide whether a new particle has been discovered (or not).
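To put one in twenty and the physicists’ threshold on the same scale, this sketch converts a number of standard deviations into a one-sided tail probability of the normal distribution. The five-sigma figure is the particle physics convention for claiming a discovery; treating p-values as one-sided normal tails is an assumption made here for illustration, and the function name is mine.

```python
import math


def one_sided_p(sigma: float) -> float:
    """Upper-tail probability of a standard normal beyond `sigma` deviations."""
    return 0.5 * math.erfc(sigma / math.sqrt(2))


for sigma in (1.645, 2.0, 3.0, 5.0):
    print(f"{sigma} sigma -> p = {one_sided_p(sigma):.3g}")
```

On this scale 0.05 sits at about 1.6 standard deviations, a long way short of five.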

In business p-values should be treated as part of cost/benefit analysis. How confident are we that this effect is for real, how much would it cost to be right or wrong about it? Using a cut-off value to make yes/no decisions (e.g., 0.049 yes, 0.051 no) is very simplistic decision making.

To get a paper published in a software engineering journal requires any data analysis to have p-values below 0.05. In this regard the editors are aping journals in the social sciences; in fact the high impact social science journals require p-values below 0.01 (the high impact journals receive more submissions and can afford to be choosier about what they publish).

What is a sensible choice for a p-value cut-off in software engineering journals? The simple answer is: As low as possible, given the need to accept $N$ papers per month for publication. A more complicated answer would involve different cut-offs for different kinds of measurements, e.g., measuring people or measuring code.

While the p-value attracts plenty of criticism, there is nothing wrong with p-values. Use of p-values has a dominant market position in statistics and they are frequently misused by the clueless and those wanting to mislead their audience. Any other technique is just as likely to be misused, if not more so.

The killer phrase associated with p-values is “statistically significant”, often abbreviated to just “significant”. How people love to describe the results of their measurements as being shown to be “significant”. Of course, I am free to choose whatever p-value cut-off I like for my experiments and then claim the results are significant. I have had researchers repeatedly tell me that their results were “significant”, every time I asked them about p-values; a serious red flag.

When dealing with statistical results, ask yourself what the reported p-values mean to you. Don’t accept the “0.05 is the cut-off that everybody uses” nonsense. If the researcher won’t reveal actual p-values, walk away from the snake oil.
