
My 2025 in software engineering

Unrelenting talk of LLMs now infests all the software ecosystems I frequent.

- Almost all the papers published (week) daily on the Software Engineering arXiv have an LLM themed title. Way back when I read these LLM papers, they seemed to be more concerned with doing interesting things with LLMs than doing software engineering research.

- Predictions of the arrival of AGI are shifting further into the future, which is not difficult given that a few years ago people were predicting it would arrive within six months. Small percentage improvements in benchmark scores are trumpeted by all and sundry.

- Towards the end of the year, articles started appearing that explained AI’s bubble economics, OpenAI’s high rate of losing money, and the convoluted accounting used to fund some data centers.

Coding assistants might be great for developer productivity, but for Cursor/Claude/etc to be profitable, a significant cost increase is needed.

Will coding assistant companies run out of money to lose before their customers become so dependent on them that they have no choice but to pay much higher prices?

With predictions of AGI receding into the future, a new grandiose idea is needed to fill the void. Near the end of the year, we got to hear people who must know it’s nonsense claiming that data centers in space would be happening real soon now.

I attend one or two, occasionally three, evening meetups per week in London. Women used to be uncommon at technical meetups. This year, groups of 2–4 women have become common in meetings of 20+ people (perhaps 30% of attendees); men usually arrive individually. Almost all women I talked to were (ex) students looking for a job; this was also true of the younger (early 20s) men I spoke to. I don’t know if attending meetups has been added to the list of things to do to try and find a job.

Tom Plum passed away at the start of the year. Tom was a softly spoken gentleman whose company, PlumHall, sold a C, and then C++, compiler validation suite. Tom lived on Hawaii, and the C/C++ Standard committees were always happy to accept his invitation to host an ISO meeting. The assets of PlumHall have been acquired by Solid Sands.

Perennial was the other major provider of C/C++ validation suites. Its owner, Barry Headquist, is now enjoying his retirement in Florida.

The evidence-based software engineering Discord channel continues to tick over (invitation), with sporadic interesting exchanges.

What did I learn/discover about software engineering this year?

Software reliability research is a bigger mess than I had previously thought.

I now regularly use LLMs to find mathematical solutions to my experimental models of software engineering processes. Most go nowhere, but a few look like they have potential (here and here and here).

Analysis/data in the following blog posts, from the last 12 months, belongs in my book Evidence-Based Software Engineering, in some form or other (2025 was a bumper year):

Naming convergence in a network of pairwise interactions

Lifetime of coding mistakes in the Linux kernel

Decline in downloads of once popular packages

Distribution of method chains in Java and Python

Modeling the distribution of method sizes

Distribution of integer literals in text/speech and source code

Percentage of methods containing no reported faults

Half-life of Open source research software projects

Positive and negative descriptions of numeric data

Impact of developer uncertainty on estimating probabilities

After 55.5 years the Fortran Specialist Group has a new home

When task time measurements are not reported by developers

Evolution has selected humans to prefer adding new features

One code path dominates method execution

Software_Engineering_Practices = Morals+Theology

Long term growth of programming language use

Deciding whether a conclusion is possible or necessary

CPU power consumption and bit-similarity of input

Procedure nesting a once common idiom

Functions reduce the need to remember lots of variables

Remotivating data analysed for another purpose

Half-life of Microsoft products is 7 years

How has the price of a computer changed over time?

Deep dive looking for good enough reliability models

Apollo guidance computer software development process

Example of an initial analysis of some new NASA data

Extracting information from duplicate fault reports

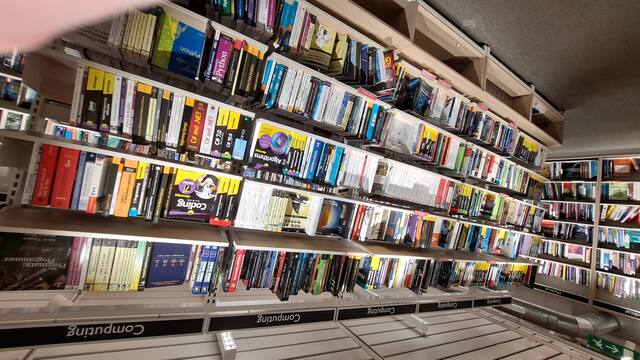

I visited Foyles bookshop on Charing Cross Road during the week (if you’re ever in London, browsing books in Foyles is a great way to spend an afternoon).

Computer books once occupied almost half a floor, but are now down to five bookcases (opposite is statistics occupying one bookcase, and the rest of mathematics in another bookcase):

Around the corner, Gender Studies and LGBTQ+ occupies seven bookcases (the same as last year, as I recall):

Programming Punched card machines

Punched card machines, or Tabulating machines, or Unit Record equipment, or according to a 1931 article Super Computing machines, were electromechanical devices that summarised information contained on punched cards (aka tabulating cards). These machines date from 1884, with the publication of Herman Hollerith’s patent application 18840923. In 1948 the electronic valve based IBM 603 calculating punch machine was launched.

The image below (from Wikipedia) shows an IBM 80 column card. When introduced in 1928, the card contained 10 rows, with rows 11 and 12 (known as zone punching positions) added later to support non-digit characters. The paper: “Do Not Fold, Spindle or Mutilate”: A Cultural History of the Punch Card takes a wry look at the social impact of these cards.

Manufacturers sold a range of single purpose Punch machines. Single purposes included: sorting cards, duplicating cards with specified changes to column contents, printing card contents, and simple accounting (adding/subtracting values).

Yes, Punched card machines can be programmed. The vast majority of machines were used by businesses for accounting and stock control, but since the early 1930s a few were used by researchers for scientific computations.

A Punch machine program consisted of cables that directed the flow of electrical signals from one to eighty output sockets (one for each of the 80 columns on a punched card), through various control/manipulation subsystems, to produce an output, e.g., printing a cheque, an itemised invoice, or creating an updated card. The input/output sockets (the terminology of the day was entry/exit sockets) for each subsystem were arranged on the machine’s Control panel (more commonly known as a plugboard).

Each plugboard contains a row of reader output sockets, one for each of the 80 card columns, a row of input sockets that connected to a printing mechanism, and sockets providing input/output for other operations. For example, a connection from, say, the 50th column of the reader output socket to the 70th column of the print input socket would print the contents of the 50th column of the card in the 70th column of the paper/card output.
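The column-to-column wiring described above can be sketched as a simple mapping. This is a minimal illustration, not a model of any particular machine: the function name `run_plugboard`, the `wiring` dictionary, and the representation of a card as an 80-character string are all assumptions made for the example.

```python
# Hypothetical model: a plugboard cable connects one reader output socket
# (card column) to one print input socket (output column).
def run_plugboard(card: str, wiring: dict[int, int], width: int = 80) -> str:
    """Return the printed line produced by routing card columns through the wiring."""
    line = [" "] * width
    for reader_col, print_col in wiring.items():
        # Columns are numbered from 1, as on the card.
        if reader_col <= len(card):
            line[print_col - 1] = card[reader_col - 1]
    return "".join(line)


# A card that is blank except for a 7 punched in column 50.
card = " " * 49 + "7" + " " * 30
wiring = {50: 70}  # one cable: reader column 50 -> print column 70
printed = run_plugboard(card, wiring)
```

With this single cable, the character in card column 50 appears in column 70 of the output line; unwired columns are left blank.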

The image below (from Wikipedia) shows connections for a program on an IBM 402:

Like many early computers, Punch machine architecture is bit-serial. That is, values are represented as a constant-duration sequence of bits (with the 12 rows of a column forming a card cycle), rather than a parallel sequence of bits (e.g., a byte) all appearing at the same time. The duration of the sequence is driven by the card reader, which moves the card (bottom to top) across a row of metal brushes (one for each column), completing an electric circuit (generating a pulse) when the brush makes contact through a hole in the card.
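A rough sketch of one card cycle may help. It assumes the read order is the bottom row (9) first and the zone rows (11 and 12) last, and it represents a card as a mapping from column number to the set of punched rows; the names `ROW_ORDER`, `card_cycle` and `decode_digits` are invented for this illustration.

```python
from typing import Iterator

# Assumed read order: bottom row (9) first, zone rows (11 and 12) last.
ROW_ORDER = ["9", "8", "7", "6", "5", "4", "3", "2", "1", "0", "11", "12"]


def card_cycle(punches: dict[int, set[str]]) -> Iterator[tuple[str, set[int]]]:
    """Yield (row, columns whose brush pulses) for each row time of one card cycle."""
    for row in ROW_ORDER:
        yield row, {col for col, rows in punches.items() if row in rows}


def decode_digits(punches: dict[int, set[str]]) -> dict[int, int]:
    """Recover each column's digit from the row time at which its pulse arrives."""
    digits = {}
    for row, cols in card_cycle(punches):
        if row in ("11", "12"):
            continue  # zone pulses carry no digit value
        for col in cols:
            digits[col] = int(row)
    return digits


# Column 1 holds 4, column 2 holds 2, column 3 holds 7 plus a zone punch.
punches = {1: {"4"}, 2: {"2"}, 3: {"7", "11"}}
digits = decode_digits(punches)
```

The point of the sketch is that a digit's value is carried by when its pulse occurs within the cycle, not by a group of bits arriving together.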

Once a card cycle completes, the bit sequences are gone (some machines could store a few column values). Some Punch machines read the same card multiple times (three is the maximum I have seen). Multiple readings make it possible to use input from the first/second reading to select the operations to be performed on the input during subsequent readings.

Multiple reads are needed when, for example, holes in the zone punching positions carry a meaning. Holes in these positions may specify an alphabetic character or a special data-specific meaning, e.g., accounting records could indicate that a column value is a credit rather than a debit by punching a hole in the 11th row of the appropriate column. To maintain backwards compatibility, the zone punching positions appear near the top of the punch card, and are read last, leaving the digit pulses unchanged. If a zone punching position has a special meaning, the first read is used to detect whether, say, the 11th row contains a hole, and the digit value is obtained on the second read (see X Elimination on page 126).
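The two-read idea can be illustrated with a small sketch. It assumes a numeric field is a list of columns, each a set of punched rows, and that a hole in row 11 of one nominated column marks the value as a credit (treated here as positive, with a debit negative). The name `read_signed_amount` and the sign convention are assumptions for the example.

```python
DIGIT_ROWS = {str(d) for d in range(10)}


def read_signed_amount(field: list[set[str]], sign_col: int) -> int:
    """Read a numeric field twice: once for the zone punch, once for the digits."""
    # First read: only check whether row 11 of the nominated column has a hole.
    is_credit = "11" in field[sign_col]

    # Second read: use the digit pulses only, ignoring any zone punches.
    amount = 0
    for rows in field:
        (digit,) = rows & DIGIT_ROWS  # assumes exactly one digit punch per column
        amount = amount * 10 + int(digit)

    return amount if is_credit else -amount


credit = read_signed_amount([{"1"}, {"2"}, {"5", "11"}], sign_col=2)
debit = read_signed_amount([{"1"}, {"2"}, {"5"}], sign_col=2)
```

The result of the first read selects what is done with the digits obtained on the second read, which is the role multiple readings play in the description above.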

Punch machine programs did not support loops. Loops are implemented by including a human in the chain of execution. The body of the loop performs a calculation on the input cards, writing the results to new cards. These new cards are moved to the input hopper, and the program run again, iterating until the desired accuracy is obtained (or not).
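The operator-driven loop can be sketched as follows. The calculation wired into the machine is assumed, purely for illustration, to be one Newton step towards a square root; `one_pass`, `operator_loop`, the tolerance and the run limit are all hypothetical.

```python
def one_pass(deck: list[float], target: float) -> list[float]:
    """One machine run: each input card produces one output card.

    The wired calculation is assumed to be a single Newton step for sqrt(target).
    """
    return [0.5 * (x + target / x) for x in deck]


def operator_loop(deck: list[float], target: float,
                  tolerance: float = 1e-9, max_runs: int = 20):
    """The human operator re-feeds the output cards until values stop changing."""
    runs = 0
    while runs < max_runs:
        new_deck = one_pass(deck, target)
        runs += 1
        converged = all(abs(n - o) <= tolerance for n, o in zip(new_deck, deck))
        deck = new_deck  # output cards are carried back to the input hopper
        if converged:
            break
    return deck, runs


deck, runs = operator_loop([1.0], target=2.0)
```

The machine itself only ever executes `one_pass`; the loop control lives entirely with the operator, who decides whether another run is worthwhile.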

Naming convergence in a network of pairwise interactions

While naming and categorizing things are perhaps the two most contentious issues in software engineering, there is often a great deal of similarity in the names and categories used by unconnected groups. These characteristics of naming and categorization are general observed behaviors across cultures and languages, with software engineering being a particular example.

Studies have found that a particular name for a thing is likely to become adopted by a group, if around 25% of its members actively promote the use of the name. The terms tipping point and critical mass have been applied to the 25% quantity.

What factors could cause 25% of the members of a group to select a particular name, and why does a tipping point occur at around this percentage?

The paper Experimental evidence for scale-induced category convergence across populations by Douglas Guilbeault (PhD thesis behind the paper), Andrea Baronchelli, and Damon Centola experimentally investigated factors that can cause a name to be adopted by 25% of a group’s members, and the researchers proposed a model that exhibits behavior similar to the experimental results (the supplement contains the technical details).

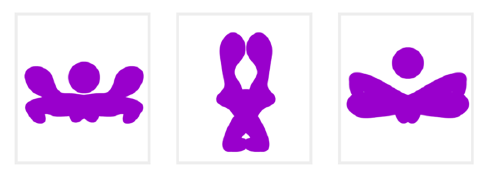

The experiment asked subjects to play the “Grouping Game”. The 1,480 online subjects were divided into networks containing either 2, 6, 8, 24 or 50 members. The interaction between members of a network only occurred via randomly selected pairs (the same pair for the network of two), with one person designated as the speaker and the other as the hearer. A pair saw three randomly selected images, such as the one below. For the speaker only, one of the images was highlighted, and they had to give a name containing at most six characters to the image. The hearer saw the name given by the speaker to one of the images, and had 30 seconds to choose the image they considered to have been named. If the image selected by the hearer was the one named by the speaker, both received a small payment, otherwise an even smaller amount was deducted from their final payment. Each subject played 100 rounds with the randomly chosen members of their network.

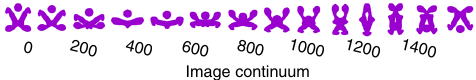

The images were created as a series of 50+ distinct patterns whose shape slowly morphed along a continuum, as in the following image:

The experimental results were that larger networks converged to a consistent, within group, naming of the images (using a few names), while smaller groups rarely converged and used many different names. The researchers proposed that as the network size grew, common names were encountered more often than rarer names, increasing the likelihood of reaching a tipping point. This behavior is similar to the birthday paradox, where there is a roughly 50% probability that in a room of 23 people, at least two people will share the same birthday.
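The birthday paradox figure is easy to check numerically. The sketch below assumes 365 equally likely birthdays and ignores leap years; the function name is invented for the example.

```python
def shared_birthday_probability(people: int, days: int = 365) -> float:
    """Probability that at least two of `people` share a birthday."""
    p_all_distinct = 1.0
    for i in range(people):
        # The (i+1)'th person must avoid the i birthdays already taken.
        p_all_distinct *= (days - i) / days
    return 1.0 - p_all_distinct


p22 = shared_birthday_probability(22)
p23 = shared_birthday_probability(23)
```

Under these assumptions, 23 is the smallest group size for which the probability exceeds one half.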

In the experiment, some networks included confederates trained to use a small subset of names, i.e., the researchers created a common set of names. It was hypothesized that built-in human preferences would produce common patterns in the real world that, for larger groups, would cause tipping points to occur, amplifying the more common patterns to become group norms.

The supplement to the paper develops a theoretical model based on the probability of a given number of identical items being contained in a fixed-size sample of items, when sampling without replacement. The solution involves the hypergeometric distribution, which is difficult to deal with analytically, so simulation is needed. The results show a tipping point at around 25%.
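For readers unfamiliar with the hypergeometric distribution, the probability of drawing a given number of identical items when sampling without replacement can be computed directly for small cases. This sketch is not the model in the supplement; the parameter names (`population`, `identical`, `sample`, `k`) are assumptions chosen for readability.

```python
from math import comb


def hypergeom_pmf(k: int, population: int, identical: int, sample: int) -> float:
    """P(exactly k identical items in a sample drawn without replacement)."""
    if k < 0 or k > sample:
        return 0.0
    # math.comb returns 0 when asked to choose more items than are available.
    return (comb(identical, k) * comb(population - identical, sample - k)
            / comb(population, sample))


# Hypothetical numbers: 50 items, 5 of them identical, sample of 10.
p_none = hypergeom_pmf(0, population=50, identical=5, sample=10)
p_at_least_one = 1.0 - p_none
total = sum(hypergeom_pmf(k, 50, 5, 10) for k in range(0, 11))
```

Single probabilities like these are easy to evaluate; it is the behaviour over many rounds of pairwise interaction that resists a closed-form treatment, hence the use of simulation.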

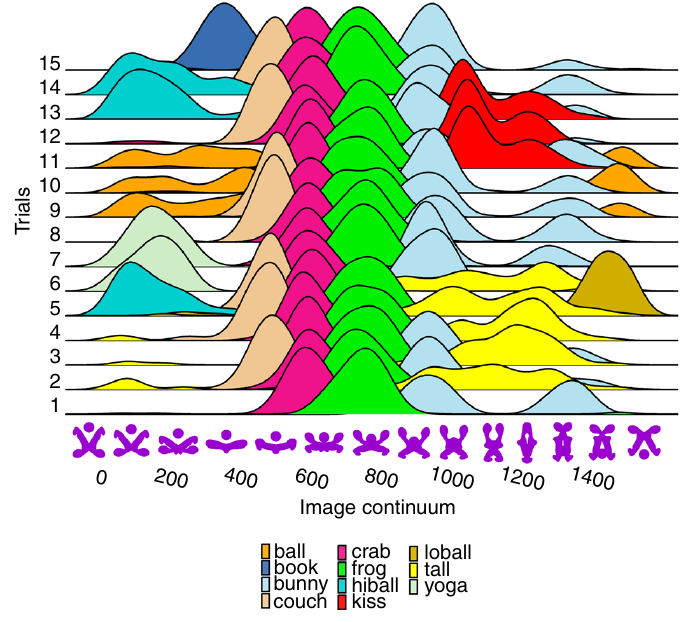

The plot below shows a density plot for one 50-subject network over 15 trials (after 100 rounds of pairwise interaction), with each color denoting one of the 14 chosen names (height of the curve denotes likelihood of the same name being chosen for that image; code and data):

This plot shows that the same name is often used across trials, and that there are naming boundaries between some images.

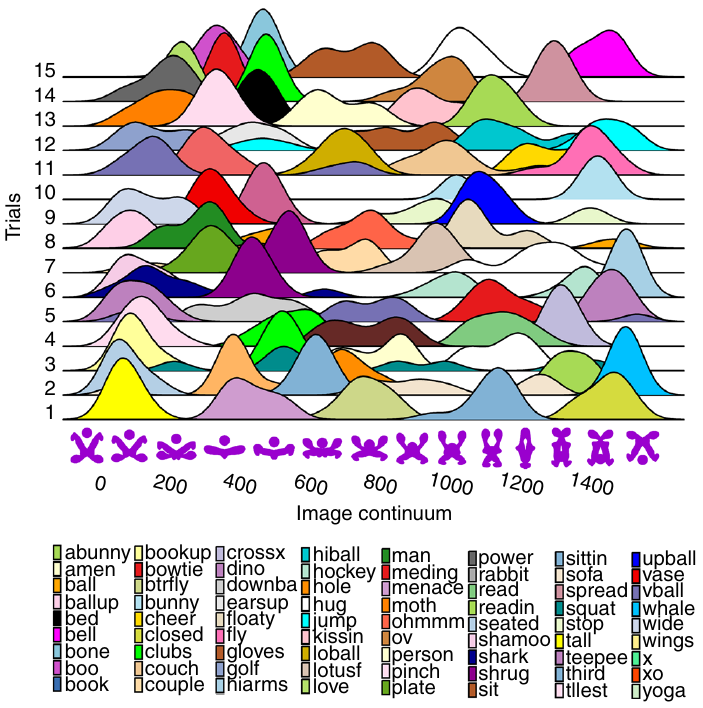

The plot below shows a density plot for one 2-subject network over 15 trials (after 100 rounds of pairwise interaction), with each color denoting one of the 72 chosen names (height of the curve denotes likelihood of the same name being chosen for that image; code and data):

Here there is no consistent naming across trials, a much greater diversity of names appears, and there are no obvious naming boundaries between images.

Christmas books for 2025

My rate of book reading has remained steady this year; however, my ability to buy really interesting books has declined. Consequently, the list of honourable mentions is longer than the main list. Hopefully my luck/skill will improve next year. As is usually the case, most books were not published this year.

Liberal Fascism: The secret history of the Left from Mussolini to the Politics of Meaning by Jonah Goldberg is reviewed in a separate post.

Oxygen: The molecule that made the world by Nick Lane, a professor of evolutionary biochemistry, published in 2016. The book discusses changes in the percentage of oxygen in the Earth’s atmosphere over billions of years and the factors that are thought to have driven these changes. The content is at the technical end of popular science writing. The author is a strong proponent that life (which over a billion or so years produced most of the oxygen in the atmosphere) originated in hydrothermal vents, not via lightning storms in the Earth’s primordial atmosphere (as suggested by the Miller–Urey experiment). The Wikipedia article on the origins of life contains a lot more words on the Miller–Urey experiment.

“By the Numbers: Numeracy, Religion and the Quantitative Transformation of Early Modern England” by Jessica Marie Otis, a professor of history, published in 2024. Here, early modern England starts around 1543 with the publication of an arithmetic textbook, The Ground of Artes, that was republished 45 times up until 1700. As the title suggests, the book discusses the factors driving the spread of numeracy into the general population, e.g., the need for traders and organizations to keep accounts, and for people to keep track of time. For the general reader, the book is rather short at 160 readable pages. Historians get to enjoy the 51 pages of notes and 37 pages of bibliography.

For insightful long, discursive book reviews that are often more interesting than the books themselves (based on those I have purchased), see: Mr. and Mrs. Psmith’s Bookshelf. This year, Astral Codex ran a Non-Book Review Contest.

The blog Worshipping the Future by Helen Dale and Lorenzo Warby continues to be an excellent read. It is “… a series of essays dissecting the social mechanisms that have led to the strange and disorienting times in which we live.” The series is a well written analysis that attempts to “… understand mechanisms of how and the why, …” of Woke.

As an aside, one of the few pop CDs I bought this year turned out to be excellent: “PARANOÏA, ANGELS, TRUE LOVE” by Christine and the Queens.

Honourable mentions

The Knowledge: How to Rebuild Our World from Scratch by Lewis Dartnell, an astrobiologist. Assuming you are among the approximately 5% of people still alive after civilization collapses (the book does not talk about this, but without industrial scale production of food, most people will starve to death), how can useful modern day items (i.e., available in the last hundred years or so) be created? Items include ammonia-based fertilizer, electricity, radio receivers and simple drugs. The processes sound a lot easier to do than they are likely to be in practice (manufacturing processes invariably make use of a lot of tacit knowledge), but then it is a popular book covering a lot of ground. It’s really a list of items to consider, along with some starting ideas.

“Goodbye, Eastern Europe: An Intimate History of a Divided Land” by Jacob Mikanowski, a historian and science writer, published in 2023. A history of Eastern Europe from the first century to today, covering the countries encircled by Germany, the Baltic Sea, Russia, and the Black Sea/Mediterranean. The story is essentially one of migrations, and mass slaughters, with the accompanying creation and destruction of cultures. Harrowing in places. It’s no wonder that the people from that part of the world cling to whatever roots they have.

“Reframe Your Brain: The User Interface for Happiness and Success” by Scott Adams of Dilbert fame, published in 2023. To quote Wikipedia: “Cognitive reframing is a psychological technique that consists of identifying and then changing the way situations, experiences, events, ideas and emotions are viewed.” This book contains around 200 reframes of everyday situations/events/emotions, with accompanying discussion. Some struck me as a bit outlandish, but sometimes outlandish has the desired effect.

Details on your best books of the year very welcome in the comments.
