Archive

Data+code for book: The New C Standard

All the data+code from my book The New C Standard: An Economic and Cultural Commentary is now available on GitHub. For many years I have been meaning to create an easy way to map from a graph/table in the book to the file containing the data, which has blocked me adding the data to GitHub. I have unblocked myself by releasing this minimum viable product, i.e., essentially a copy of the usage subdirectory in the book’s directory.

While the five-stage process to get from graph/table to data is tedious, at least there is a process that provides the data. The captions of the graphs in my Evidence-based Software Engineering book contain a link to the corresponding file on GitHub. This was not possible for the C book because GitHub was still three years in the future when the book was published (in 2005).

Work on the book started in late 1999, and measurements of C usage were an integral component. Publicly available source code was still a novelty and large Open source projects were rare (SourceForge was launched at the end of 1999). The large projects with C source available to measure were: Linux, Netscape, Gcc, PostgreSQL, OpenAFS, and OpenMotif. Several popular projects originally written in C had migrated to using C++, and were therefore not applicable.

As the book was completed in 2005, evidence-based software engineering restarted, 20 years after the fall of Rome. Or rather, I have nominated 2005 as the year this happened. Feel free to quibble plus/minus a few years.

Search engines were an essential tool for obtaining research papers, reports, and occasionally downloading data. In 2000 the search engine of choice was AltaVista, but a few years later Google had become the best.

While writing the book, I was a regular visitor to bricks and mortar buildings called libraries. Back then, university libraries contained tens of thousands of physical books, and researchers would photocopy papers of interest. Little did I know that this research practice would soon be dead.

In 2005, I had this to say about software evolution:

Measuring the characteristics of software that change over many releases (software evolution) is a relatively new research topic. Software evolution is discussed in a few sentences, and any future major revision ought to cover this important topic in substantially more detail.

How might C source code characteristics have changed in the last 20 years?

- The use of K&R style function definitions is probably very rare; it was well on the way out in 1999.

- Big software systems have gotten bigger, i.e., more lines of code and more #includes.

- A lot more code using 32-bit integers and 64-bit pointers.

- More storage allocated (memory capacity has increased) because it’s faster to do everything in memory, and there is more data.

Distribution of integer literals in text/speech and source code

Numeric values are integral to communication between people. What is the distribution of integer values in text/speech, and does the use of integer literals in source code have a similar distribution?

- The paper Numbers in Context: Cardinals, Ordinals, and Nominals in American English by Greg Woodin and Bodo Winter studied the 9+ million numbers contained in the Corpus of Contemporary American English (7,744,038 integer values). The plot below shows the number of occurrences of the smaller integer values, and a fitted regression line for values in the range 1..50 (code+data):

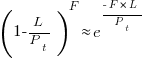

The frequency of integer values in this corpus follows a power law in the value.

- The paper Frequency of occurrence of numbers in the World Wide Web by Dorogovtsev, Mendes, and Gama Oliveira found that the number of web pages containing a given integer value declines as the value increases, with the decline for non-round numbers being roughly a power law in the value (round numbers are much more frequent than adjacent values and bias fitted models); including all values gives a different fit.
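The biasing effect of round numbers on a fitted model can be illustrated with a small sketch. Everything in it is an assumption made for illustration: the counts are synthetic (not taken from either paper), the exponent of 1.3 and the threefold boost for multiples of ten are made-up values, and the fit is an ordinary least-squares line through log(count) against log(value), which may differ from the fitting method used for the plots.

```python
import math

def fit_exponent(counts):
    """Least-squares fit of log(count) = a - exponent*log(value); returns exponent."""
    points = [(math.log(value), math.log(count)) for value, count in counts.items()]
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    return -sxy / sxx

TRUE_EXPONENT = 1.3   # hypothetical value, chosen for illustration only
ROUND_BOOST = 3.0     # hypothetical: multiples of 10 occur three times as often

# Synthetic counts for the values 1..100, with round numbers over-represented.
counts = {
    value: 1e6 * value ** -TRUE_EXPONENT * (ROUND_BOOST if value % 10 == 0 else 1.0)
    for value in range(1, 101)
}
non_round = {value: count for value, count in counts.items() if value % 10 != 0}

exponent_all = fit_exponent(counts)
exponent_non_round = fit_exponent(non_round)
print(f"all values: {exponent_all:.3f}   non-round only: {exponent_non_round:.3f}")
```

With these synthetic counts, the fit to the non-round values recovers the exponent used to generate them, while the fit to all values gives a smaller exponent because most of the boosted round numbers sit towards the large-value end of the range.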

Programs are an implementation of a sliver of the world in which people live, and it is to be expected that the frequency of numeric literal values in source code is highly correlated with real world frequency. Numeric values also appear in the algorithms and mathematical expressions used to create implementations. I am not aware of any studies looking at the frequency of use of numeric constants in algorithms and mathematics. As an aside, the frequency of occurrence of mathematical expressions containing a given number of operators is similar to that in C source.

What are the usage characteristics of integer literals in source code (floating-point literal use is very rare outside of particular application domains)?

The plot below shows occurrences of decimal (green) and hexadecimal (blue) literals in C source (data from fig 825.1 from my C book) with a regression line fitted to values 1..50 of the decimal data (code+data):

The frequency of decimal literal values in C source follows a power law in the value. Adding the hexadecimal values to the model has little effect.

The paper What do developers consider magic literals? A smalltalk perspective by Anquetil, Delplanque, Ducasse, Zaitsev, Fuhrman, and Guéhéneuc studied the use of literals in Smalltalk. The plot below shows the number of occurrences of all kinds of integer literals and a fitted regression line (code+data):

The frequency of integer literal values in Smalltalk source is proportional to: $value^{-1.7}$.

The distribution of integer literals in both human communication and source code is well-fitted by a power law. Smalltalk appears to be the outlier, with an exponent of 1.7 vs 1.3-1.4. Perhaps it’s a sample size issue; 14,054 integer literals for Smalltalk and a million+ for the other datasets.
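One hedged way to probe the sample-size explanation is to draw samples of both sizes from the same power law and compare the fitted exponents. The sketch below is an idealised experiment, not a reanalysis of any of the datasets: the generating exponent (1.4), the range of values sampled (1..1000), the random seed, and the use of a least-squares fit to log counts for the values 1..50 are all assumptions.

```python
import math
import random
from collections import Counter

def fit_exponent(counts, max_value=50):
    """Least-squares slope of log(count) against log(value) for 1..max_value."""
    points = [(math.log(v), math.log(counts[v]))
              for v in range(1, max_value + 1) if counts.get(v, 0) > 0]
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    return -sxy / sxx   # frequency is modelled as value**(-exponent)

def sample_counts(exponent, sample_size, rng, largest=1000):
    """Count occurrences in a random sample drawn from a power law on 1..largest."""
    values = range(1, largest + 1)
    weights = [v ** -exponent for v in values]
    return Counter(rng.choices(values, weights=weights, k=sample_size))

rng = random.Random(1)      # fixed seed so that runs are repeatable
TRUE_EXPONENT = 1.4         # assumed generating exponent

small_fit = fit_exponent(sample_counts(TRUE_EXPONENT, 14_054, rng))
large_fit = fit_exponent(sample_counts(TRUE_EXPONENT, 1_000_000, rng))
print(f"n=14,054: {small_fit:.2f}   n=1,000,000: {large_fit:.2f}")
```

Repeating the smaller draw with different seeds gives a feel for how much the fitted exponent can wander at that sample size under these assumptions; it says nothing about other differences between the datasets, such as which literals were collected.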

I had expected source code to contain a lot more zeroes/ones, relative to other values, than human communication. Zero/one are such common values that there are implicit short-cuts that people can use to express them; removing the effort/cost needed to explicitly specify them. Some programming languages specify default 0/1 values for common idioms, but C-like languages generally require explicit specification of values.

ISO C++ committee has a new chief sheep herder

The ISO C++ Standards committee, WG21, has a new convenor, Guy Davidson, or rather they will have when the term of the current convenor, Herb Sutter, expires at the end of this year.

Apart from the few people directly involved, this appointment does not matter to anybody (sorry Guy). The WG21 juggernaut will continue on its hedonistic way, irrespective of who is currently the chief sheep herder.

Before discussing the evolution of language standards, a brief summary of the unusual points around this appointment:

- More than one person volunteered for the job (several in the US, who selected Jeff Garland, and one in the UK; everyone agreed that both were capable candidates). The announcement by a programming language convenor that they are not standing again when their 3-year term expires more commonly kicks off discreet discussions about whose arm can be twisted to take on the role. It’s a thankless task that consumes time and money (to attend extra meetings). Also, the convenor has to be neutral, which circumscribes being involved in technical discussion.

Sometimes an outsider pops up, ruffles a few feathers and then disappears (from the Standards’ world).

- One of the SC22 (the ISO committee responsible for programming languages) convenor selection rules says (see Resolution 14-04): “When a WG Convenorship becomes vacant, … and multiple NBs have each nominated a candidate, the Convenorship shall be assigned to the candidate whose NB currently has the fewest SC 22 Convenors.” Currently, the US holds multiple convenorships and the UK holds none, so the UK nominee is appointed.

As often happens, people like diversity rules until they lose out. The US submitted a selection procedural change to SC22, and asked that it take effect before the selection of a new WG21 convenor. The overwhelming consensus at the SC22 plenary last Monday was not to change the rules while an election was in progress. An ad-hoc committee was set up to consider changes to the current rules.

End of the news and back to regular postings.

Standards committees for programming languages are now a vestige from a bygone era. The original purpose of standards was to reduce costs (the UK focused on savings achieved through repeated use of standardized items and the US focused on reduced training costs) by having companies manufacture products that conformed to a single specification.

There were once a multitude of implementations for the commercially important languages, each supporting slightly different dialects (the differences were sometimes not so slight). Language standards provided a base specification for developers interested in portable code to keep within, and that vendors could be pressured to support.

The spread of Open source compilers significantly reduced the need for companies to invest in maintaining their own compiler (there might be strategic reasons for companies selling hardware or operating systems to continue to invest in their own compiler), and reduced the likelihood that customers of commercial compiler companies would continue to pay for updates (effectively driving most compiler companies out of business).

Language standards are redundant in a monoculture, i.e., where only one compiler per language is widely used. For some years now, there have been a handful of actively maintained compilers for the widely used languages.

These days, conformance to a language standard is measured by the ability of an implementation to compile and execute the Open source software available in the various ecosystems.

As has often been observed, committees find work to keep themselves busy, and I have seen announcements for new ISO committees that look like they were created because somebody saw a CV padding opportunity.

I continue to think that the C++ committee has become a playground for bored consultants looking for a creative outlet.

WG21 meeting attendance continues to grow, now attracting 200+ attendees (Grok undercounts, e.g., 140 vs 215, and ChatGPT 5 is completely out of its depth). This is an order of magnitude greater than the C committee, WG14, and in a few years could be two orders of magnitude greater than the other SC22 languages.

The two major C/C++ compiler vendors (i.e., gcc and llvm) could simply go their own way, with regard to new language features. However, I imagine that “supporting the latest version of the language standard” is a great rationale to use when asking for funding.

How large can WG21 become before it collapses under the weight of members and the papers they write?

The POSIX standard, WG15, meetings often had 200-300 attendees in the late 1980s/early 1990s. But the POSIX committee stuck to its goal of specifying existing practice, and so has faded away.

Guy strikes me as an efficient administrator. Which is probably bad news, in the sense that this could enable WG21 to grow a lot larger. Whatever happens, it will be interesting to watch.

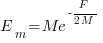

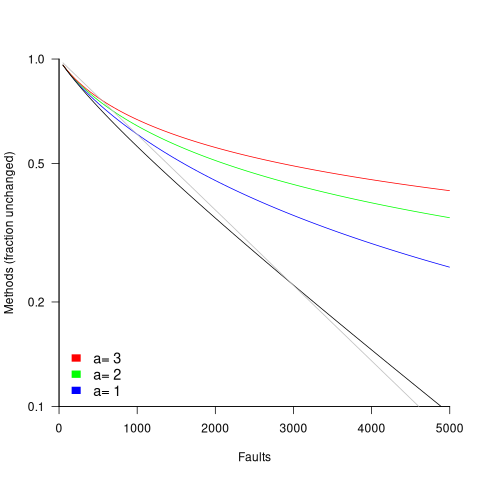

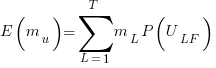

… in lines of code, of the method. The evidence shows that the … ).

… reported faults have been fixed in a program containing … methods/functions, what is the expected number of methods that have not been modified by the fixing process?
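The question of how many methods remain unmodified can be explored numerically. The following is a minimal sketch under assumptions that are mine rather than taken from the surviving text: each fix modifies exactly one method, fixes are independent, and the chance that a given fix lands in a particular method is its length divided by the total lines of code in the program. The function name and the example program are hypothetical.

```python
def expected_unmodified(methods_by_length, fixes):
    """Expected number of methods untouched after `fixes` fault fixes.

    methods_by_length maps a method length (lines of code) to the number
    of methods having that length.  Assumes each fix modifies one method,
    chosen with probability proportional to the method's length.
    """
    total_lines = sum(length * count
                      for length, count in methods_by_length.items())
    # A method of a given length escapes one fix with probability
    # 1 - length/total_lines, and escapes every fix with that value
    # raised to the power of the number of fixes.
    return sum(count * (1 - length / total_lines) ** fixes
               for length, count in methods_by_length.items())

# Hypothetical program: many short methods and a few long ones.
program = {5: 60, 20: 30, 100: 10}
for fixes in (0, 50, 500):
    print(fixes, round(expected_unmodified(program, fixes), 1))
```

Under these assumptions long methods are modified early, while short methods can survive many fixes untouched. A model in which previously modified methods are more likely to be modified again would need a different per-fix probability.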

… is the … is the … for Java. ( … , where … is the number of previously detected coding mistakes in the method. … is the average value of … over all … , then … for a power law with exponent 2.35).

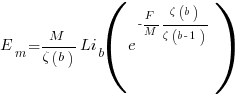

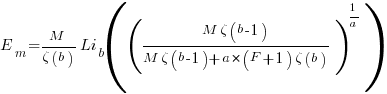

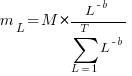

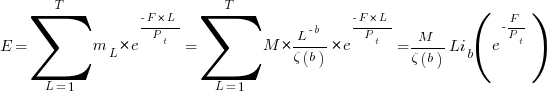

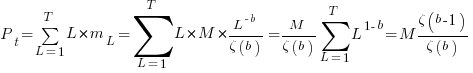

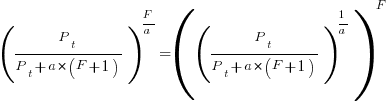

… , is: … , where … is the length of the longest method, … is the number of methods of length … is the probability that a method of length … , where … , then the sum can be approximated by the …

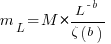

, where

, where  is the total lines of code in the program, and the probability of this method not being modified after

is the total lines of code in the program, and the probability of this method not being modified after

the average value of

the average value of  is the

is the  we get:

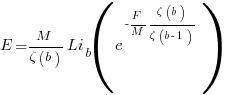

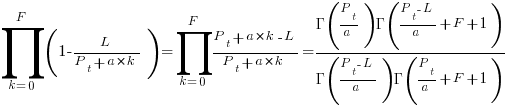

we get:

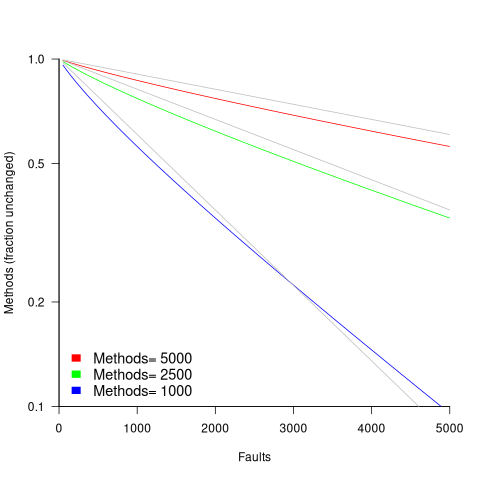

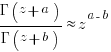

… is the preferential attachment version of the expression … appearing in the simple model derivation. Using this preferential attachment expression in the analysis of the simple model, we get:
