Archive

Public documents/data on the internet sometimes disappears

People are often surprised when I tell them that documents/data regularly disappear from the internet. By disappear, I mean: no links to websites hosting the data are returned by popular search engines, nothing on the Wayback Machine (including archive.org, which now has a Scholar page), and nothing on LLM-suggested locations.

There is the drip-drip-drip of universities deleting the webpages they host of academics who leave the university (MIT is one of the few exceptions). Researchers often provide freely downloadable copies of their own papers via these pages, which may be the only free access (research papers are generally available behind a paywall). It’s great that the ACM has gone fully Open Access.

Datasets that were once publicly available on government or corporate sites sometimes just disappear. Two ‘missing’ datasets I have written about are the DACS dataset and the Linux Counter data. This week, I found out that the detailed processor price lists that Intel used to publish have now disappeared from the web (one site hosts a dozen or so price lists; please let me know if you have any of these price lists).

I have lots of experience asking researchers for a copy of the data analysed in a paper they wrote, to be told something along the lines of “It’s on my old laptop”, i.e., disappeared.

It is to be expected that data from pre-digital times will only sometimes be available online. My interest in tracking the growth of digital storage has led to a search for details of annual sales of punched cards. I’m hoping that a GitHub repo of known data will attract more data.

The sites where researchers host the data analysed in their papers include (ordered in roughly the frequency I encounter them): GitHub, personal page, Zenodo, Figshare and the Center for Open Science.

Some journals offer a data hosting option for published papers. Access to this data can be problematic (e.g., agreeing to an overly restrictive license), or the link to the data might be dead (one author I contacted was very irate that the journal was not hosting the data he had carefully curated, after they had assured him they were hosting it).

Research papers are connected by a web of citations. Being able to quickly find cited/citing papers makes it possible to do a much more thorough search of related work, compared to traditional manual methods. When it launched in 1997, CiteSeer was a revelation (it probably doubled the citations in my C book). Many non-computing papers could still only be found in university libraries, but by 2013 I no longer bothered visiting university libraries. ResearchGate launched in 2008, and in 2010 Semantic Scholar arrived. Unfortunately, the functionality of both CiteSeerX and Semantic Scholar is a shadow of what it once was. ResearchGate continues to plod along, and Google Scholar has slowly gotten better and better, to become my paper search site of choice.

Keep a copy of your public documents/data on an Open access repository (e.g., GitHub, Zenodo, Figshare, etc). By all means make a copy available on your personal pages, but remember that these are likely to disappear.

How did Agile become the product development zeitgeist?

From the earliest days of computing, people/groups have proposed software development techniques, claiming them to be effective/productive ways of building software systems. Agile escaped this well of the widely unknown to become the dominant umbrella term for a variety of widely used software development methodologies (I’m talking about the term Agile, not any of the multitude of techniques claiming to be the true Agile way). How did this happen?

The Agile Manifesto was published in 2001, just as commercial use of the Internet was going through its exponential growth phase.

During the creation of a new market, as the Internet then was, there are no established companies filling the various product niches; being first to market provides an opportunity for a company to capture and maintain a dominant market share. Having a minimum viable product, for customers to use today, is critical.

In a fast-growing market, product functionality is likely to be fluid until good enough practices are figured out, i.e., there is a lack of established products whose functionality new entrants need to match or exceed.

The Agile Manifesto’s principles of early, continuous delivery, and welcoming of changing requirements are great strategic advice for building products in a new fast-growing market.

Now, I’m not saying that the early Internet based companies were following a heavy process driven approach, discovered Agile and switched to this new technique. No.

I’m claiming that the early Internet based companies were releasing whatever they had, with a few attracting enough customers to fund further product development. Based on customer feedback, or not, support was added for what were thought to be useful new features. If the new features kept/attracted customers, the evolution of the product could continue. Did these companies describe their development process as throw it at the wall and see what sticks? Claiming to be following sound practices, such as doing Agile, enables a company to appear to be in control of what they are doing.

The Internet did more than just provide a new market, it also provided a mechanism for near instantaneous zero cost product updates. The time/cost of burning thousands of CDs and shipping them to customers made continuous updates unrealistic, pre-Internet. Low volume shipments used to be made to important customers (when developing a code generator for a new computer, I sometimes used to receive OS updates on a tape, via the post-office).

The Agile zeitgeist comes from its association with many, mostly Internet related, successful software projects.

While an Agile process works well in some environments (e.g., when the development company can decide to update the software, because they run the servers), it can be problematic in others.

Agile processes are dependent on customer feedback, and making updates available via the Internet does not guarantee that customers will always install the latest version. Building software systems under contract, using an Agile process, only stands a chance of reaping any benefits when the customer is a partner in the same process, e.g., not using a Waterfall approach like the customer did in the Surrey police SIREN project.

Agile was in the right place at the right time.

Source code will soon need to be radiation hardened

I think I have discovered a new kind of program testing that may soon need to be performed by anybody wanting to create ultra-reliable software.

A previous post discussed the compiler related work being done to reduce the probability that a random bit-flip in the memory used by an executing program will result in a change in behavior. At the moment, 4G of RAM is expected to experience 1 bit-flip every 33 hours due to cosmic rays, and the rate of occurrence is likely to increase.
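To get a feel for what the quoted rate means for other memory sizes, here is a minimal sketch. It assumes the bit-flip rate scales linearly with the amount of memory, which is an illustrative assumption and not something claimed by the work referred to above; the function names are hypothetical.

```python
# Quoted figure: 4G of RAM experiences about 1 bit-flip every 33 hours.
QUOTED_MEMORY_G = 4
QUOTED_HOURS_PER_FLIP = 33


def hours_between_flips(memory_g: float) -> float:
    """Expected hours between bit-flips for memory_g gigabytes of RAM.

    ASSUMPTION: the flip rate is proportional to the amount of memory.
    """
    return QUOTED_HOURS_PER_FLIP * QUOTED_MEMORY_G / memory_g


def flips_per_year(memory_g: float) -> float:
    """Expected bit-flips in a 365-day year, under the same assumption."""
    return 365 * 24 / hours_between_flips(memory_g)


if __name__ == "__main__":
    for size in (4, 16, 64):
        print(f"{size}G: one flip every {hours_between_flips(size):.2f} hours, "
              f"roughly {flips_per_year(size):.0f} per year")
```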

Random corruptions on communications links are protected against by various kinds of CRC checks. But these checks don’t catch every corruption; some get through.
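As an illustration of a corruption slipping past a check, the sketch below uses the 16-bit ones' complement Internet checksum (the kind carried in TCP headers; it is a simple sum, not a CRC). Because addition is commutative, swapping two 16-bit words leaves the checksum unchanged, while a single bit-flip is detected. The function name and the sample byte strings are chosen purely for illustration.

```python
def internet_checksum(data: bytes) -> int:
    """16-bit ones' complement checksum over data (in the style of RFC 1071)."""
    if len(data) % 2:
        data += b"\x00"          # pad odd-length input with a zero byte
    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]   # add each big-endian 16-bit word
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)  # fold carries back in
    return ~total & 0xFFFF


original = b"akamai.net"
one_bit_flipped = b"ikamai.net"   # 'a' (0x61) -> 'i' (0x69): one bit differs
words_swapped = b"amakai.net"     # first two 16-bit words exchanged

if __name__ == "__main__":
    print(internet_checksum(original) == internet_checksum(one_bit_flipped))
    print(internet_checksum(original) == internet_checksum(words_swapped))
```

The first comparison is false (the single flip is caught) and the second is true (the swap goes unnoticed).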

Research by Artem Dinaburg looked for, and found, occurrences of bit-flips in domain names appearing within HTTP requests, e.g., a page from the domain ikamai.net being requested rather than from akamai.net. A subsequent analysis of DNS queries to Verisign’s name servers found “… that bit-level errors in the network are relatively rare and occur at an expected rate.” (the bit errors were thought to occur inside routers and switches).
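The ikamai.net/akamai.net example differs by a single bit ('a' is 0x61, 'i' is 0x69). A minimal sketch of enumerating every name reachable from a domain by one bit-flip follows; the function name is hypothetical, and the validity rules are deliberately simplified (lowercase letters, digits and hyphens only, dots left untouched, case-only changes ignored because DNS names are case-insensitive).

```python
import string

# Characters accepted inside a label in this simplified sketch.
LABEL_CHARS = set(string.ascii_lowercase + string.digits + "-")


def single_bit_flip_domains(domain: str) -> set[str]:
    """Return the plausible domain names that differ from domain by one flipped bit."""
    raw = domain.lower().encode("ascii")
    variants = set()
    for pos, byte in enumerate(raw):
        if byte == ord("."):
            continue                       # keep the label structure intact
        for bit in range(8):
            ch = chr(byte ^ (1 << bit)).lower()
            if ch not in LABEL_CHARS:
                continue                   # not a character usable in a label
            candidate = domain.lower()[:pos] + ch + domain.lower()[pos + 1:]
            if candidate == domain.lower():
                continue                   # only the case changed
            if any(l.startswith("-") or l.endswith("-") for l in candidate.split(".")):
                continue                   # labels may not start or end with a hyphen
            variants.add(candidate)
    return variants


if __name__ == "__main__":
    names = single_bit_flip_domains("akamai.net")
    print(len(names), "ikamai.net" in names)
```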

JavaScript is the web scripting language supported by all the major web browsers, and the source code of JavaScript programs is transmitted, along with the HTML, for requested web pages. The amount of JavaScript source can dwarf the amount of HTML in a web page; measurements from four years ago show users of Facebook, Google Maps and Gmail receiving 2M bytes of JavaScript source when visiting those sites.

If all the checksums involved in TCP/IP transmission are enabled, the theoretical error rate is 1 in $10^{17}$ bits. For 1 billion users visiting Facebook on average once per day and downloading 2M of JavaScript per visit, that is an expected bit-flip rate of once every 5 days somewhere in the world; not really something to worry about.
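The arithmetic behind this estimate can be sketched as follows. The undetected error rate of 1 in 10^17 bits is an assumed value here, and 2M is taken to mean 2×10^6 bytes; the function name is hypothetical. With these inputs the result is 6.25 days, the same order as the five days quoted above.

```python
def days_between_errors(users: int, visits_per_day: float,
                        bytes_per_visit: int, bits_per_error: int) -> float:
    """Expected days between undetected bit errors, summed over all users."""
    bits_per_day = users * visits_per_day * bytes_per_visit * 8
    return bits_per_error / bits_per_day


if __name__ == "__main__":
    days = days_between_errors(
        users=10**9,               # 1 billion users
        visits_per_day=1,          # one visit per user per day
        bytes_per_visit=2 * 10**6, # 2M bytes of JavaScript (assumed decimal)
        bits_per_error=10**17,     # ASSUMED theoretical rate: 1 error per 10^17 bits
    )
    print(f"one undetected bit-flip every {days:.2f} days")
```

Making the error rate ten times worse reduces the interval to well under a day, which is the direction the following paragraph worries about.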

There is plenty of evidence that the actual error rate is much higher (because, for instance, some checksums are not always enabled; see papers linked to above). How much worse does the error rate have to get before developers need to start checking that a single bit-flip to the source of their JavaScript program does not result in something nasty happening?

What we really need is a way of automatically radiation hardening source code.
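A first step towards the kind of testing suggested above is to enumerate every single bit-flip of a program's source and see which mutants are still accepted by the parser; those are the ones that would run with silently changed behavior. The sketch below uses Python source and Python's built-in `compile` as a stand-in for a JavaScript parser, purely so the example is self-contained; the function names are hypothetical.

```python
from typing import Iterator


def bit_flip_mutants(source: bytes) -> Iterator[bytes]:
    """Yield every variant of source that differs from it by exactly one bit."""
    for pos in range(len(source)):
        for bit in range(8):
            mutant = bytearray(source)
            mutant[pos] ^= 1 << bit
            yield bytes(mutant)


def still_parses(source: bytes) -> bool:
    """True if source is valid UTF-8 and syntactically valid Python."""
    try:
        compile(source.decode("utf-8"), "<mutant>", "exec")
    except (SyntaxError, ValueError):   # ValueError also covers decode errors
        return False
    return True


def surviving_mutants(source: bytes) -> list[bytes]:
    """Single bit-flip mutants that a parser would accept without complaint."""
    return [m for m in bit_flip_mutants(source) if still_parses(m)]


if __name__ == "__main__":
    program = b"total = price * quantity\n"
    survivors = surviving_mutants(program)
    print(f"{len(survivors)} of {8 * len(program)} mutants still parse")
    for mutant in survivors[:5]:
        print(mutant)
```

Parsing is only the cheapest filter; deciding whether a surviving mutant does "something nasty" would need the program's tests, or some other oracle, to be run against it.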

Amount of end-user usage of code in Firefox

How much end-user usage does the code in Firefox receive over time?

Short answer: The available data is very sparse and lots of hand waving is needed to concoct something.

The longer answer is below as another draft section from my book Empirical software engineering with R. As always, comments and pointers to more data welcome. R code and data here.

Suggestions for alternative methods of calculation also welcome.

Amount of end-user usage of code in Firefox

Source code that is never executed will not have any faults reported against it while code that is very frequently executed is more likely to have a fault reported against it than less frequently executed code.

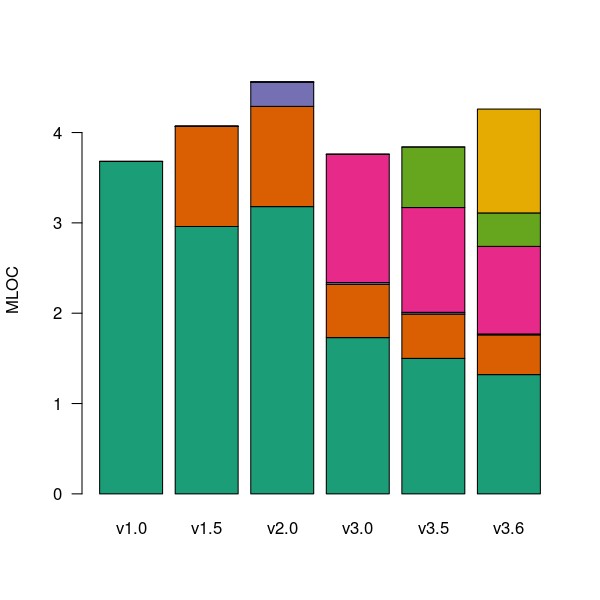

The Firefox browser has been the subject of several fault related studies. The study by Massacci, Neuhaus and Nguyen is of interest here because it provides the information needed to attempt to build a fault model that takes account of the total amount of usage that code experiences from all end-users of a program. The data used by the study applies to 899 Mozilla Firefox-related Security Advisories (MFSA, a particular kind of fault), noting the earliest and latest versions of Firefox that exhibit each fault; six major releases (i.e., versions 1.0, 1.5, 2.0, 3.0, 3.5 and 3.6) were analysed; the amount of code in each version that originated in earlier versions was measured (see plot below).

Massacci et al make their raw data available under an agreement that does not permit your author to directly distribute it to readers; the raw data for the following analysis was reverse engineered from the Massacci et al paper, or obtained from other sources.

The following analysis is an attempt to build a model of amount of Firefox code usage, by end-users, over time, i.e., number of lines of Firefox source code being executed per unit time summed over all end-users at a given moment in time. The intent is to couple this model with fault data, looking for a relationship of the form: an X% change in usage results in a Y% change in reported faults.

Figure 1. Amount of source (millions of lines) in each version, broken down by the version in which it first appears. Data from Massacci, Neuhaus and Nguyen <book Massacci_11>.

As expected, a large amount of code from previous versions appears in later versions.

Since we are interested in the relationship between end-user code usage and faults (MFSAs in this case) we are only interested in versions of Firefox that are actively maintained by Mozilla. Every version has a first official release date and an end-of-support date beyond which no faults reported against it are fixed; any usage of a version after the end-of-support date is not of interest in this analysis.

How many people are using each version of Firefox at any time?

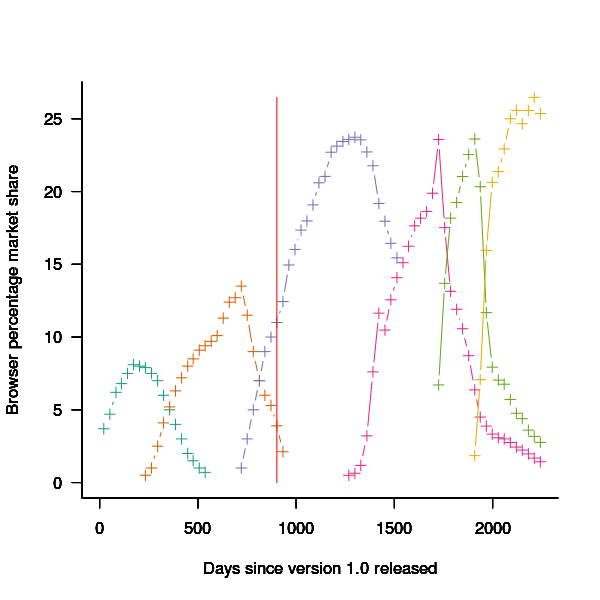

A number of websites list information on Firefox market share over time (as a percentage of all browsers measured), but only two known to your author break this information down by Firefox version. Massacci et al used url[netmarketshare.com] for Firefox version market share (data going back to November 2007), but your author found it easier to obtain information from url[www.w3schools.com] (data going back to May 2007). The W3schools data is obtained from the log of visitors to their site, which will obviously be subject to fluctuations (of unknown magnitude).

For the period November 2004 to April 2007 the market share of each Firefox version was estimated as follows:

- total Firefox market share was based on that listed by url[marketshare.hitslink.com],

- during the period when only version 1.0 was available, its market share was assumed to be the total Firefox market share,

- the market share for versions 1.5 and 2.0 was assumed to follow the trend of growth and decline seen in later releases for which data is available. Numbers were concocted that followed the version trend and summed to the known total market share.
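One possible way of producing per-version numbers that follow a trend and sum to a known total is to pick relative weights for each version and scale them. This is only a sketch of that idea, not necessarily how the numbers were actually produced; the function name, the weights and the total used in the example are all made up for illustration.

```python
def scale_to_total(trend_weights: dict[str, float],
                   total_share: float) -> dict[str, float]:
    """Scale relative per-version weights so that they sum to total_share."""
    weight_sum = sum(trend_weights.values())
    return {version: total_share * weight / weight_sum
            for version, weight in trend_weights.items()}


if __name__ == "__main__":
    # HYPOTHETICAL month: version 1.0 declining, version 1.5 growing,
    # with a made-up total Firefox market share of 12%.
    shares = scale_to_total({"1.0": 1.0, "1.5": 3.0}, total_share=12.0)
    print(shares)
```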

The plot below shows the market share of the six versions of Firefox between official release and end-of-support. Estimated values appear to the left of the vertical red line, values from measurements to the right. It can be seen that at its end-of-support date version 2.0 still had a significant market share.

Figure 2. Market share of Firefox versions between official release and end-of-support. Data from url[www.w3schools.com].

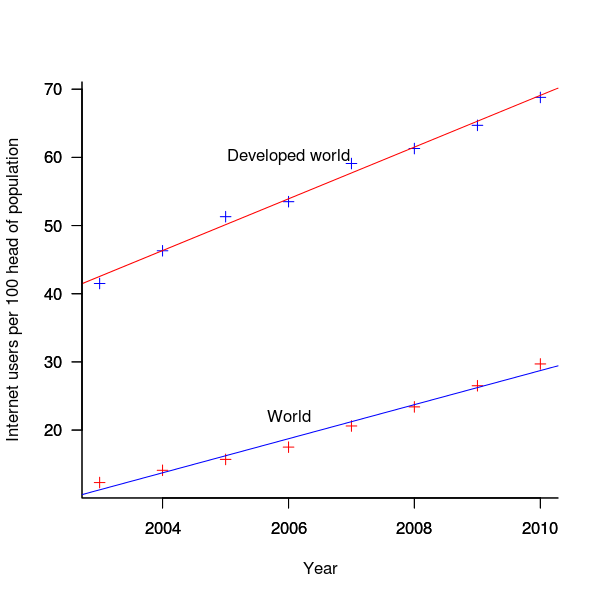

The International Telecommunications Union publishes an estimate of the number of people per 100 head of population with Internet access for each year between 2003 and 2011 <book ITU_12>; the data is broken down by developed/developing countries and also by major world regions. Assuming that everybody who uses the Internet uses a browser, this information can be combined with market share and human population data to estimate the number of Firefox users.

The ITU do not provide much information about how the usage figure is calculated or even which month of the year it applies to (since we are interested in change over time, knowing the month is not important and the start of the year is assumed). As the figure below shows, the estimate over the period of interest can be accurately modeled by a straight line. A linear model was fitted to the data to predict usage between published estimates; over the period of interest the rate of growth in the Developed world has been almost twice as great as the rate in the whole world.
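Fitting a straight line to yearly estimates and using it to predict values between them can be sketched as below, using ordinary least squares. The yearly values in the example are placeholders chosen to lie on a line; they are not ITU data, and the function names are hypothetical.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def predict(slope: float, intercept: float, x: float) -> float:
    """Value of the fitted line at x (e.g., a fractional year)."""
    return slope * x + intercept


if __name__ == "__main__":
    # PLACEHOLDER values (people with Internet access per 100 head of population).
    years = [2003.0, 2004.0, 2005.0, 2006.0]
    per_100 = [40.0, 44.0, 48.0, 52.0]
    slope, intercept = fit_line(years, per_100)
    print(f"mid-2004 estimate: {predict(slope, intercept, 2004.5):.1f} per 100")
```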

Figure 3. Number of people with Internet access per 100 head of population in the developed world and the whole world. Data from ITU <book ITU_12>.

We are interested in relative change in total user population, and this can be obtained by multiplying the per-head of population value by the change in population (a 0.8% yearly growth is assumed for the developed world).
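The calculation described above can be sketched as follows: the per-100 figure is applied to a population that grows by 0.8% per year. The base population and per-100 values in the example are placeholders, and the function name is hypothetical; since only relative change matters, the choice of base population does not affect the ratio between years.

```python
def internet_users(per_100: float, base_population: float,
                   years_since_base: float, yearly_growth: float = 0.008) -> float:
    """Estimated number of Internet users.

    per_100: people with Internet access per 100 head of population.
    base_population: population in the base year (any consistent unit).
    yearly_growth: assumed population growth rate (0.8% for the developed world).
    """
    population = base_population * (1 + yearly_growth) ** years_since_base
    return per_100 / 100 * population


if __name__ == "__main__":
    # PLACEHOLDER inputs, for illustration only.
    start = internet_users(per_100=50.0, base_population=1000.0, years_since_base=0)
    later = internet_users(per_100=60.0, base_population=1000.0, years_since_base=3)
    print(f"relative change in users: {later / start:.3f}")
```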

Possible significant factors for why this formula might not accurately reflect the probability of a MFSA being reported include:

- the characteristics of people who started using the Internet in 2004 may be different from those who first started in 2010:

- there will be variation in the amount of time people spend browsing, does the distribution of time usage differ between early and late adopters?

- some people are more likely than others to report a fault (e.g., my mum is a late adopter and extremely unlikely to report a fault, whereas I might report a fault),

- there may be significant regional differences, e.g., European users vs. Chinese users. These differences include the Internet sites visited (the behavior of Firefox will depend on the content of the web page visited) and may affect their propensity to report a problem (e.g., do the cultural stereotypes of Chinese acceptance of authority mean they are unlikely to report a fault while those noisy Americans complain about everything?)

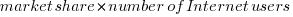

The end-user usage for code originally written for a particular version, at a point in time, is calculated as follows:

- number of lines of code originally written for a particular version that is contained within the code used to build a later version, or that particular version; call this the build version,

- times the market share of the build version,

- times the number of Internet users of the build version (users in the Developed world was used).
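The three-step calculation listed above, summed over all build versions at one point in time, can be sketched as below. The data structures, names and numbers are hypothetical, chosen only to show the shape of the calculation.

```python
def code_usage(lines_in_build: dict[str, float],
               market_share: dict[str, float],
               internet_users: float) -> float:
    """End-user usage, at one point in time, of code written for one version.

    lines_in_build: for each build version, the lines of code originally
        written for the version of interest that are present in that build.
    market_share: fraction of Internet users running each build version
        (a build that is unsupported at this time is simply omitted).
    internet_users: number of Internet users at this point in time.
    """
    return sum(lines * market_share.get(build, 0.0) * internet_users
               for build, lines in lines_in_build.items())


if __name__ == "__main__":
    # HYPOTHETICAL values: millions of lines of version 1.0 code found in
    # builds 1.0 and 1.5, and the market share of those builds.
    v1_lines = {"1.0": 2.0, "1.5": 1.0}
    share = {"1.0": 0.1, "1.5": 0.2}
    print(code_usage(v1_lines, share, internet_users=100.0))
```

Repeating the calculation for each month, with that month's market shares and user numbers, gives one point per build version plus their sum.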

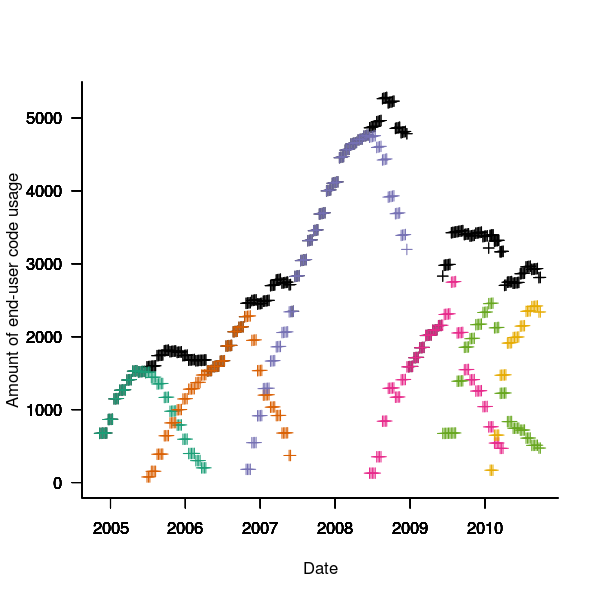

The plot below is an example using the source code originally written for Firefox version 1.0. The green points are the code usage for version 1.0 code executing in Firefox build version 1.0, the orange points the code usage for version 1.0 code executing in build version 1.5, and so on to the yellow points, which are the code usage for version 1.0 code executing in build version 3.6. The black points are the sum over all build versions.

Figure 4. Amount of end-user usage of code originally written for Firefox version 1.0 by various other versions.

Much of the overall growth comes from growth in Internet usage, and in the early years there is also substantial growth in browser market share.

An analysis that attempts to connect Firefox usage with reported MFSAs will appear shortly (it would be surprising if fault report rate scaled linearly with end-user usage).
