Algorithm complexity and implementation LOC

As computer functionality increases, it becomes easier to write programs to handle more complicated problems which require more computing resources; also, the low-hanging fruit has been picked and researchers need to move on. In some cases, the complexity of existing problems continues to increase.

The Linux kernel is an example of a solution to a problem that continues to increase in complexity, as measured by the number of lines of code.

The distribution of problem complexities will vary across application domains. Treating program size as a proxy for problem complexity is more believable when applied to one narrow application domain.

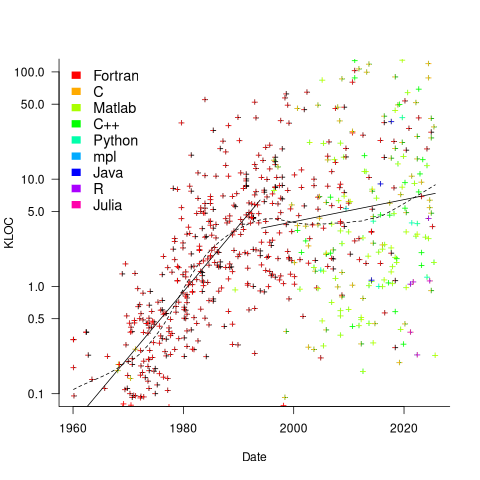

Since 1960, the journal Transactions on Mathematical Software has been making available the source code of implementations of the algorithms provided with the papers it publishes (before the early 1970s they were known as the Collected Algorithms of the ACM, and included more general algorithms). The plot below shows the number of lines of code in the source of the 893 published implementations over time, with fitted regression lines, in black, of the form  before 1994-1-1, and

before 1994-1-1, and  after that date (black dashed line is a LOESS regression model; code+data).

after that date (black dashed line is a LOESS regression model; code+data).

The two immediately obvious patterns are the sharp drop in the average rate of growth since the early 1990s (from 15% per year to 2% per year), and the dominance of Fortran until the early 2000s.

The growth in average implementation LOC might be caused by algorithms becoming more complicated, or because increasing computing resources meant that more code could be produced with the same amount of researcher effort, or another reason, or some combination. After around 2000, there is a significant increase in the variance in the size of implementations. I’m assuming that this is because some researchers focus on niche algorithms, while others continue to work on complicated algorithms.

An aim of Halstead’s early metric work was to create a measure of algorithm complexity.

If LLMs really do make researchers more productive, then in future years LOC growth rate should increase as more complicated problems are studied, or perhaps because LLMs generate more verbose code.

The table below shows the primary implementation language of the algorithm implementations:

Language Implementations

Fortran 465

C 79

Matlab 72

C++ 24

Python 7

R 4

Java 3

Julia 2

MPL 1 |

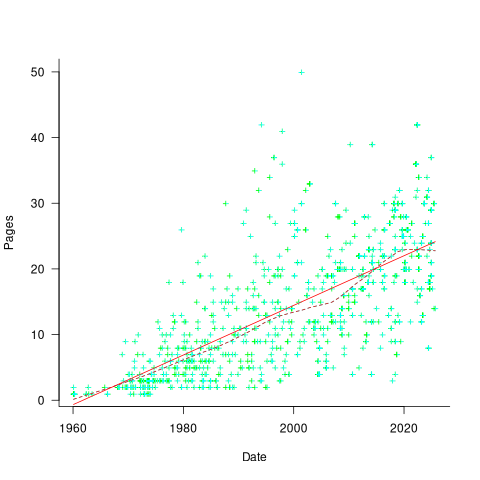

If algorithms are becoming more complicated, then the papers describing/analysing them are likely to contain more pages. The plot below shows the number of pages in the published papers over time, with fitted regression line of the form  (0.38 pages per year; red dashed line is a LOESS regression model; code+data).

(0.38 pages per year; red dashed line is a LOESS regression model; code+data).

Unlike the growth of implementation LOC, there is no break-point in the linear growth of page count. Yes, page count is influence by factors such as long papers being less likely to be accepted, and being able to omit details by citing prior research.

It would be a waste of time to suggest more patterns of behavior without looking at a larger sample papers and their implementations (I have only looked at a handful).

When the source was distributed in several formats, the original one was used. Some algorithms came with build systems that included tests, examples and tutorials. The contents of the directories: CALGO_CD, drivers, demo, tutorial, bench, test, examples, doc were not counted.

Interesting analysis. How you think the choice of language affects these results? For example, I can implement a lot more functionally is 100 lines of python than I can in 100 lines of C.

@Blaine

Good point. Your question also applies to Matlab, which combined with Python and R is around 10% of the sample. Fortran 90/95 supports many of the vector operations that simplifies the implementation of numeric code, and there are 63 files having a f90/f95 suffix. So even more cases where they may be LOC ‘shrinkage’.

I think that vector operator support in the language could have a big impact on the smaller files that focus on just the core algorithm. Much larger programs tend to have a lot of non-vector support code around the core algorithm, so I would expect the language impact to be small.

There is also the issue of programming competence. My experience of numeric code written by mathematicians is that a lot of it is, shall we say, simplistic, i.e., no fancy use of language features. A few are very sophisticated users of a language.

The wide variance of behavior after the early 1990s suggests that lots of things are going on.