Archive

Experimental Psychology by Robert S. Woodworth

I have just discovered “Experimental Psychology” by Robert S. Woodworth. It was first published in 1938; my copy is a 1951 reprint, printed in Great Britain. The Internet Archive has a copy of the 1954 revised edition; it’s a very useful pdf, but it does not have the atmospheric musty smell of an old book.

The Archives of Psychology was edited by Woodworth, and contains reports of what look like groundbreaking studies done in the 1930s.

The book is surprisingly modern, in that the topics covered are all of active interest today, in fields related to cognitive psychology. There are lots of experimental results (which always biases me towards really liking a book) and the coverage is extensive.

The history of cognitive psychology, as I understood it until this week, was: early researchers asking questions, doing introspection and sometimes running experiments in the late 1800s and early 1900s (e.g., Wundt and Ebbinghaus); behaviorism dominates the field; behaviorism is eviscerated by Chomsky in the 1960s; and cognitive psychology as we know it today takes off.

Now I know that lots of interesting and relevant experiments were being done in the 1920s and 1930s.

What is missing from this book? The most obvious omission is equations; lots of data points plotted on graph paper, but no attempt to fit an equation to anything, e.g., an exponential curve to the rate of learning.
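As an illustration of the kind of curve fitting the book never attempts, here is a minimal sketch that fits an exponential to learning data. The trial numbers and timings are made up for this example (they are not taken from Woodworth), and the function name `fit_exponential` is my own; the fit is done by ordinary least squares on the logarithm of the measurements, which is only one of several ways to do it.

```python
import math

def fit_exponential(xs, ys):
    """Fit y = a * exp(b * x) by a straight-line least-squares fit
    to the points (x, log y); every y must be positive."""
    n = len(xs)
    log_ys = [math.log(y) for y in ys]
    mean_x = sum(xs) / n
    mean_ly = sum(log_ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (ly - mean_ly) for x, ly in zip(xs, log_ys))
    b = sxy / sxx                      # slope of the line in log space
    a = math.exp(mean_ly - b * mean_x)  # intercept, converted back from logs
    return a, b

# Made-up data: seconds needed to complete a task on successive trials.
trials = [1, 2, 3, 4, 5, 6]
seconds = [60.0 * math.exp(-0.3 * t) for t in trials]

a, b = fit_exponential(trials, seconds)
print(f"time = {a:.1f} * exp({b:.2f} * trial)")
```

Fitting in log space gives relatively more weight to the small measurements than a direct nonlinear fit would, so with noisy real data the two approaches can give noticeably different parameters.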

A more subtle omission is the world view; digital computers had not been invented yet and Shannon’s information theory was a decade in the future. Researchers tend to be heavily influenced by the tools they use and the zeitgeist. Computers as calculators and information processors could not be used as the basis for models of the human mind, because they did not yet exist.

Historians of computing

Who are the historians of computing? The requirement I used for deciding who qualifies (for this post) is that the person has written multiple papers on the subject over a period that is much longer than their PhD thesis (several people have written a PhD thesis on the history of some aspect of computing, and then gone on to research other areas).

Maarten Bullynck. An academic who is a historian of mathematics and has become interested in software; use HAL to find his papers, e.g., What is an Operating System? A historical investigation (1954–1964).

Martin Campbell-Kelly. An academic who has spent his research career investigating computing history, primarily with a software orientation. Has written extensively on a wide variety of software topics. His book “From Airline Reservations to Sonic the Hedgehog: A History of the Software Industry” is still on my pile of books waiting to be read (but other historians cite it extensively). His thesis, “Foundations of computer programming in Britain, 1945-55”, can be freely downloaded from the British Library; registration required.

Paul E. Ceruzzi. An academic and museum curator; interested in aeronautics and computers. I found the one book of his that I have read, ok; others might like it more than me. Others cite him, and he wrote an interesting paper on Konrad Zuse (The Early Computers of Konrad Zuse, 1935 to 1945).

James W. Cortada. Ex-IBM (1974-2012) and now working at the Charles Babbage Institute. Has written extensively on the history of computing. More of a hardware than software orientation. Has written lots of detail-oriented books and must have pole position for most extensive collection of material to cite (his end notes are very extensive). His “The Digital Flood: The Diffusion of Information Technology Across the U.S., Europe, and Asia” is likely to be the definitive work on the subject for some time to come. For me this book is spoiled by the author toeing the company line in his analysis of the IBM antitrust trial; my analysis of the work Cortada cites reaches the opposite conclusion.

Nathan Ensmenger. An academic; more of a people person than hardware/software. His paper Letting the Computer Boys Take Over contains many interesting insights. His book The Computer Boys Take Over: Computers, Programmers, and the Politics of Technical Expertise is a combination of topics that have been figured out, backed up with references, and topics still being figured out (I wish he would not cite Datamation, a trade mag back in the day, so often).

Kenneth S. Flamm. An academic who has held senior roles in government. Writes from an industry evolution, government interests, economic perspective. The books “Targeting the Computer: Government Support and International Competition” and “Creating the Computer: Government, Industry and High Technology” are packed with industry-related economic data and cover all the major industrial countries.

Michael S. Mahoney. An academic who is sadly no longer with us. A historian of mathematics before becoming primarily involved with software history.

Jeffrey R. Yost. An academic. I have only read his book “Making IT Work: A history of the computer services industry”, which was really a collection of vignettes about people, companies and events; needs some analysis. Must try to track down some of his papers (which are not available via his web page :-( ).

Who have I missed? This list is derived from papers/books I have encountered while working on a book, not an active search for historians. Suggestions welcome.

Updates

Completely forgot Kenneth S. Flamm, despite enjoying both his computer books.

Forgot Paul E. Ceruzzi because I was unimpressed by his “A History of Modern Computing”. Perhaps his other books are better.

Compiler validation is now part of history

Compiler validation makes sense in a world where there are many different hardware platforms, each with their own independent compilers (third parties often implemented compilers for popular platforms, competing against the hardware vendor). A large organization that spends hundreds of millions on a multitude of computer systems (e.g., the U.S. government) wants to keep prices down, which means the cost of porting its software to different platforms needs to be kept down (or at least suppliers need to think it will not cost too much to switch hardware).

A crucial requirement for source code portability is that different compilers be able to compile the same source, generating code that produces the same behavior. The same behavior requirement is an issue when the underlying word-size varies or has different alignment requirements (lots of code relies on data structures following particular patterns of behavior), but management on all sides always seems to think that being able to compile the source is enough. Compiler vendors often supported extensions to the language standard, and developers got to learn they were extensions when porting to a different compiler.
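The word-size and alignment issue can be seen without a C compiler to hand. The following is a minimal sketch using Python’s standard `struct` module as a stand-in: the `@` prefix asks for the sizes and padding used by the platform’s C compiler, while `=` asks for fixed standard sizes with no padding. The record layout (one char followed by one int) is an assumption chosen for illustration.

```python
import struct

# One char followed by one int, laid out two ways.
native_size = struct.calcsize("@ci")  # platform C layout, padding included
packed_size = struct.calcsize("=ci")  # standard sizes, no padding: 1 + 4 bytes

print("native layout:", native_size, "bytes")
print("packed layout:", packed_size, "bytes")

# The size of a C long is a classic word-size difference between platforms.
print("native long:", struct.calcsize("@l"), "bytes")

# A record written using the unpadded layout...
record = struct.pack("=ci", b"A", 1)
# ...is only readable by code assuming the native layout when the two sizes agree.
print("layouts agree on this platform:", native_size == len(record))
```

Code that compiles cleanly on two platforms can still disagree about where the int lives inside the record, which is the gap between “it compiles” and “it behaves the same”.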

The U.S. government funded a conformance testing service, and paid for compiler validation suites to be written (source code for what were once the Cobol 85, Fortran 78 and SQL validation suites). While it was in business, this conformance testing service was involved in C compiler validation, but it did not have to fund any development because commercial test suites were available.

The 1990s was the mass-extinction decade for companies selling non-Intel hardware. The widespread use of Open source compilers, coupled with the disappearance of lots of different cpus (porting compilers to new vendor cpus was always a good money spinner, for the compiler writing cottage industry), meant that many compilers disappeared from the market.

These days, language portability issues have been essentially solved by a near monoculture of compilers and cpus. It’s the libraries that are the primary cause of application portability problems. There is a test suite for POSIX and Linux has its own tests.

There are companies selling C/C++ compiler test suites (e.g., Perennial and Plum Hall); when maintaining a compiler, it’s cost-effective to have a set of third-party tests designed to exercise all of the language.

The Open Group offers to test your C compiler and issue a brand certificate if it passes the tests.

Source code portability requires compilers to have the same behavior and traditionally the generally accepted behavior has been defined by an ISO Standard or how one particular implementation behaved. In an Open source world, behavior is defined by what needs to be done to run the majority of existing code. Does it matter if Open source compilers evolve in a direction that is different from the behavior specified in an ISO Standard? I think not, it makes no difference to the majority of developers; but be careful, saying this can quickly generate a major storm in a tiny teacup.

First use of: software, software engineering and source code

While reading some software related books/reports/articles written during the 1950s, I suddenly realized that the word ‘software’ was not being used. This set me off looking for the earliest use of various computer terms.

My search process consisted of using pdfgrep on my collection of pdfs of documents from the 1950s and 60s, and looking in the index of the few old computer books I still have.
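The same kind of search can be sketched in a few lines once the text has been extracted from the pdfs (an extraction step that pdfgrep performs itself, and which is assumed to have already happened here). The document titles and contents below are made-up stand-ins, and `earliest_uses` is a hypothetical helper, not part of any tool.

```python
import re

def earliest_uses(documents, terms):
    """documents: iterable of (year, title, text) tuples.
    Returns {term: (year, title)} for the earliest document in which
    each term appears as a whole word or phrase (case-insensitive)."""
    found = {}
    for year, title, text in sorted(documents):  # oldest first
        for term in terms:
            if term in found:
                continue
            pattern = r"\b" + re.escape(term) + r"\b"
            if re.search(pattern, text, re.IGNORECASE):
                found[term] = (year, title)
    return found

# Made-up stand-ins for text extracted from scanned documents.
docs = [
    (1961, "hypothetical report B", "The software and its source code were delivered late."),
    (1957, "hypothetical manual A", "Programming the machine requires careful coding."),
    (1959, "hypothetical paper C", "Statements may be included in the source code."),
]

result = earliest_uses(docs, ["software", "source code"])
for term, (year, title) in result.items():
    print(f"{term}: {year}, {title}")
```

Text extracted from scans of old documents usually contains OCR errors, so a search like this can miss genuine uses; a hit is evidence of use, but a miss is not evidence of absence.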

Software: The Oxford English Dictionary (OED) cites an article by John Tukey published in the American Mathematical Monthly during 1958 as the first published use of software: “The ‘software’ comprising … interpretive routines, compilers, and other aspects of automative programming are at least as important to the modern electronic calculator as its ‘hardware’.”

I have a copy of the second edition of “An Introduction to Automatic Computers” by Ned Chapin, published in 1963, which does a great job of defining the various kinds of software. Earlier editions were published in 1955 and 1957. Did these earlier editions also contain various definitions of software? I cannot find any reasonably priced copies on the second-hand book market. Do any readers have a copy?

Update: I now have a copy of the 1957 edition of Chapin’s book. It discusses programming, but there is no mention of software.

Software engineering: The OED cites a 1966 “letter to the ACM membership” by Anthony A. Oettinger, then ACM President: “We must recognize ourselves … as members of an engineering profession, be it hardware engineering or software engineering.”

The June 1965 issue of COMPUTERS and AUTOMATION, in its Roster of organizations in the computer field, has the list of services offered by Abacus Information Management Co.: “systems software engineering”, and by Halbrecht Associates, Inc.: “software engineering”. This pushes the first use of software engineering back by a year.

Source code: The OED cites a 1965 issue of Communications of the ACM: “The PUFFT source language listing provides a cross-reference between the source code and the object code.”

The December 1959 Proceedings of the EASTERN JOINT COMPUTER CONFERENCE contains the article: “SIMCOM – The Simulator Compiler” by Thomas G. Sanborn. On page 140 we have: “The compiler uses this convention to aid in distinguishing between SIMCOM statements and SCAT instructions which may be included in the source code.”

Update: The October 1956 issue of Computers and Automation contains an extensive glossary. It does not have any entries for software or source code.

Running pdfgrep over the archive of documents on bitsavers would probably throw up all manner of early uses of software related terms.

The paper “What’s in a name? Origins, transpositions and transformations of the triptych Algorithm - Code - Program” is a detailed survey of the use of three software terms.

A 1931 article uses the term “Super Computing Machine” to refer to a punched card machine that could do arithmetic on numeric values contained on the card.

Computer books your great grandfather might have read

I have been reading two very different computer books written for a general readership: Giant Brains or Machines that Think, published in 1949 (with a retrospective chapter added in 1961) and LET ERMA DO IT, published in 1956.

‘Giant Brains’, by Edmund Berkeley, was very popular in its day.

Berkeley marvels at a computer performing 5,000 additions per second; performing all the calculations in a week that previously required 500 human computers (i.e., people using mechanical adding machines) working 40 hours per week. His mind staggers at the “calculating circuits being developed” that can perform 100,00 additions a second; “A mechanical brain that can do 10,000 additions a second can very easily finish almost all its work at once.”

The chapter discussing the future, “Machines that think, and what they might do for men”, sees Berkeley struggling for non-mathematical applications; a common problem with all new inventions. An automatic translator and an automatic stenographer (a typist who transcribes dictation) are listed. There is also a chapter on social control, which is just as applicable today.

This was the first widely read book to promote Shannon’s idea of using the algebra invented by George Boole to analyze switching circuits symbolically (the 1940 Master’s thesis).

The ‘ERMA’ book paints a very rosy picture of the future with computer automation removing the drudgery that so many jobs require; it is so upbeat. A year later the USSR launched Sputnik and things suddenly looked a lot less rosy.

Added two more books

Cybernetics Or Communication And Control In The Animal And The Machine by Norbert Wiener

The Organization Of Behavior A Neuropsychological Theory by D. O. Hebb

and another

The Preparation of Programs for an Electronic Digital Computer by Wilkes, Wheeler, Gill (the link is to the 1957 second edition, not the 1951 first edition)

The first compiler was implemented in itself

I have been reading about the world’s first actual compiler (i.e., not a paper exercise), described in Corrado Böhm’s PhD thesis (French version from 1954, an English translation by Peter Sestoft). The thesis, submitted in 1951 to the Federal Technical University in Zurich, takes some untangling; when you are inventing a new field, ideas tend to be expressed using existing concepts and terminology, e.g., computer peripherals are called organs and registers are denoted by a symbol of their own.

Böhm had worked with Konrad Zuse and must have known about his language, Plankalkül. The language also has an APL feel to it (but without the vector operations).

Böhm’s language does not have a name; his thesis is really about translating mathematical expressions to machine code. The expressions are organised by what we today call basic blocks (Böhm calls them groups). The compiler for the unnamed language (along with a loader) is written in itself; a Java implementation is being worked on.

Böhm’s work is discussed in Donald Knuth’s early development of programming languages, but there is nothing like reading the actual work (if only in translation) to get a feel for it.

Update (3 days later): Correspondence with Donald Knuth.

Update (3 days later): A January 1949 memo from Haskell Curry (he of Curry fame and more recently of Haskell association) also uses the term organ. Might we claim, based on two observations on different continents, that it was in general use?

Evidence for 28 possible compilers in 1957

In two earlier posts I discussed the early compilers for languages that are still widely used today and a report from 1963 showing how nothing has changed in programming languages.

The Handbook of Automation, Computation, and Control Volume 2, published in 1959, contains some interesting information. In particular Table 23 (below) is a list of “Automatic Coding Systems” (containing over 110 systems from 1957, of which 54 have a cross in the compiler column):

Columns: Computer, System Name or Acronym, Developed by, Code, M.L., Assem., Inter., Comp., Oper. Date, Indexing, Fl-Pt, Symb., Algeb. (the computer name appears only on the first row of each group).

IBM 704 AFAC Allison G.M. C X Sep 57 M2 M 2 X

CAGE General Electric X X Nov 55 M2 M 2

FORC Redstone Arsenal X Jun 57 M2 M 2 X

FORTRAN IBM R X Jan 57 M2 M 2 X

NYAP IBM X Jan 56 M2 M 2

PACT IA Pact Group X Jan 57 M2 M 1

REG-SYMBOLIC Los Alamos X Nov 55 M2 M 1

SAP United Aircraft R X Apr 56 M2 M 2

NYDPP Servo Bur. Corp. X Sep 57 M2 M 2

KOMPILER 3 UCRL Livermore X Mar 58 M2 M 2 X

IBM 701 ACOM Allison G.M. C X Dec 54 S1 S 0

BACAIC Boeing Seattle A X X Jul 55 S 1 X

BAP UC Berkeley X X May 57 2

DOUGLAS Douglas SM X May 53 S 1

DUAL Los Alamos X X Mar 53 S 1

607 Los Alamos X Sep 53 1

FLOP Lockheed Calif. X X X Mar 53 S 1

JCS 13 Rand Corp. X Dec 53 1

KOMPILER 2 UCRL Livermore X Oct 55 S2 1 X

NAA ASSEMBLY N. Am. Aviation X

PACT I Pact Group R X Jun 55 S2 1

QUEASY NOTS Inyokern X Jan 55 S

QUICK Douglas ES X Jun 53 S 0

SHACO Los Alamos X Apr 53 S 1

SO 2 IBM X Apr 53 1

SPEEDCODING IBM R X X Apr 53 S1 S 1

IBM 705-1, 2 ACOM Allison G.M. C X Apr 57 S1 0

AUTOCODER IBM R X X X Dec 56 S 2

ELI Equitable Life C X May 57 S1 0

FAIR Eastman Kodak X Jan 57 S 0

PRINT I IBM R X X X Oct 56 S2 S 2

SYMB. ASSEM. IBM X Jan 56 S 1

SOHIO Std. Oil of Ohio X X X May 56 S1 S 1

FORTRAN IBM-Guide A X Nov 58 S2 S 2 X

IT Std. Oil of Ohio C X S2 S 1 X

AFAC Allison G.M. C X S2 S 2 X

IBM 705-3 FORTRAN IBM-Guide A X Dec 58 M2 M 2 X

AUTOCODER IBM A X X Sep 58 S 2

IBM 702 AUTOCODER IBM X X X Apr 55 S 1

ASSEMBLY IBM X Jun 54 1

SCRIPT G. E. Hanford R X X X X Jul 55 S1 S 1

IBM 709 FORTRAN IBM A X Jan 59 M2 M 2 X

SCAT IBM-Share R X X Nov 58 M2 M 2

IBM 650 ADES II Naval Ord. Lab X Feb 56 S2 S 1 X

BACAIC Boeing Seattle C X X X Aug 56 S 1 X

BALITAC M.I.T. X X X Jan 56 S1 2

BELL L1 Bell Tel. Labs X X Aug 55 S1 S 0

BELL L2,L3 Bell Tel. Labs X X Sep 55 S1 S 0

DRUCO I IBM X Sep 54 S 0

EASE II Allison G.M. X X Sep 56 S2 S 2

ELI Equitable Life C X May 57 S1 0

ESCAPE Curtiss-Wright X X X Jan 57 S1 S 2

FLAIR Lockheed MSD, Ga. X X Feb 55 S1 S 0

FOR TRANSIT IBM-Carnegie Tech. A X Oct 57 S2 S 2 X

IT Carnegie Tech. C X Feb 57 S2 S 1 X

MITILAC M.I.T. X X Jul 55 S1 S 2

OMNICODE G. E. Hanford X X Dec 56 S1 S 2

RELATIVE Allison G.M. X Aug 55 S1 S 1

SIR IBM X May 56 S 2

SOAP I IBM X Nov 55 2

SOAP II IBM R X Nov 56 M M 2

SPEED CODING Redstone Arsenal X X Sep 55 S1 S 0

SPUR Boeing Wichita X X X Aug 56 M S 1

FORTRAN (650T) IBM A X Jan 59 M2 M 2

Sperry Rand 1103A COMPILER I Boeing Seattle X X May 57 S 1 X

FAP Lockheed MSD X X Oct 56 S1 S 0

MISHAP Lockheed MSD X Oct 56 M1 S 1

RAWOOP-SNAP Ramo-Wooldridge X X Jun 57 M1 M 1

TRANS-USE Holloman A.F.B. X Nov 56 M1 S 2

USE Ramo-Wooldridge R X X Feb 57 M1 M 2

IT Carn. Tech.-R-W C X Dec 57 S2 S 1 X

UNICODE R Rand St. Paul R X Jan 59 S2 M 2 X

Sperry Rand 1103 CHIP Wright A.D.C. X X Feb 56 S1 S 0

FLIP/SPUR Convair San Diego X X Jun 55 S1 S 0

RAWOOP Ramo-Wooldridge R X Mar 55 S1 1

SNAP Ramo-Wooldridge R X X Aug 55 S1 S 1

Sperry Rand Univac I and II A0 Remington Rand X X X May 52 S1 S 1

A1 Remington Rand X X X Jan 53 S1 S 1

A2 Remington Rand X X X Aug 53 S1 S 1

A3,ARITHMATIC Remington Rand C X X X Apr 56 S1 S 1

AT3,MATHMATIC Remington Rand C X X Jun 56 S1 S 2 X

B0,FLOWMATIC Remington Rand A X X X Dec 56 S2 S 2

BIOR Remington Rand X X X Apr 55 1

GP Remington Rand R X X X Jan 57 S2 S 1

MJS (UNIVAC I) UCRL Livermore X X Jun 56 1

NYU,OMNIFAX New York Univ. X Feb 54 S 1

RELCODE Remington Rand X X Apr 56 1

SHORT CODE Remington Rand X X Feb 51 S 1

X-I Remington Rand C X X Jan 56 1

IT Case Institute C X S2 S 1 X

MATRIX MATH Franklin Inst. X Jan 58

Sperry Rand File Compo ABC R Rand St. Paul Jun 58

Sperry Rand Larc K5 UCRL Livermore X X M2 M 2 X

SAIL UCRL Livermore X M2 M 2

Burroughs Datatron 201, 205 DATACODE I Burroughs X Aug 57 MS1 S 1

DUMBO Babcock and Wilcox X X

IT Purdue Univ. A X Jul 57 S2 S 1 X

SAC Electrodata X X Aug 56 M 1

UGLIAC United Gas Corp. X Dec 56 S 0

Dow Chemical X

STAR Electrodata X

Burroughs UDEC III UDECIN-I Burroughs X 57 M/S S 1

UDECOM-3 Burroughs X 57 M S 1

M.I.T. Whirlwind ALGEBRAIC M.I.T. R X S2 S 1 X

COMPREHENSIVE M.I.T. X X X Nov 52 S1 S 1

SUMMER SESSION M.I.T. X Jun 53 S1 S 1

Midac EASIAC Univ. of Michigan X X Aug 54 S1 S

MAGIC Univ. of Michigan X X X Jan 54 S1 S

Datamatic ABC I Datamatic Corp. X

Ferranti TRANSCODE Univ. of Toronto R X X X Aug 54 M1 S

Illiac DEC INPUT Univ. of Illinois R X Sep 52 S1 S

Johnniac EASY FOX Rand Corp. R X Oct 55 S

Norc NORC COMPILER Naval Ord. Lab X X Aug 55 M2 M

Seac BASE 00 Natl. Bur. Stds. X X

UNIV. CODE Moore School X Apr 55

Chart Symbols used:

Code: R = Recommended for this computer, sometimes only for heavy usage. C = Common language for more than one computer. A = System is both recommended and has common language.

Indexing: M = Actual index registers or B boxes in machine hardware. S = Index registers simulated in synthetic language of system. 1 = Limited form of indexing, either stepped unidirectionally or by one word only, or having certain registers applicable to only certain variables, or not compound (by combination of contents of registers). 2 = General form, any variable may be indexed by any one or combination of registers which may be freely incremented or decremented by any amount.

Floating point: M = Inherent in machine hardware. S = Simulated in language.

Symbolism: 0 = None. 1 = Limited, either regional, relative or exactly computable. 2 = Fully descriptive English word or symbol combination which is descriptive of the variable or the assigned storage.

Algebraic: A single continuous algebraic formula statement may be made. Processor has mechanisms for applying associative and commutative laws to form operative program.

M.L. = Machine language. Assem. = Assemblers. Inter. = Interpreters. Comp. = Compilers.

Are the compilers really compilers as we know them today, or is this terminology that has not yet settled down? The computer terminology chapter refers readers interested in Assembler, Compiler and Interpreter to the entry for Routine:

“Routine. A set of instructions arranged in proper sequence to cause a computer to perform a desired operation or series of operations, such as the solution of a mathematical problem.

…

Compiler (compiling routine), an executive routine which, before the desired computation is started, translates a program expressed in pseudo-code into machine code (or into another pseudo-code for further translation by an interpreter).

…

Assemble, to integrate the subroutines (supplied, selected, or generated) into the main routine, i.e., to adapt, to specialize to the task at hand by means of preset parameters; to orient, to change relative and symbolic addresses to absolute form; to incorporate, to place in storage.

…

Interpreter (interpretive routine), an executive routine which, as the computation progresses, translates a stored program expressed in some machine-like pseudo-code into machine code and performs the indicated operations, by means of subroutines, as they are translated. …”

The definition of “Assemble” sounds more like a link-loader than an assembler.

When the coding system has a cross in both the assembler and compiler column, I suspect we are dealing with what would be called an assembler today. There are 28 crosses in the Compiler column that do not have a corresponding entry in the assembler column; does this mean there were 28 compilers in existence in 1957? I can imagine many of the languages being very simple (the fashionability of creating programming languages was already being called out in 1963), so producing a compiler for them would be feasible.
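The counting rule used above (a cross in the compiler column and no cross in the assembler column) can be written down as a small filter. The rows in this sketch are invented placeholders, not entries transcribed from Table 23, and the field names are my own.

```python
from collections import namedtuple

System = namedtuple("System", "name assembler interpreter compiler")

# Made-up rows for illustration; these are NOT entries from Table 23.
systems = [
    System("EXAMPLE-A", assembler=True,  interpreter=False, compiler=True),
    System("EXAMPLE-B", assembler=False, interpreter=False, compiler=True),
    System("EXAMPLE-C", assembler=False, interpreter=True,  compiler=False),
    System("EXAMPLE-D", assembler=False, interpreter=True,  compiler=True),
]

# Every system with a cross in the compiler column.
compiler_crosses = [s for s in systems if s.compiler]

# Those without a cross in the assembler column as well:
# the candidates for being compilers in today's sense.
compiler_only = [s for s in compiler_crosses if not s.assembler]

print(len(compiler_crosses), "systems marked as compilers")
print(len(compiler_only), "of them are not also marked as assemblers")
```

Applying the same filter to a careful transcription of the table would be one way to check the counts quoted in this post.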

The citation given for Table 23 contains a few typos. I think the correct reference is: Bemer, Robert W. “The Status of Automatic Programming for Scientific Problems.” Proceedings of the Fourth Annual Computer Applications Symposium, 107-117. Armour Research Foundation, Illinois Institute of Technology, Oct. 24-25, 1957.

Programming Languages: nothing changes

While rummaging around today I came across: Programming Languages and Standardization in Command and Control by J. P. Haverty and R. L. Patrick.

Much of this report could have been written in 2013, but it was actually written fifty years earlier; the date is given away by “… the effort to develop programming languages to increase programmer productivity is barely eight years old.”

“Much of the sound and fury over programming languages is the result of zealous proponents touting them as the solution to the ‘programming problem.’”

I don’t think any major new sources of sound and fury have come to the fore.

“… the designing of programming languages became fashionable.”

Has it ever gone out of fashion?

“Now the proliferation of languages increased rapidly as almost every user who developed a minor variant on one of the early languages rushed into publication, with the resultant sharp increase in acronyms. In addition, some languages were designed practically in vacuo. They did not grow out of the needs of a particular user, but were designed as someone’s ‘best guess’ as to what the user needed (in some cases they appeared to be designed for the sake of designing).”

My post on this subject was written 49 years later.

“…a computer user, who has invested a million dollars in programming, is shocked to find himself almost trapped to stay with the same computer or transistorized computer of the same logical design as his old one because his problem has been written in the language of that computer, then patched and repatched, while his personnel has changed in such a way that nobody on his staff can say precisely what the data processing job is that his machine is now doing with sufficient clarity to make it easy to rewrite the application in the language of another machine.”

Vendor lock-in has always been good for business.

“Perhaps the most flagrantly overstated claim made for POLs is that they result in better understanding of the programming operation by higher-level management.”

I don’t think I have ever heard this claim made. But then my programming experience started a bit later than this report.

“… many applications do not require the services of an expert programmer.”

Ssshh! Such talk is bad for business.

“The cost of producing a modern compiler, checking it out, documenting it, and seeing it through initial field use, easily exceeds $500,000.”

For ‘big-company’ written compilers this number has not changed much over the years. Of course man+dog written compilers are a lot cheaper and new companies looking to aggressively enter the market can spend an order of magnitude more.

“In a young rapidly growing field major advances come so quickly or are so obvious that instituting a measurement program is probably a waste of time.”

True.

“At some point, however, as a field matures, the costs of a major advance become significant.”

Hopefully this point in time has now arrived.

“Language design is still as much an art as it is a science. The evaluation of programming languages is therefore much akin to art criticism–and as questionable.”

Calling such a vanity driven activity an ‘art’ only helps glorify it.

“Programming languages represent an attack on the ‘programming problem,’ but only on a portion of it–and not a very substantial portion.”

In fact, it is probably very insubstantial.

“Much of the ‘programming problem’ centers on the lack of well-trained experienced people–a lack not overcome by the use of a POL.”

Nothing changes.

The following table is for those of you complaining about how long it takes to compile code these days. I once had to compile some Coral 66 on an Elliott 903; the compiler resided in 5(?) boxes of paper tape, one box per compiler pass. Compilation involved: reading the paper tape containing the first pass into the computer, running this program and then reading the paper tape containing the program source, then reading the second paper tape containing the next compiler pass (there was not enough memory to store all compiler passes at once), which processed the in-memory output of the first pass; this process was repeated for each successive pass, producing a paper tape output of the compiled code. I suspect that compiling on the machines listed gave the programmers involved plenty of exercise and practice splicing snapped paper-tape.

| Computer | Cobol statements | Machine instructions | Compile time (mins) |
|---|---|---|---|
| UNIVAC II | 630 | 1,950 | 240 |
| RCA 501 | 410 | 2,132 | 72 |
| GE 225 | 328 | 4,300 | 16 |
| IBM 1410 | 174 | 968 | 46 |
| NCR 304 | 453 | 804 | 40 |
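To put the compile times in perspective, the table can be turned into a compilation rate. This is a rough sketch using only the figures in the table; the statement counts differ from machine to machine, so the programs compiled were presumably not identical and the rates should only be read as order-of-magnitude indications.

```python
# (computer, Cobol statements, machine instructions, compile time in minutes)
table = [
    ("UNIVAC II", 630, 1950, 240),
    ("RCA 501", 410, 2132, 72),
    ("GE 225", 328, 4300, 16),
    ("IBM 1410", 174, 968, 46),
    ("NCR 304", 453, 804, 40),
]

# Cobol statements compiled per minute on each machine.
rates = {name: statements / minutes for name, statements, _, minutes in table}

# Print from slowest to fastest.
for name, rate in sorted(rates.items(), key=lambda item: item[1]):
    print(f"{name:10s} {rate:5.1f} Cobol statements per minute")
```

The slowest machine in the table managed under three statements a minute, i.e., a four hour wait for a 630 statement program.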

Array bound checking in C: the early products

Tools to support array bound checking in C have been around for almost as long as the language itself.

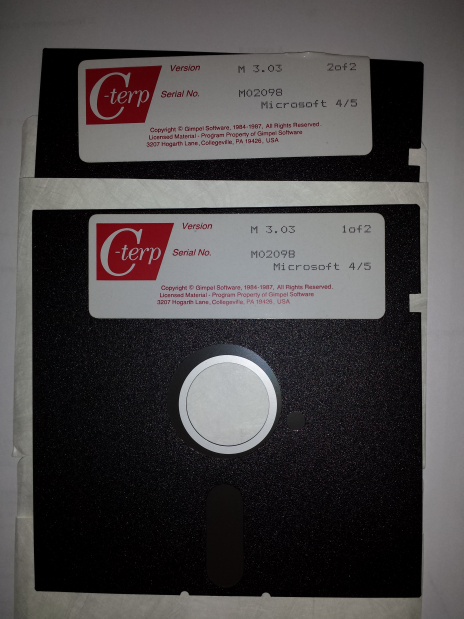

I recently came across product disks for C-terp; at 360k per 5 1/4 inch floppy, that is a C compiler, library and interpreter shipped on 720k of storage (the 3.5 inch floppies with 720k and then 1.44M came along later; Microsoft 4/5 is the version of MS-DOS supported). This was Gimpel Software’s first product in 1984.

The Model Implementation C checker work was done in the late 1980s and validated in 1991.

Purify from Pure Software was a well-known (at the time) checking tool in the Unix world, first available in 1991. There were a few other vendors producing tools on the back of Purify’s success.

Richard Jones (no relation) added bounds checking to gcc for his PhD. This work was done in 1995.

As a fan of bounds checking (finding bugs early saves lots of time) I was always on the lookout for C checking tools. I would be interested to hear about any bounds checking tools that predated Gimpel’s C-terp; as far as I know it was the first such commercially available tool.

Early compiler history

Who wrote the early compilers, when did they do it, and what were the languages compiled?

Answering these questions requires defining what we mean by ‘compiler’ and deciding what counts as a language.

Donald Knuth does a masterful job of covering the early development of programming languages. People were writing programs in high level languages well before the 1950s, and Konrad Zuse is the forgotten pioneer from the 1940s (it did not help that Germany had just lost a war and people were not inclined to give Germans much public credit).

What is the distinction between a compiler and an assembler? Some early ‘high-level’ languages look distinctly low-level from our much later perspective; some assemblers support fancy subroutine and macro facilities that give them a high-level feel.

Glennie’s Autocode is from 1952 and depending on where the compiler/assembler line is drawn might be considered a contender for the first compiler. The team led by Grace Hopper produced A-0, a fancy link-loader, in 1952; they called it a compiler, but today we would not consider it to be one.

Talking of Grace Hopper, from a biography of her technical contributions she sounds like a person who could be technical but chose to be management.

Fortran and Cobol hog the limelight of early compiler history.

Work on the first Fortran compiler started in the summer of 1954 and was completed 2.5 years later, say the beginning of 1957. A very solid claim to being the first compiler (assuming Autocode and A-0 are not considered to be compilers).

Compilers were created for Algol 58 in 1958 (a long dead language implemented in Germany with the war still fresh in people’s minds; not a recipe for wide publicity) and 1960 (perhaps the first one-man-band implemented compiler; written by Knuth, an American, but still a long dead language). The 1958 implementation sounds like it has a good claim to being the second compiler after the Fortran compiler.

In December 1960 there were at least two Cobol compilers in existence (one of which was created by a team containing Grace Hopper in a management capacity). I think the glory attached to early Cobol compiler work is the result of having a female lead involved in the development team of one of these compilers. Why isn’t the other Cobol compiler ever mentioned? Why is the Algol 58 compiler implementation that occurred before the Cobol compiler never mentioned?

What were the early books on compiler writing?

“Algol 60 Implementation” by Russell and Randell (from 1964) still appears surprisingly modern.

Principles of Compiler Design, the “Dragon book”, came out in 1977 and was about the only easily obtainable compiler book for many years. The first half dealt with the theory of parser generators (the audience was CS undergraduates and parser generation used to be a very trendy topic with umpteen PhD theses written about it); the book morphed into Compilers: Principles, Techniques, and Tools. Old-timers will reminisce about how their copy of the book has a green dragon on the front, rather than the red dragon on later, trendier (with less parser theory and more code generation) editions.
