
Investigating an LLM generated C compiler

Spending over $20,000 on API calls, a team at Anthropic plus an LLM (Claude Opus version 4.6) wrote a C compiler capable of compiling the Linux kernel and other programs to a variety of cpus. Has Anthropic commercialised monkeys typing on keyboards, or have they created an effective sheep herder?

First of all, does this compiler handle a non-trivial amount of the C language?

Having written a variety of industrial compiler front ends, optimizers, code generators and code analysers (which paid off the mortgage on my house), along with a book that analysed the C Standard, sentence by sentence (download the pdf), I’m used to finding my way around compilers.

Claude’s C compiler source appears to be surprisingly well written/organised (based on a few hours reading of the code). My immediate thought was that this must be regurgitation of pieces from existing compilers. Searches for a selection of comments in the source failed to find any matches. Stylistically, the code is written by an entity that totally believes in using abstractions; functions call functions that call functions, that call …, eventually arriving at a leaf function that just assigns a value. Not at all like a human C compiler writer (well, apart from this one).

There are some oddities in an implementation of this (small) size. For instance, constant folding includes support for floating-point literals. Use of floating-point is uncommon, and opportunities to fold literals rare. Perhaps this support was included because, well, an LLM did the work. But it increases the amount of code that can be incorrect, for little benefit. When writing a compiler in an implementation language different from the one being compiled, differences between the two languages can have an impact. For instance, Claude C uses Rust’s 128-bit integer type during constant folding, despite this and most other C compilers only supporting at most 64-bit integer types.
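To make the width issue concrete, here is a minimal sketch of folding an unsigned addition in a host type that is wider than the C type. Python's unbounded integers stand in for a wide host type such as Rust's 128-bit integers, and the helper names are hypothetical. It illustrates the kind of care needed, and says nothing about how Claude C actually handles this case.

```python
# Hypothetical sketch: folding C constant expressions using a host integer
# type that is wider than the C type being folded.

def wrap_unsigned(value, bits=64):
    """Reduce a host-precision value modulo 2**bits (C unsigned arithmetic)."""
    return value & ((1 << bits) - 1)


def fold_unsigned_add(lhs, rhs, bits=64):
    """Fold lhs + rhs for a C unsigned type that is `bits` wide."""
    wide_sum = lhs + rhs  # cannot overflow in the wider host type
    return wrap_unsigned(wide_sum, bits)


UINT64_MAX = (1 << 64) - 1

# In C, adding 1 to the largest 64-bit unsigned value wraps around to 0.
folded = fold_unsigned_add(UINT64_MAX, 1)

# The wide host type holds 2**64 before wrapping; using this value directly
# would give a result that no 64-bit C type can represent.
unwrapped = UINT64_MAX + 1

print(folded, unwrapped)
```

The wider type makes the intermediate arithmetic easy, but every folded result then has to be cut back to the width of the C type involved.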

A README appears in each of the 32 source directories, giving a detailed overview of the design and implementation of the activities performed by the code. The average length is 560 lines. These READMEs look like edited versions of the prompts used.

To get a sense of how the compiler handled rarely used language features and corner cases, I fed it examples from my book (code). The Complex floating point type is supported, along with Universal Character Names, fiddly scoping rules, and preprocessor oddities. This compiler is certainly non-trivial.

The compiler’s major blind spot is failing to detect many semantic constraints, e.g., performing arithmetic on variables having a struct type, or multiple declarations of functions and variables with the same name in the same scope (the parser README says “No type checking during parsing”; no type checking would be more accurate). The training data is source code that compiles to machine code, i.e., does not contain any semantic errors that a compiler is required to flag. It’s not surprising that Claude C fails to detect many semantic errors. There is a freely available collection of tests for the 80 constraint clauses in the C Standard that can be integrated into the Claude C compiler test suite, including the prompts used to generate the tests.

A compiler is an information conveyor belt. Source is first split into tokens (based on language specific rules), which are parsed to build a tree representation and a symbol table. The tree is then converted to SSA form, so that a sequence of established algorithms can be used to lower the level of abstraction and detect common optimization patterns. Finally, the low-level representation is mapped to machine code, and written to a file in the format for an executable program.
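The first few stations on the conveyor belt can be sketched in a few dozen lines. This toy handles only arithmetic expressions; every function name is made up for illustration, and the output is simple three-address code (each temporary assigned once) rather than real SSA construction. It is not based on the Claude C source.

```python
import re

# One token per match: a number, an identifier, or any other single character.
TOKEN_RE = re.compile(r"\s*(?:(\d+)|([A-Za-z_]\w*)|(.))")


def tokenize(source):
    """Split source text into (kind, value) tokens."""
    tokens = []
    for number, name, punct in TOKEN_RE.findall(source):
        if number:
            tokens.append(("num", int(number)))
        elif name:
            tokens.append(("id", name))
        elif punct.strip():  # ignore stray whitespace
            tokens.append(("op", punct))
    return tokens


def parse(tokens):
    """Recursive descent parser; returns a tree of nested tuples."""
    pos = 0

    def peek():
        return tokens[pos] if pos < len(tokens) else ("eof", None)

    def advance():
        nonlocal pos
        tok = peek()
        pos += 1
        return tok

    def factor():
        kind, value = advance()
        if kind in ("num", "id"):
            return (kind, value)
        if (kind, value) == ("op", "("):
            node = expr()
            if advance() != ("op", ")"):
                raise SyntaxError("expected ')'")
            return node
        raise SyntaxError(f"unexpected token {value!r}")

    def binary(operand, operators):
        # Left-associative chain: operand (operator operand)*
        node = operand()
        while peek()[0] == "op" and peek()[1] in operators:
            op = advance()[1]
            node = (op, node, operand())
        return node

    def term():
        return binary(factor, "*/")

    def expr():
        return binary(term, "+-")

    tree = expr()
    if peek()[0] != "eof":
        raise SyntaxError("trailing tokens")
    return tree


def lower(tree):
    """Flatten the tree into (dest, op, left, right) instructions."""
    code = []

    def emit(node):
        if len(node) == 2:  # leaf: ("num", n) or ("id", name)
            return node[1]
        op, left, right = node
        left_name, right_name = emit(left), emit(right)
        temp = f"t{len(code) + 1}"
        code.append((temp, op, left_name, right_name))
        return temp

    return code, emit(tree)


code, result = lower(parse(tokenize("a + b * 2")))
for instruction in code:
    print(instruction)
```

Each function consumes only what the previous one produced, which is what makes it plausible to specify, generate and test the phases separately.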

The prompts used to orchestrate the information processing conveyor belt have not been released. I’m guessing that the human team prompted the LLM with a detailed specification of the interfaces between each phase of the compiler.

The compiler is implemented in Rust, the currently fashionable language, and the obvious choice for its PR value. The 106K of source is spread across 351 files (average 531 LOC), and built in 17.5 seconds on my system.

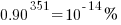

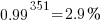

LLMs make mistakes, with coding benchmark success rates being at best around 90%. Based on these numbers, the likelihood of 351 files being correctly generated, at the same time, is  (with 99% probability of correctness we get

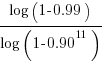

(with 99% probability of correctness we get  ). Splitting the compiler into, say, 32 phases each in a directory containing 11 files, and generating and testing each phase independently significantly increases the probability of success (or alternatively, significantly reduces the number of repetitions of the generate code and test process). The success probability of each phase is:

). Splitting the compiler into, say, 32 phases each in a directory containing 11 files, and generating and testing each phase independently significantly increases the probability of success (or alternatively, significantly reduces the number of repetitions of the generate code and test process). The success probability of each phase is:  , and if the same phase is generated 13 times, i.e.,

, and if the same phase is generated 13 times, i.e.,  , there is a 99% probability that at least one of them is correct.

, there is a 99% probability that at least one of them is correct.
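The arithmetic is easy to check. The sketch below assumes, as the argument above does, that every file succeeds or fails independently with the same probability; the function names are mine.

```python
def all_correct(p_file, n_files):
    """Probability that n_files independently generated files are all correct."""
    return p_file ** n_files


def at_least_one_correct(p_attempt, attempts):
    """Probability that at least one of several independent attempts succeeds."""
    return 1 - (1 - p_attempt) ** attempts


whole_at_90 = all_correct(0.90, 351)  # all 351 files in one go, 90% per file
whole_at_99 = all_correct(0.99, 351)  # the same with 99% per file
one_phase = all_correct(0.90, 11)     # one phase of 11 files
phase_13_tries = at_least_one_correct(one_phase, 13)

print(f"whole compiler, 90% per file: {whole_at_90:.2g}")
print(f"whole compiler, 99% per file: {whole_at_99:.2g}")
print(f"one 11-file phase:            {one_phase:.2f}")
print(f"13 attempts at one phase:     {phase_13_tries:.3f}")
```

Under these assumptions, 13 is the smallest number of attempts that pushes a single phase past 99%; 12 attempts falls just short.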

Some code need not do anything other than pass on the flow of information unchanged. For instance, code to perform the optimization common subexpression elimination does exist, but the optimization is not performed (based on looking at the machine code generated for a few tests; see codegen.c). Detecting non-functional code could require more prompting skill than generating the code. The prompt to implement this optimization (e.g., write Rust code to perform value numbering) is very different from the prompt to write code containing common subexpressions, compile to machine code and check that the optimization is performed.
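The two tasks look quite different in code. Below is a sketch of textbook local value numbering over a single basic block of three-address instructions, followed by the kind of check that actually detects whether the optimization happened. The instruction format and all names are assumptions made for this example; this is not how Claude C implements or tests the optimization.

```python
from itertools import count

COMMUTATIVE = {"+", "*"}


def local_value_numbering(block):
    """Replace recomputed expressions in a basic block by copies.

    block is a list of (dest, op, left, right) instructions.
    """
    fresh = count()
    value_of = {}   # variable or constant -> value number
    available = {}  # (op, vn, vn) -> (value number, variable holding it)
    out = []

    def number(operand):
        if operand not in value_of:
            value_of[operand] = next(fresh)
        return value_of[operand]

    for dest, op, left, right in block:
        args = [number(left), number(right)]
        if op in COMMUTATIVE:
            args.sort()  # a + b and b + a get the same key
        key = (op, *args)
        hit = available.get(key)
        # Reuse only if the holding variable has not been overwritten since.
        if hit is not None and value_of.get(hit[1]) == hit[0]:
            vn, holder = hit
            out.append((dest, "copy", holder, None))
        else:
            vn = next(fresh)
            available[key] = (vn, dest)
            out.append((dest, op, left, right))
        value_of[dest] = vn
    return out


def count_ops(block, op):
    """The check: how many instructions with this operator survive?"""
    return sum(1 for instruction in block if instruction[1] == op)


redundant = [
    ("t1", "+", "a", "b"),
    ("t2", "+", "b", "a"),   # same value as t1
    ("t3", "*", "t1", "t2"),
]
optimized = local_value_numbering(redundant)
print(count_ops(redundant, "+"), "->", count_ops(optimized, "+"))
```

Writing `local_value_numbering` says nothing about whether it is wired into the pipeline; only something like the `count_ops` comparison, run on the compiler's real output, shows that.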

There is little commenting in the source for the lexer, parser, and machine code generators, i.e., the immediate front end and final back end. There is a fair amount of detailed commenting in source of the intervening phases.

The phases with little commenting are those which require lots of very specific, detailed information that is not often covered in books and papers. I suspect that the prompts for this code contain lots of detailed templates for tokenizing the source, building a tree, and at the back end how to map SSA nodes to specific instruction sequences.

The intermediate phases have more publicly available information that can be referenced in prompts, such as book chapters and particular papers. These prompts would need to be detailed instructions on how to annotate/transform the tree/SSA conveyed from earlier phases.

C compilers on PR1ME computers

At the start of this week I knew almost nothing about the C compilers running on PR1ME computers (every now and again I check bitsavers for newly scanned C compiler manuals).

On Tuesday I spotted the source of the Georgia Tech C Compiler for Prime Computers (which was believed lost until two 35-year-old mag tapes were found); the implementation language is Ratfor.

I posted the information to the comp.compilers newsgroup, and in the ensuing posts I learned about Dennis Boone’s collection of scanned PR1ME manuals, including a C compiler user guide. This C user’s guide is for the Conboy/Pacer software compiler, not the one whose sources I linked to above.

The PR1ME C compiler has the usual assortment of vendor extensions, and missing features, of the kind that might be found in any C compiler from the 1980s; however, there is a larger than usual collection of infrequently seen characteristics. I spotted the following during a quick read through the manual:

- addresses point to 16-bit words (not 8-bit bytes). The implementers could have chosen to define a char as 16-bit (i.e., defining the standard macro CHAR_BIT as 16), but they went with 8-bit chars. Handling 8-bit chars on a word addressed processor means that pointers to char have to include a bit specifying which half of the 16-bits they are pointing to. Some Cray computers had the same issue, except their words contained 64 bits, so more offset bits had to be stored in pointers.

  The definition of an object having type char occupies 8 bits of a word, no other object is allocated in the other 8 bits; arrays of char are packed, two chars to a word.

- ASCII characters have the top-bit set (the manual phrases this as “The basic character set … ASCII 7-bit set (called ASCII-7), with the eighth bit turned on.”). The C standard requires that the ten digit characters have contiguous values, and that the basic character set be representable in a char.

- a pointer type may contain more bits than any supported integer type, i.e., 48-bit pointer and 32-bit integer. PR1ME cpus supported a dizzying array of different modes and instruction sets; some later instruction sets/modes support 48-bit pointers.

- “On some other machines, you may write code in which a function is called with more or fewer parameters than the function actually expects. Such code may work correctly on the 50 Series, but only if the missing or extra parameter is never referenced.”

- “On some other machines, programs run correctly if function return value data types are left undeclared. … If this function is not explicitly defined as returning a pointer, the default return value is type int. Such a program may run correctly on some machines, but not on a 50 Series machine.”

These characteristics combine to make the PR1ME a very unfriendly environment for C programs.

C programmers have a culture, the C way of doing things (these cultures exist for all languages), and the characteristics of this (and perhaps other) PR1ME C compilers run counter to this culture in many ways.

I’m not saying that C culture is good or bad, just that it exists and PR1ME is a very poor fit.

What elements of C culture clash with the PR1ME implementation?

There is an expectation that when two objects having char type are defined sequentially (e.g., char a, b;), it will be possible to access b by adding 1 to a pointer to a (as if the definition had been written as: char a[2];). Yes, this practice is now frowned upon, but it was once considered ok (at least in some circles). On PR1ME the two definitions are not equivalent, from the pointer arithmetic point of view.
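A small model shows why the two definitions differ. The sketch treats a pointer to char as a word address plus a half-word selector, and lays out objects as described earlier (scalars get a word each, arrays are packed). Which half of the word a scalar char occupies is my assumption, and the names are invented; this is not the actual PR1ME pointer format.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CharPtr:
    word: int  # index of a 16-bit word
    half: int  # 0 = first 8 bits of the word, 1 = second 8 bits

    def add(self, n):
        """Pointer arithmetic in units of char."""
        linear = self.word * 2 + self.half + n
        return CharPtr(linear // 2, linear % 2)


def layout_scalars(names, first_word=0):
    """Each scalar char gets a word to itself (assumed to live in half 0)."""
    return {name: CharPtr(first_word + i, 0) for i, name in enumerate(names)}


def layout_array(length, first_word=0):
    """Array elements are packed, two chars to a word."""
    return [CharPtr(first_word, 0).add(i) for i in range(length)]


scalars = layout_scalars(["a", "b"])    # char a, b;
array = layout_array(2, first_word=10)  # char a[2];

after_a = scalars["a"].add(1)
print("&a + 1   ->", after_a)
print("&b       ->", scalars["b"])
print("&a[0]+1  ->", array[0].add(1), "and &a[1] ->", array[1])
```

In this model, adding 1 to a pointer to a lands on the unused half of a's word, while b sits in the next word; for the array the same arithmetic reaches the second element.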

Very many developers assume that C characters use ASCII. Most of the time they do, but they are not required to; EBCDIC being perhaps the most well known alternative. At least in the PR1ME encoding the alphabetic characters have contiguous values, which they don’t in EBCDIC. But setting the top bit, hmmm….
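The encoding described in the manual is simple to model, which makes the comparison with EBCDIC easy to check. In the sketch below, cp037 (one of several EBCDIC code pages shipped with Python) stands in for EBCDIC, and the signed interpretation is only a what-if: I have not checked whether char was signed on PR1ME.

```python
def prime_encode(ch):
    """7-bit ASCII code with the eighth bit turned on."""
    code = ord(ch)
    if code > 0x7F:
        raise ValueError("not a 7-bit ASCII character")
    return code | 0x80


def ebcdic_encode(ch):
    """Code in Python's cp037 codec, one of several EBCDIC code pages."""
    return ch.encode("cp037")[0]


def is_contiguous(chars, encode):
    """True if each character's code is one more than its predecessor's."""
    codes = [encode(c) for c in chars]
    return all(b - a == 1 for a, b in zip(codes, codes[1:]))


def as_signed_char(code):
    """Value of an 8-bit pattern if char were a signed two's complement type."""
    return code - 256 if code >= 0x80 else code


digits = "0123456789"
lowercase = "abcdefghijklmnopqrstuvwxyz"

print("PR1ME digits contiguous: ", is_contiguous(digits, prime_encode))
print("PR1ME letters contiguous:", is_contiguous(lowercase, prime_encode))
print("EBCDIC letters contiguous:", is_contiguous(lowercase, ebcdic_encode))
print("code for '0':", hex(prime_encode("0")), "vs ASCII", hex(ord("0")))
```

Code that relies on ch - '0' keeps working, but anything that hard-codes ASCII values (e.g., 48 for '0'), or assumes character codes are below 128, does not.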

The assumption that sizeof(int) == sizeof(pointer_type) is endemic in 1980s code, and much code in later decades; many (not so young) C programmers will tell you the story of the first time they had to switch mind-sets to: sizeof(long) == sizeof(pointer_type). Not having a 48-bit integer type is a bit of a killer for C on PR1ME, as we will find out.
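The damage done by storing a pointer in an integer that is too narrow can be shown with plain numbers. The sketch treats a pointer as a bare 48-bit value, which is a simplification of mine (a real PR1ME pointer has internal structure that is not modelled here), and the names are hypothetical.

```python
def store_in_int(value, bits=32):
    """Keep only the low-order bits, as happens when a value is squeezed
    into an integer type that is too narrow (modelled as unsigned)."""
    return value & ((1 << bits) - 1)


pointer_48 = 0x1234_5678_9ABC  # an arbitrary 48-bit pattern
round_trip = store_in_int(pointer_48, bits=32)

print(hex(pointer_48), "->", hex(round_trip))
```

The top 16 bits are gone after the round trip, so code that passes pointers through int only works for values that happen to fit in 32 bits.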

PR1MOS, the vendor operating system, uses a function call stack layout that assumes a function definition specifies exactly how the function will be called, e.g., the number and type of parameters, and the return type.

In the early decades of C, programmers were very lax about specifying exactly what arguments a function expected, and relied on an omitted return type implicitly specifying an int return type (and since everybody knew that sizeof(int) == sizeof(pointer_type), this meant that it was not necessary to specify pointer return types).

During development, having the program raise an exception, or whatever the behavior was, when a function call did not match the defined type of the function, is useful; it improves program reliability by catching those cases that might work as expected a lot of the time, but not all the time.

A lot of existing code was created on systems that were forgiving of function call/definition mismatches. Such C source is unlikely to just compile and run under PR1MOS, i.e., porting other people’s programs is likely to be a time-consuming process.
