Where are the dead bodies?

The possibility of faults in software causing death or serious injury is often talked about and in some cases large amounts of money are invested in work to reduce the possibility of these events occurring (or at least doing things that will support the view that a company took reasonable precautions, should a case end up in court). The Therac-25 accidents are an often quoted example of a software fault that directly resulted in deaths. These accidents occurred over a 19 month period in the mid 1980s and are believed to have resulted in the death of six people. I don’t wish to disrespect the memory of the people who died, but six people 20 years ago; is that it? Less than the number of people killed every day (around 10) in traffic accidents in the UK.

If faults in software really do have a non-trivial impact on human safety then we would expect this fact to be reflected in accident statistics. After searching the accident statistics for the UK I cannot find any whose cause is directly attributed to software. If there are people who have died as a direct result of faults in software, the death rate has not yet reached the minimum level needed to be recorded as such (or are these deaths ‘hidden’ away in ones and twos within other causes?)

The US National Transportation Safety Board carries out a thorough investigation of all US aviation accidents. Searching the Aviation Accident Database on the query “software” between the dates 1 Jan 2000 and 9 Aug 2005 returns 44 matches. Reading these 44 reports I did not find any accident attributed to a software related issue.

If faults in software are not killing or seriously injuring many people why is so much effort invested in reducing the probability of these events occurring? The following are some of the possibilities:

- The investment actually made is small, but it is talked up.

- The investment is made for economic reasons (e.g., more reliable products are likely to reduce support costs) and increased ‘safety’ is a side effect.

- In situations where there is a likelihood of death or serious injury the procedures and reliability of non-software items are sufficient to short-circuit the effects of any life threatening faults that may exist in the software used (at least until the fault can be corrected).

As any developer knows, replicating faulty behavior in software can be very difficult, if not impossible. It may be that software faults are not given as the root cause of death or serious injury because the necessary proof is not available. Or perhaps software faults have yet to be the root cause of such events on any non-trivial scale.

Existing practice affects what people are willing to put up with. Many users of Microsoft Windows now accept that it is necessary to reboot the computer they are using on a daily, or even hourly, basis. Users of cars accept that the tool they are using can result in serious injuries or even death (usually rating nothing more than a story in the local town newspaper). Will there be a public hue and cry once software faults start to be recorded as a primary factor in accidental death or serious injury? As this paper shows, it can take a lot of dead bodies before existing practices are changed.

The lack of dead bodies attributed to a software root cause suggests that it is still very early days for the field of high integrity software development.

This material was originally written in 2005 and appeared in an earlier blog of mine which I did not keep up.

sizeof i++

It is quite common for coding guideline documents to contain at least one guideline recommending against the use of a construct that developers very rarely use, for instance: “The operand of the sizeof operator shall not contain side-effects.”

    ... sizeof i++; // Is the author expecting i to be incremented?
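The behavior the guideline is worried about can be checked directly. The following is a minimal sketch (the function name `value_after_sizeof` is made up for illustration): the operand of sizeof is not evaluated, so the increment never happens. The one exception in C99 is an operand whose type is a variable length array, which is not the case here.

```c
#include <stddef.h>

/* Returns the value of i after the statement containing sizeof i++. */
int value_after_sizeof(int i)
{
    size_t size = sizeof i++;   /* the operand i++ is not evaluated */

    (void)size;                 /* only the size of an int was computed */
    return i;                   /* i still has the value passed in */
}
```

Some compilers warn about the unevaluated operand, which is arguably all the help a developer needs.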

Why do such recommendations get incorporated into guideline documents? The obvious answer is that their author(s) are unaware of actual developer usage and believe the recommendation has value.

I have heard people claim that such recommendations are harmless; after all, the adherence cost is minimal. Besides, the fact that code very likely already adheres to it will increase the “pass” rate for the set of guidelines the first time developers check their code. However, such guidelines unjustifiably increase people's confidence in software (as measured by the number of guidelines adhered to). They not only fail to add value to a set of coding guidelines, but their presence could result in the probity of the other guidelines being questioned.

I continue to be surprised by the amount of resistance encountered by my attempts to have the “sizeof” guideline removed from, or not included in, a set of coding guidelines. In the case of an existing set of guidelines there is obviously a resistance to change, but I have not yet managed to extract a single promise to consider removing the guideline in a future revision.

People seem unimpressed by the amount of source code I have searched in a vain attempt to find a violation of the “sizeof” guideline, but they often have some vague memory of having seen an instance of this elusive usage. My questions asking after the name of the source file, the name of the program, the name of the project, or simply the name of the company they were working for at the time are greeted with uncertainty. Perhaps the only instance they have seen is in the example underneath the text of the recommendation? Growls and pointed looks.

Another factor is existing practice: if a guideline appears in other guideline documents it must have some benefit. People don’t want to go out on a limb. Besides, basing decisions on measurement of source code, who does that these days?

Whatever else might be said about the “sizeof” guideline, it does make a great example that developers can use to regale management.

Superior tone: Less experienced developers

Earnest voice: don’t always have a complete understanding of C/C++

Shock: They make the mistake of thinking

Talking very fast: that the code sizeof i++

Incredulity: will cause i to be incremented!

Emphasis: This guideline

Relieved voice: ensures that this mistake is warned about.

This article originally appeared in an earlier blog of mine which I did not keep up.

Compiler writing: The career path to World domination

Compiler writing is not usually thought of as a career path that leads to becoming Ruler of the World. Perhaps this is because compiler writing is a relatively new profession and us compiler writers are still toiling in obscurity awaiting the new dawn.

What might be a workable plan for a compiler writer to become Ruler of the World? One possibility is to write a compiler for the language in which most of the World’s critical software is written (i.e., C) and for that compiler to become the one that the vendors of this critical software all use (i.e., gcc). This compiler needs to do more than just compile the source code it is fed; it also needs to generate code that creates a backdoor in important programs (e.g., the login program).

But, you say, this cannot happen with gcc because its source is available for everybody to read (and spot any backdoor generator). In his 1984 Turing acceptance lecture Ken Thompson showed how a compiler could contain a backdoor that was not visible in its source. The idea is for the compiler writer to modify a compiler to detect when it is being used to compile itself and to insert the backdoor generating code into its own executable. This modified compiler is then used to compile itself and the resulting executable made the default compiler; the backdoor modifications are then removed from the compiler source, they are no longer needed because the previously compiled compiler will spot when it is being used to compile its own source and generate the code necessary to propagate the backdoor code into the executable it creates.

How would the world counter the appearance of such a modified gcc? Obviously critical programs would need to be recompiled by a version of gcc that did not contain the backdoor. Today there are several companies and many amateur groups that distribute their own Linux distributions which they build from source. It should be relatively easy to obtain a usable executable of gcc from 10 years ago; remember what is needed is a version capable of compiling the latest gcc sources.

The ideal time to create a backdoor’ed version of gcc was while its development was under the control of one person, so early in the development history that all versions available anywhere are very likely to be derived from it. How can we prove that the original author of gcc did not do just this?

It could be argued that the very substantial changes to the gcc sources (most of the source has probably been rewritten several times) mean that the coding patterns searched for by the executable to detect that it is compiling itself have long gone and at some point the backdoor failed to propagate itself to the next executable.

Compilers other than gcc might also include backdoors that propagate themselves. However, the method of propagation is likely to be different. Compiling the gcc sources with a non-gcc compiler creates an executable that should exhibit the same behavior as a gcc-compiled executable. Differences in the behavior of these independently built executables are a cause for concern (one difference might be caused by differences in the conversion of floating-point literals, a recent PhD thesis provides more detail).

The problem with compiling the gcc sources is that they make use of language extensions that few, if any, other compilers support. I know IBM modified one of their C compilers to support those gcc extensions needed to compile the Linux kernel, but I don’t know if this compiler is capable of compiling the gcc sources. The LLVM project intended to support many gcc extensions but I don’t know if they aim to be able to compile the gcc sources.

Another option is to compare the assembler generated when gcc compiles itself against the corresponding source code. A very expensive task for source code measured in hundreds of thousands of lines. Adding the necessary language extension support to another compiler would probably be cheaper and also create a tool that could be used to check future releases of gcc.

CPUs also exhibit hardware faults

The cpu is the one element of a computing platform that people rarely treat as a source of error caused by physical malfunction, i.e., randomly flipping a bit in a register or instruction pipeline. I once worked on a compiler for the Motorola 88000 using a test platform that contained alpha silicon (i.e., not yet saleable components where some of the instructions were known not to work; the generated assembler code was piped through a sed script that mapped these instructions into an alternative instruction sequence that did work) and the cpus in a few of the hardware updates turned out to be temperature sensitive; some of the instructions changed their behavior when they got too hot. People who write compilers using alpha silicon learn to expect this sort of thing.

Quite a bit has been published on faults in other hardware components. Some of the best recent empirical hardware fault data and analysis I have seen is that published by Google engineers on hard disc and dram memory fault occurrences in their server farms. They might have a problem publishing such results for the cpus they use because these commodity items generally don’t have the ability to report any detailed fault data, they just die or one of the programs being executed crashes.

As device fabrication continues to shrink erroneous behavior caused by cosmic ray impact will become more and more common. Housing a computer farm at a high altitude might not be a good idea (at 7500 ft cosmic ray-induced neutrons that can lead to soft errors are 6.4 times more common than at sea level).

IBM’s Power4 chip (“Power4 System Design for High Reliability” by Bossen, Tendler and Reick) is one of the few that provides error checking of cache contents, while IBM’s System z9 is one of the very few that provide parity checking on the cpu registers (“Enhanced I/O subsystem recovery and availability on the IBM System z9” by Oakes et al).

One solution to the problem of unreliable cpu behavior is for the compiler to insert consistency checks into the generated code. Two such checking methods are:

- ‘Signature Analysis’ which performs consistency checks between signatures calculated at compile time and runtime. A signature is associated with every basic block with the current signature being derived from the execution history. This technique can detect spurious changes to the flow of control caused by a hardware glitch.

- ‘Error Detection by Duplicated Instructions’ generates code which duplicates the behavior of some instruction sequence and compares the result calculated by both sequences, i.e., a source language construct is executed twice and an error raised if the results are different. The parallel instruction sequences use different sets of registers on the same cpu and ideally the instructions are scheduled to exploit instruction level parallelism.
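Both checking methods can be imitated by hand at the source level, as in the following minimal sketch. A compiler would insert the equivalent checks into the generated code, after optimization; here the function names, the `STEP` mixing function and the block numbering are all assumptions made for illustration, and `volatile` is used only to stop the compiler merging the duplicated computation.

```c
#include <stdbool.h>

/* Fold a basic block id into the running signature. */
#define STEP(sig, id) ((sig) * 31u + (id))

/* Signatures of the two legal paths to the exit block, known at compile time. */
#define SIG_VIA_THEN STEP(STEP(0u, 1u), 2u)
#define SIG_VIA_ELSE STEP(STEP(0u, 1u), 3u)

/* Signature analysis: returns |x| and reports whether the exit block was
   reached along a legal path (x == INT_MIN is not handled in this sketch). */
int abs_with_signature(int x, bool *flow_ok)
{
    unsigned sig = STEP(0u, 1u);          /* block 1: entry */
    int r;

    if (x < 0) {
        sig = STEP(sig, 2u);              /* block 2: then arm */
        r = -x;
    } else {
        sig = STEP(sig, 3u);              /* block 3: else arm */
        r = x;
    }
    /* block 4: exit, compare the execution history with the expected values */
    *flow_ok = (sig == SIG_VIA_THEN || sig == SIG_VIA_ELSE);
    return r;
}

/* Duplicated instructions: add twice on separate copies and compare. */
bool add_with_duplicate(int a, int b, int *sum)
{
    volatile int a1 = a, b1 = b;          /* master copy */
    volatile int a2 = a, b2 = b;          /* shadow copy */
    int r1 = a1 + b1;
    int r2 = a2 + b2;

    if (r1 != r2)
        return false;                     /* the copies disagree: report a fault */
    *sum = r1;
    return true;
}
```

On fault-free hardware both checks always pass, which is the point: they only cost time until the day a bit flips.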

At the moment cosmic-ray induced hardware faults are probably very small change compared to faults in the code. Will code quality increase to the point where cosmic-ray faults become an issue or will devices get so small that they have to be lead lined to prevent background radiation corrupting them? Let the race begin.

Register vs. stack based VMs

Traditionally the virtual machine architecture of choice has been the stack machine; benefits include simplicity of VM implementation, ease of writing a compiler back-end (most VMs are originally designed to host a single language) and code density (i.e., executables for stack architectures are invariably smaller than executables for register architectures).

For a stack architecture to be an effective solution, two conditions need to be met:

- The generated code has to ensure that the top of stack is kept in sync with where the next instruction expects it to be. For instance, on its return a function cannot leave stuff lying around on the stack like it can leave values in registers (whose contents can simply be overwritten).

- Instruction execution needs to be generally free of state, so an add-two-integers instruction should not have to consult some state variable to find out the size of integers being added. When the values of such state variables have to be saved and restored around function calls, they effectively become VM registers.

Cobol is one language where it makes more sense to use a register based VM. I wrote one and designed two machine code generators for the MicroFocus Cobol VM and always find it difficult to explain to people what a very different kind of beast it is compared to the VMs usually encountered.

Parrot, the VM designed as the target for compiled PERL, is register based. A choice driven, I suspect, by the difficulty of ensuring a consistent top-of-stack and perhaps the dynamic typing of the language.

On register based cpus with 64k of storage, the code density benefits of a stack based VM are usually sufficient to cancel out the storage overhead of the VM interpreter and support a more feature rich application (provided speed of execution is not crucial).

If storage capacity is not a significant issue and a VM has to be used, what are the runtime performance differences between a register and stack based VM? Answering this question requires compiling and executing the same set of applications for the two kinds of VM. Something that until 2001 nobody had done, or at least not published the results.

A comparison of the Java (stack based) VM with a register VM (The Case for Virtual Register Machines) found that while the stack based code was more compact, fewer instructions needed to be executed on the register based VM.

Most VM instructions are very simple and take relatively little time to execute. When hosted on a pipelined processor the main execution time overhead of a VM is the instruction dispatch (Optimizing Indirect Branch Prediction Accuracy in Virtual Machine Interpreters) and reducing the number of VM instructions executed, even if they are larger and more complicated, can produce a worthwhile performance improvement.
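The difference in dispatch counts can be seen in a toy example. The following is a minimal sketch of two interpreters, with made-up instruction sets and names, both executing `vars[0] = vars[1] + vars[2]`; it does no bounds checking and is not modeled on any particular VM.

```c
#include <stddef.h>

/* Stack VM: operands are implicit (top of stack). */
enum stack_op { S_LOAD, S_ADD, S_STORE, S_HALT };
struct stack_insn { enum stack_op op; int slot; };

/* Register VM: operands are named in the instruction. */
enum reg_op { R_ADD, R_HALT };
struct reg_insn { enum reg_op op; int dst, src1, src2; };

/* Both interpreters return the number of instructions dispatched. */
size_t run_stack(const struct stack_insn *code, int *vars)
{
    int stack[16];
    size_t sp = 0, dispatched = 0;

    for (;; code++) {
        dispatched++;
        switch (code->op) {
        case S_LOAD:  stack[sp++] = vars[code->slot];  break;
        case S_ADD:   sp--; stack[sp - 1] += stack[sp]; break;
        case S_STORE: vars[code->slot] = stack[--sp];  break;
        case S_HALT:  return dispatched;
        }
    }
}

size_t run_reg(const struct reg_insn *code, int *vars)
{
    size_t dispatched = 0;

    for (;; code++) {
        dispatched++;
        switch (code->op) {
        case R_ADD:  vars[code->dst] = vars[code->src1] + vars[code->src2]; break;
        case R_HALT: return dispatched;
        }
    }
}

/* vars[0] = vars[1] + vars[2], compiled for each VM. */
const struct stack_insn stack_prog[] = {
    { S_LOAD, 1 }, { S_LOAD, 2 }, { S_ADD, 0 }, { S_STORE, 0 }, { S_HALT, 0 }
};
const struct reg_insn reg_prog[] = {
    { R_ADD, 0, 1, 2 }, { R_HALT, 0, 0, 0 }
};
```

The stack program goes round the dispatch loop five times and the register program twice, while the register instruction needs three operand fields against the stack instruction's one; this is the density versus dispatch trade-off in miniature.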

Google has chosen a register based VM for its Android platform. While licensing issues may have been a consideration, there are a number of technical advantages to this decision:

- A register VM is likely to have an intrinsic performance advantage over a stack VM when hosted on a pipelined processor.

- Byte code verification is likely to be faster on a register VM (i.e., faster startup times) because stack height integrity checks will be greatly simplified.

- A register VM will be more forgiving of incorrect code (a fault in the VM, code incorrectly generated by the compiler, code corrupted during program transmission or storage, or code attacked by malware) than a stack VM.

Using Coccinelle to match if sequences

I have been using Coccinelle to obtain measurements of various properties of C if and switch statements. It is rare to find a tool that does exactly what is desired, but it is often possible to combine various tools to achieve the desired result.

I am interested in measuring sequences of if-else-if statements and one of the things I wanted to know was how many sequences of a given length occurred. Writing a pattern for each possible sequence was the obvious solution, but what is the longest sequence I should search for? A better solution is to use a pattern that matches short sequences and writes out the position (line/column number) where they occur in the code, as in the following Coccinelle pattern:

    @ if_else_if_else @
    expression E_1, E_2;
    statement S_1, S_2, S_3;
    position p_1, p_2;
    @@

    if@p_1 (E_1)
       S_1
    else if@p_2 (E_2)
       S_2
    else
       S_3

    @ script:python @
    expr_1 << if_else_if_else.E_1;
    expr_2 << if_else_if_else.E_2;
    loc_1 << if_else_if_else.p_1;
    loc_2 << if_else_if_else.p_2;
    @@
    print "--- ifelseifelse"
    print loc_1[0].line, " ", loc_1[0].column, " ", expr_1
    print loc_2[0].line, " ", loc_2[0].column, " ", expr_2

noting that in a sequence of source such as:

    if (x == 1)
       stmt_1;
    else if (x == 2)
       stmt_2;
    else if (x == 3)
       stmt_3;

the tokens if (x == 2) will be matched twice, the first setting the position metavariable p_2 and then setting p_1. An awk script was written to read the Coccinelle output and merge together adjacent pairs of matches that were part of a longer if-else-if sequence.
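The merging step was done in awk and that script is not shown here. The logic might look something like the following minimal sketch in C, which assumes that matches are reported in source order, so that a pair continuing a sequence directly follows the pair it extends; the type and function names are made up for illustration.

```c
#include <stdbool.h>
#include <stddef.h>

struct pos   { int line, column; };
struct match { struct pos first, second; };   /* p_1 and p_2 of one reported pair */

static bool same_pos(struct pos a, struct pos b)
{
    return a.line == b.line && a.column == b.column;
}

/*
 * Writes the number of if-statements in each merged sequence to len_out
 * (which needs room for n entries) and returns the number of sequences.
 */
size_t merge_pairs(const struct match *m, size_t n, int *len_out)
{
    size_t sequences = 0;

    for (size_t i = 0; i < n; i++) {
        if (i > 0 && same_pos(m[i].first, m[i - 1].second))
            len_out[sequences - 1]++;    /* this pair's p_1 is the previous pair's p_2 */
        else
            len_out[sequences++] = 2;    /* an unrelated pair starts a new sequence */
    }
    return sequences;
}
```

Counting how many sequences of each length occur is then a matter of building a histogram from `len_out`.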

The first pattern did not concern itself with the form of the controlling expression, it simply wrote it out. A second set of patterns was used to match those forms of controlling expression I was interested in, but first I had to convert the output into syntactically correct C so that it could be processed by Coccinelle. Again awk came to the rescue, converting the output:

    --- ifelseifelse
    186 2 op == FFEBLD_opSUBRREF
    191 7 op == FFEBLD_opFUNCREF
    --- ifelseifelse
    1094 3 anynum && commit
    1111 8 ( c [ colon + 1 ] == '*' ) && commit

into a separate function for each matched sequence:

    void f_1(void) {
    // --- ifelseifelse
    /* 186 2 */ op == FFEBLD_opSUBRREF ;
    /* 191 7 */ op == FFEBLD_opFUNCREF ;
    }
    void f_2(void) {
    // --- ifelseifelse
    /* 1094 3 */ anynum && commit ;
    /* 1111 8 */ ( c [ colon + 1 ] == '*' ) && commit ;
    }

The Coccinelle pattern:

    @ if_eq_1 @
    expression E_1;
    constant C_1, C_2;
    position p_1, p_2;
    @@

    E_1 == C_1@p_1 ;
    E_1 == C_2@p_2 ;

    @ script:python @
    expr_1 << if_eq_1.E_1;
    const_1 << if_eq_1.C_1;
    const_2 << if_eq_1.C_2;
    loc_1 << if_eq_1.p_1;
    loc_2 << if_eq_1.p_2;
    @@
    print loc_1[0].line, " ", loc_1[0].column, " 3 ", expr_1, " == ", const_1
    print loc_2[0].line, " ", loc_2[0].column, " 2 ", expr_1, " == ", const_2

matches a sequence of two statements which consist of an expression being compared for equality against a constant, with the expression being identical in both statements. Again positions were written out for post-processing, i.e., joining together matched sequences.

I was interested in any sequence of if-else-if that could be converted to an equivalent switch-statement. An equality test against a constant is just one form of controlling expression that meets this requirement; another is the between operation. Separate patterns could be written and run over the generated C source containing the extracted controlling expressions.

Breaking down the measuring process into smaller steps reduced the amount of time needed to get a final result (with Coccinelle 0.1.19 the first pattern takes around 70 minutes, a figure that owes much to Julia Lawall's work to speed things up, and this overhead only has to occur once) and allows the same controlling expression patterns to be run against the output of both the if-else-if and if-if patterns.

At the end of this process I ended up with a list of information (line numbers in source code and form of controlling expression) on if-statement sequences that could be rewritten as a switch-statement.
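As an illustration of the kind of rewrite being looked for, here is a minimal sketch of an if-else-if sequence mixing an equality test and a between test, together with a switch-statement that behaves the same way. The names and the bounds 1 and 3 are invented for the example; the switch lists each value in the range explicitly because standard C has no range form of case label.

```c
enum kind { KIND_ZERO, KIND_SMALL, KIND_OTHER };

/* The form found in the source: equality and between tests on x. */
enum kind classify_if(int x)
{
    if (x == 0)
        return KIND_ZERO;
    else if (x >= 1 && x <= 3)
        return KIND_SMALL;
    else
        return KIND_OTHER;
}

/* The equivalent switch-statement. */
enum kind classify_switch(int x)
{
    switch (x) {
    case 0:
        return KIND_ZERO;
    case 1: case 2: case 3:        /* the between test, one label per value */
        return KIND_SMALL;
    default:
        return KIND_OTHER;
    }
}
```

A between test with widely separated bounds would need a lot of case labels, so not every match is a practical candidate for conversion.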

GLR parsing is the future

Traditionally parser generators have required that their input grammar be LALR(1) or some close variant (I would include LL(1) in this set). Back when 64k was an unimaginably large amount of memory, being able to squeeze parser tables into a few kilobytes was very important; people received PhDs on parser table compression.

There is still a market for compact, fast parsers. Formal language grammars abound in communication protocols and vendors of communications hardware are very interested in keeping down costs by minimizing the storage needed by their devices.

The trouble with LALR(1) is that value 1. It means that the parser only looks ahead one token in the input stream. This often means that a grammar is flagged as being ambiguous (i.e., it contains shift/reduce or reduce/reduce conflicts) when it is actually just locally ambiguous, i.e., reading tokens further ahead in the input stream would provide sufficient context to unambiguously specify the appropriate grammar production.

Restructuring a grammar to make it LALR(1) requires a lot of thought and skill and inexperienced users often give up. I once spent a month trying to remove the conflicts in the SQL/2 grammar specified by the SQL ISO standard; I managed to get the number down from over 1,000 to a small number that I decided I could live with.

It has taken a long time for parser generators to break out of the 64k mentality, but over the last few years it has started to happen. There have been two main approaches: 1) LR(n) provides a mechanism to look further ahead than one token, ie,

I think that GLR parsing is the future for two reasons:

- It is supported by the most widely used parser generator, bison.

- It enables working parsers to be created with much less thought and effort than a LALR(1) parser. (I don’t know how it compares against LR(n)).

GLR parsers resolve any language ambiguities by effectively delaying decisions until runtime in the hope that reading enough tokens will resolve local ambiguities. If an ambiguity in the token stream cannot be resolved a runtime error occurs (this is the one big downside of a GLR parser, the parser generated by a LALR(1) parser generator may produce lots of build time warnings but never produces errors when the parser is executed).

One example of a truly ambiguous construct (discussed here a while ago) is:

    x * y;

which in C/C++ could be a declaration of y to be a pointer to x, or an expression that multiplies x and y.
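The two readings can be put side by side in a minimal sketch (the function names are made up). The token sequence is the same in both functions; what differs is whether x has been declared as a type, which is information a parser working from the grammar alone does not have.

```c
/* x and y are objects, so x * y is a multiplication. */
int expression_reading(void)
{
    int x = 6, y = 7;

    return x * y;
}

/* x names a type, so x * y; declares y as a pointer to x. */
int declaration_reading(void)
{
    typedef int x;
    int target = 42;

    x * y;              /* declaration: y has type int * */
    y = &target;
    return *y;
}
```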

Tools that can detect these global ambiguities in a grammar are starting to appear, e.g., DTWA is a bison extension.

I reviewed an early draft of the new O’Reilly book “flex & bison” and tried to get the author to be more upbeat on GLR support in bison; I think I got him to be a bit less cautious.

To if-else-if or if-if, that is the question

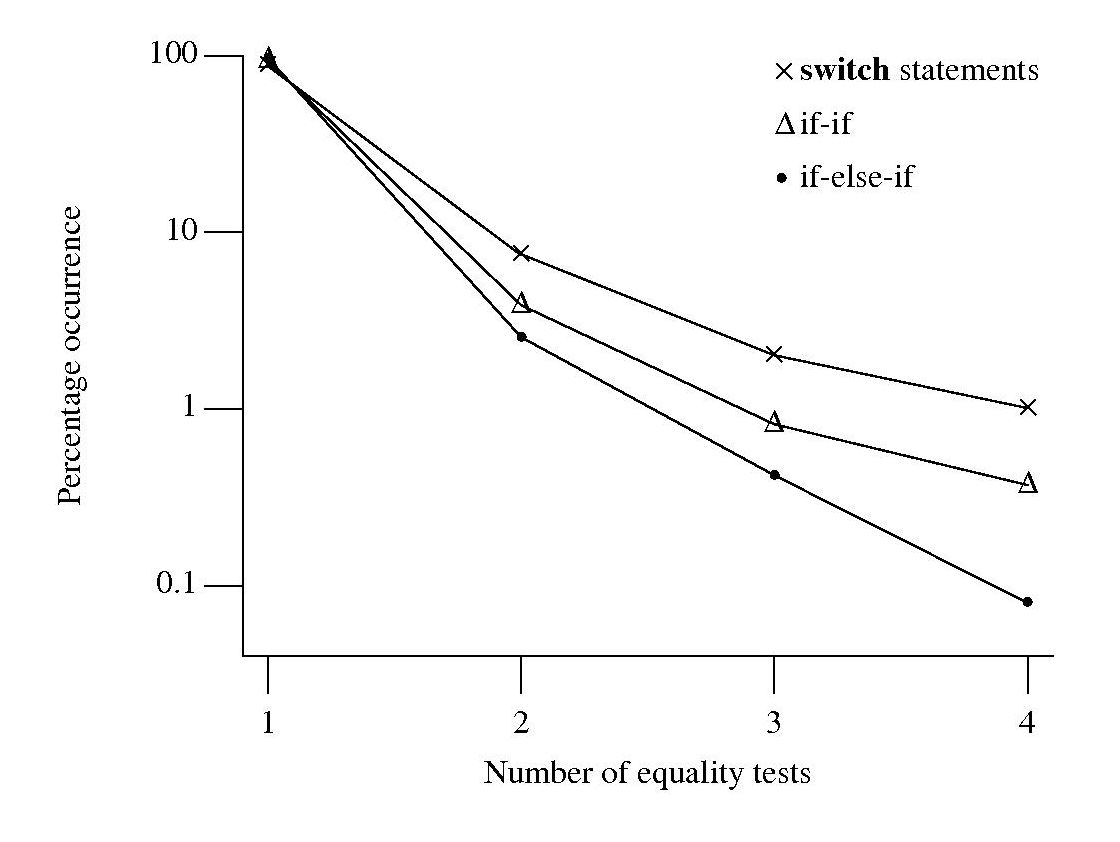

I am currently measuring if-statements, occurring in visible source, that might be mapped to an equivalent switch-statement. The most obvious usage to look for is a sequence of if-else-if statements that all involve the same expression being tested against an integer constant, as in

    if (x == 1)
       stmt_1;
    else if (x == 2)
       stmt_2;
    else if (x == 3)
       stmt_3;

Another possible sequence is:

    if (x == 1)
       stmt_1;
    if (x == 2)
       stmt_2;
    if (x == 3)
       stmt_3;

provided all but the last conditionally executed arms do not change the value of the common control variable (e.g., x).
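The proviso matters, as the following minimal sketch shows (function names invented for the example). Each function counts how many arms are executed, and the first arm modifies x; for an initial value of 1 the if-else-if form executes one arm and the if-if form executes two.

```c
/* if-else-if: at most one arm is ever executed. */
int arms_run_else_if(int x)
{
    int arms = 0;

    if (x == 1) {
        arms++;
        x = 2;          /* modifies the common control variable */
    } else if (x == 2) {
        arms++;
    }
    return arms;
}

/* if-if: the second test sees the modified value of x. */
int arms_run_if_if(int x)
{
    int arms = 0;

    if (x == 1) {
        arms++;
        x = 2;          /* modifies the common control variable */
    }
    if (x == 2) {
        arms++;
    }
    return arms;
}
```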

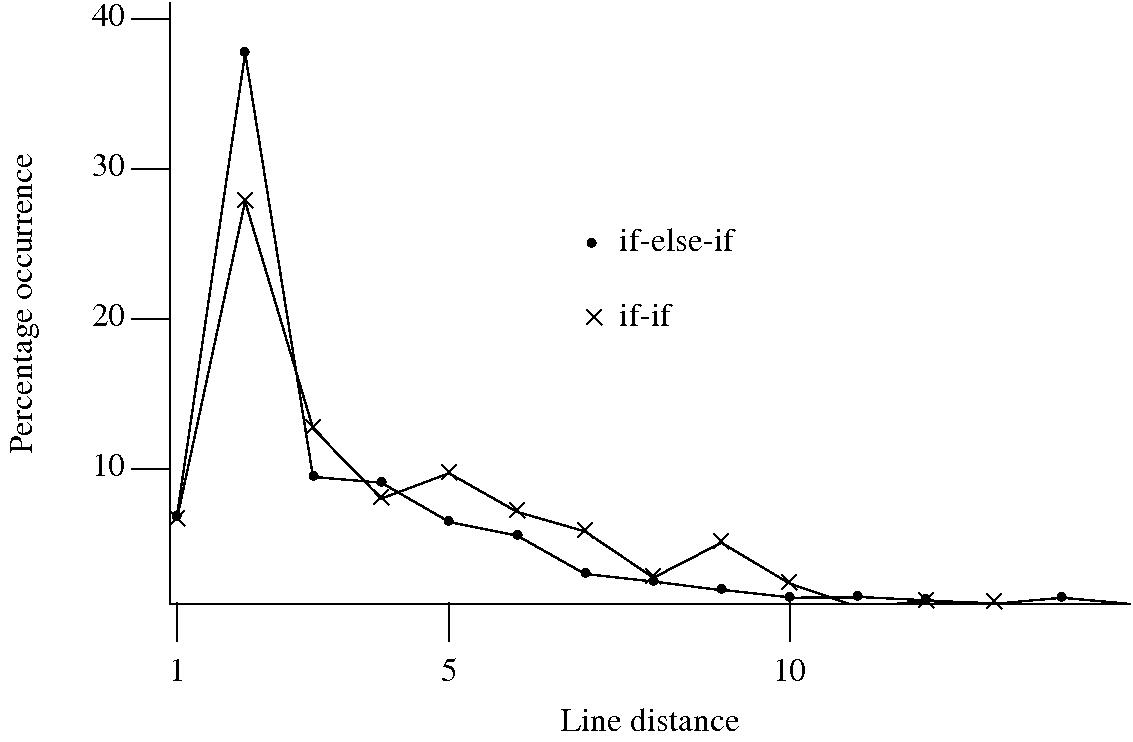

I started to wonder about what would cause a developer to choose one of these forms over the other. Perhaps the if-if form would be used when it was obvious that the common conditional variable was not modified in the conditionally executed arm. This would imply that there would be more statements in the arms of if-else-if sequences than if-if sequences. The following plot of percentage occurrence (over all detected if-else-if/if-if forms) of line number difference between pairs of associated if-statements (e.g., when the controlling expression occurs on line

Just over a quarter of the arms contain a single statement (or to be exact the code is contained on a single line); this suggests that when using the if-else-if form most developers put the else and if on the same line. At the next distance along the percentage of if-else-if forms is twice as great as the if-if, probably because of else and if appearing on separate lines (as in the introductory example) in one case and less frequently a comment/blank line in the other. Next along, why the big increase in if-if forms? A comment + blank line, or perhaps no comment or blank line but the use of curly brackets (this is too off the track of where I am supposed to be going to investigate).

This morning I realized why the original plot did not look right: one of the data sets was a long way off adding up to 100%. An updated version has been uploaded.

It turns out that a single statement (or at least a single line) is more common in the if-else-if form, the opposite of what I had expected. At slightly larger distances there are still differences that can be attributed to else and if appearing on separate lines, curly brackets and a comment/blank line, but the effect is not as large as seen in the original, less accurate, plot.

I have a feeling that I ought to say something about the if-else-if form being preferred to the if-if form. One of the forms will have its behavior changed if the common control variable is modified in one of its arms. But is this an intended or unintended behavior? What is the typical characteristic usage of a common control variable, e.g., do they tend to be accessed but not modified in a given function definition? At the moment I see no obvious cost or benefit strongly favoring one usage over the other, so I will remain silent on the issue.

Implementing the between operation

What code do developers write to check whether a value lies between two bounds (i.e., a between operation)? I would write (where MIN and MAX might be symbolic names or numeric literals):

    if ( x >= MIN && x <= MAX )

that is, I would check the lowest value first. Performing the test in this order just seems the natural thing to do, perhaps because I live in a culture that writes left to right and a written sequence of increasing numbers usually has the lowest number on the left.

I am currently measuring various forms of if-statement conditional expressions that occur in visible source as part of some research on if/switch usage by developers and the between operation falls within the set of expressions of interest. I was not expecting to see any usage of the form:

    if ( x <= MAX && x >= MIN )

that is with the maximum value appearing first. The first program measured threw up seven instances of this usage, all with the minimum value being negative and in five cases the maximum value being zero. Perhaps left to right ordering still applied, but to the absolute value of the bounds.
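For reference, the two orderings written out as functions, in a minimal sketch with invented names. When x has no side effects the two forms give the same result, so the choice between them is purely one of how the developer thinks about the bounds. A call such as `between_max_first(x, -5, 0)` has the shape of the instances described above; the value -5 is made up.

```c
#include <stdbool.h>

/* Minimum tested first: the order I would write. */
bool between_min_first(int x, int min, int max)
{
    return x >= min && x <= max;
}

/* Maximum tested first: the order I was not expecting to see. */
bool between_max_first(int x, int min, int max)
{
    return x <= max && x >= min;
}
```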

Measurements of the second and subsequent programs threw up instances that did not follow any of the patterns I had dreamt up. Of the 326 between operations appearing in the measured source 24 had what I consider to be the unnatural order. Presumably the developers using this form of between consider it to be natural, so what is their line of thinking? Are they thinking in terms of the semantics behind the numbers (in about a third of cases symbolic constants appear in the source rather than literals) and this semantics has an implied left to right order? Perhaps the authors come from a culture where the maximum value often appears on the left.

Suggestions welcome.
